Connect BigQuery for Warehouse-Native Experiment Analysis


Overview

Warehouse-native experiment analysis lets you run statistical computations directly in your data warehouse.

To set this up for BigQuery, connect a BigQuery service account to Datadog and configure your experiment settings. This guide covers:

- Preparing Google Cloud resources

- Granting permissions to the Datadog service account

- Configuring experiment settings in Datadog

Prerequisites

Datadog connects to BigQuery through a Google Cloud service account. If you already have a service account connected to Datadog, skip to Step 1. Otherwise, create one by following the steps below.

Create a Google Cloud service account


- Open your Google Cloud console.

- Navigate to IAM & Admin > Service Accounts.

- Click Create service account.

- Enter the following:

  - Service account name.

  - Service account ID.

  - Service account description.

- Click Create and continue.

- Note: The Permissions and Principals with access settings are optional here. These are configured in Step 2.

- Click Done.

After you create the service account, continue to Step 1 to set up the Google Cloud resources.
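The console steps above can also be expressed as a single `gcloud` command. The following is a minimal sketch that only builds the command and the resulting service account email; it does not run anything. The project ID `datadog-sandbox` is the example project used in this guide, while the account ID `datadog-experiments`, the display name, the description, and the helper function names are hypothetical.

```python
import shlex


def service_account_email(account_id: str, project_id: str) -> str:
    """Return the email Google Cloud assigns to a user-managed service account."""
    return f"{account_id}@{project_id}.iam.gserviceaccount.com"


def create_service_account_command(
    account_id: str, project_id: str, display_name: str, description: str
) -> list[str]:
    """Build (but do not run) the gcloud command that creates the account."""
    return [
        "gcloud", "iam", "service-accounts", "create", account_id,
        f"--project={project_id}",
        f"--display-name={display_name}",
        f"--description={description}",
    ]


if __name__ == "__main__":
    # All values below are placeholders; substitute your own.
    cmd = create_service_account_command(
        account_id="datadog-experiments",
        project_id="datadog-sandbox",
        display_name="Datadog Experiments",
        description="Service account for warehouse-native experiment analysis",
    )
    print(shlex.join(cmd))
    print(service_account_email("datadog-experiments", "datadog-sandbox"))
```

The email returned by `service_account_email` is the value to enter wherever later steps ask for the service account email.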

If you plan to use other Google Cloud observability functionality in Datadog, see Datadog's Google Cloud Platform integration documentation to determine which resources to enable.

Step 1: Prepare the Google Cloud resources

Datadog Experiments uses a BigQuery dataset for caching experiment results and a Cloud Storage bucket for staging experiment records.

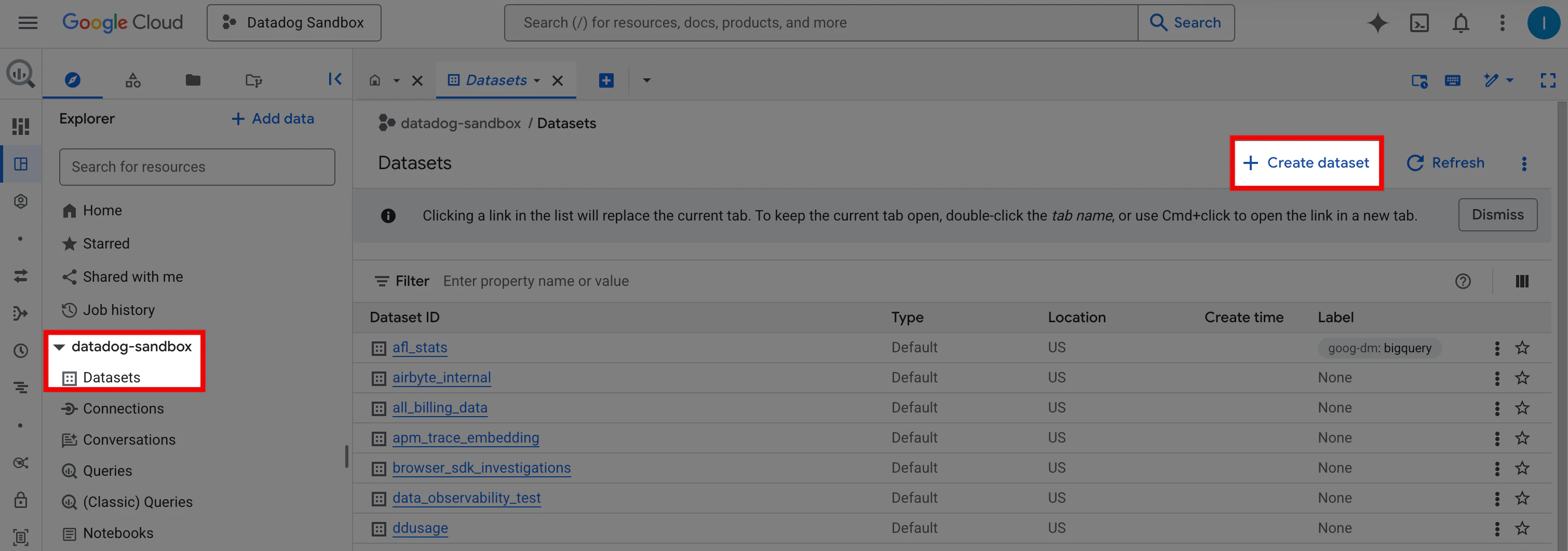

Create a BigQuery dataset

- Open your Google Cloud console.

- In the Search bar, search for BigQuery.

- In the Explorer panel, expand your project (for example, datadog-sandbox).

- Select Datasets, then click Create dataset.

- Enter a Dataset ID (for example, datadog_experiments_output).

- (Optional) Select a Data location from the dropdown, add Tags, and set Advanced options.

- Click Create dataset.

Create a Cloud Storage bucket

Create a Cloud Storage bucket that Datadog Experiments can use to stage experiment exposure records. See Google’s Create a bucket documentation.
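If you prefer the command line to the console, both Step 1 resources can be created with the `bq` and `gcloud storage` tools. The sketch below only assembles the commands so you can review them before running them yourself. The project and dataset IDs are the examples used in this guide; the bucket name `datadog-experiments-staging`, the `US` location, and the function names are assumptions for illustration.

```python
import shlex


def create_dataset_command(project_id: str, dataset_id: str, location: str) -> list[str]:
    """Build the bq command that creates the experiments output dataset."""
    return [
        "bq", f"--location={location}",
        "mk", "--dataset", f"{project_id}:{dataset_id}",
    ]


def create_bucket_command(project_id: str, bucket_name: str, location: str) -> list[str]:
    """Build the gcloud command that creates the staging bucket."""
    return [
        "gcloud", "storage", "buckets", "create", f"gs://{bucket_name}",
        f"--project={project_id}",
        f"--location={location}",
    ]


if __name__ == "__main__":
    # Placeholder values; bucket names must be globally unique in practice.
    print(shlex.join(create_dataset_command(
        "datadog-sandbox", "datadog_experiments_output", "US")))
    print(shlex.join(create_bucket_command(
        "datadog-sandbox", "datadog-experiments-staging", "US")))
```

Keeping the dataset and the bucket in the same location is a choice made in this sketch, not a requirement stated in this guide.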

Step 2: Grant permissions to the Datadog service account

The Datadog Experiments service account requires specific permissions to run warehouse-native experiment analysis.

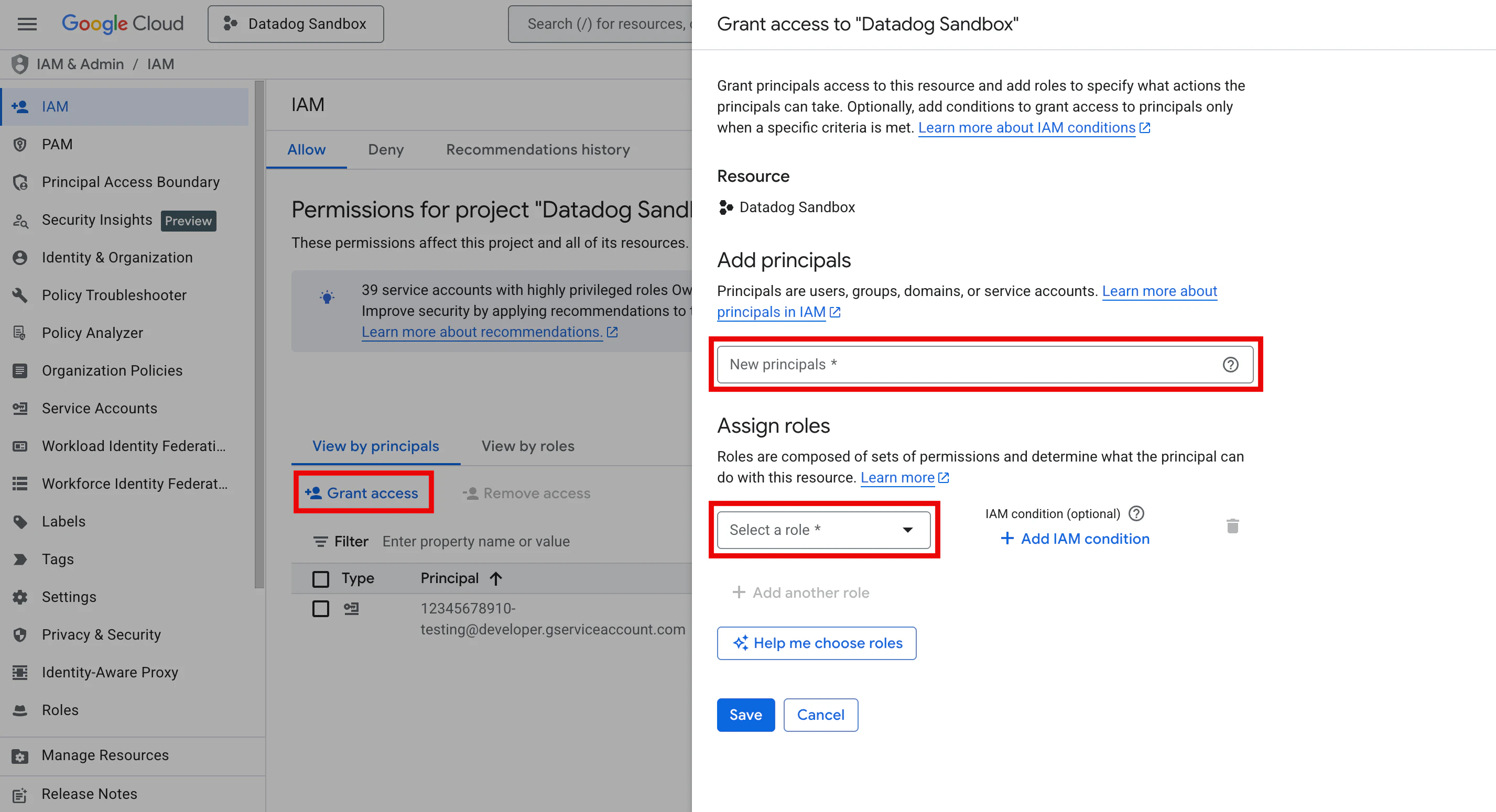

Assign IAM roles at the project level

To assign IAM roles so Datadog Experiments can read and write data, and run jobs in your data warehouse:

- Open your Google Cloud console and navigate to IAM & Admin > IAM.

- Select the Allow tab and click Grant access.

- In the New principals field, enter the service account email.

- Using the Select a role dropdown, add the following roles:

  - BigQuery Job User: Allows the service account to run BigQuery jobs.

  - BigQuery Data Owner: Grants the service account full access to the Datadog Experiments output dataset.

  - Storage Object User: Allows the service account to read and write objects in the storage bucket that Datadog Experiments uses.

  - BigQuery Data Viewer: Allows the service account to read tables used in warehouse-native metrics.

- Click Save.

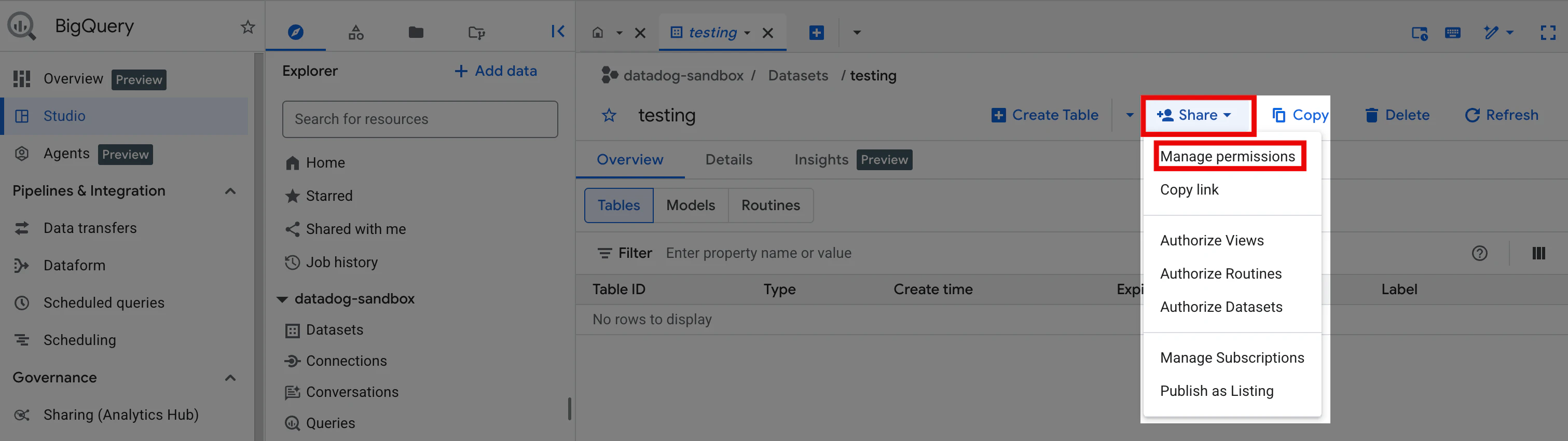
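The four project-level role grants above correspond to one `gcloud projects add-iam-policy-binding` call per role. The following sketch maps each role name from the list to its predefined role ID and builds the commands without executing them. The service account email and the function name are hypothetical; verify the role IDs against your own IAM console before relying on them.

```python
import shlex

# Role display names from the steps above, mapped to predefined IAM role IDs.
PROJECT_ROLES = {
    "BigQuery Job User": "roles/bigquery.jobUser",
    "BigQuery Data Owner": "roles/bigquery.dataOwner",
    "Storage Object User": "roles/storage.objectUser",
    "BigQuery Data Viewer": "roles/bigquery.dataViewer",
}


def grant_role_commands(project_id: str, sa_email: str) -> list[list[str]]:
    """Build one add-iam-policy-binding command per required role."""
    member = f"serviceAccount:{sa_email}"
    return [
        [
            "gcloud", "projects", "add-iam-policy-binding", project_id,
            f"--member={member}",
            f"--role={role_id}",
        ]
        for role_id in PROJECT_ROLES.values()
    ]


if __name__ == "__main__":
    # Placeholder email; use the service account connected to Datadog.
    email = "datadog-experiments@datadog-sandbox.iam.gserviceaccount.com"
    for command in grant_role_commands("datadog-sandbox", email):
        print(shlex.join(command))
```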

Grant read access to specific source tables

Repeat the following steps for each dataset you plan to use for experiment metrics:

- In the Google Cloud console Search bar, search for BigQuery.

- In the Explorer panel, expand your project (for example, datadog-sandbox).

- Click Datasets, then select the dataset containing your source tables.

- Click the Share dropdown and select Manage permissions.

- Click Add principal.

- In the New principals field, enter the service account email.

- Using the Select a role dropdown, select the BigQuery Data Viewer role.

- Click Save.

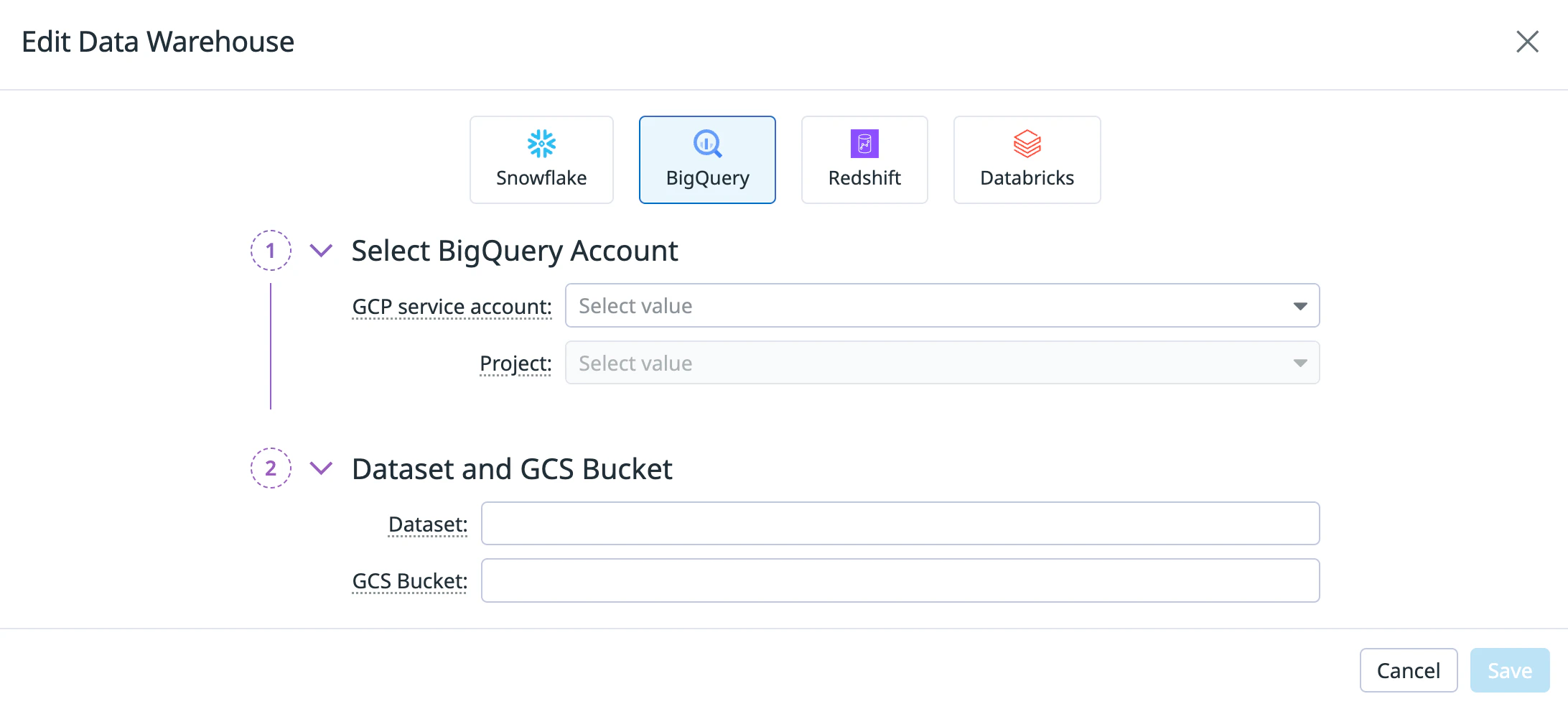
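Because these steps repeat for every source dataset, it can help to script them. One option, shown here as a sketch rather than the documented method, is BigQuery's `GRANT` statement, which assigns a role on a dataset (a "schema" in BigQuery SQL). The code below only generates the statements; the dataset names `analytics` and `billing`, the service account email, and the function name are hypothetical.

```python
import re

# Dataset IDs may contain only letters, digits, and underscores.
_DATASET_ID = re.compile(r"[A-Za-z0-9_]+")


def grant_viewer_statement(project_id: str, dataset_id: str, sa_email: str) -> str:
    """Build a BigQuery GRANT statement giving Data Viewer on one dataset."""
    if not _DATASET_ID.fullmatch(dataset_id):
        raise ValueError(f"invalid dataset ID: {dataset_id!r}")
    return (
        "GRANT `roles/bigquery.dataViewer` "
        f"ON SCHEMA `{project_id}.{dataset_id}` "
        f'TO "serviceAccount:{sa_email}";'
    )


if __name__ == "__main__":
    # Placeholder values; list the datasets that hold your source tables.
    email = "datadog-experiments@datadog-sandbox.iam.gserviceaccount.com"
    for dataset in ["analytics", "billing"]:
        print(grant_viewer_statement("datadog-sandbox", dataset, email))
```

Each generated statement can be run in the BigQuery query editor by a user who is allowed to change that dataset's access policy.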

Step 3: Configure experiment settings

Datadog supports one warehouse connection per organization. Connecting BigQuery replaces any existing warehouse connection (for example, Snowflake).

After you set up your Google Cloud resources and IAM roles, configure the experiment settings in Datadog:

- Open Datadog Product Analytics.

- In the left navigation, hover over Settings and click Experiments.

- Select the Warehouse Connections tab.

- Click Connect a data warehouse. If you already have a warehouse connected, click Edit instead.

- Select the BigQuery tile.

- Under Select BigQuery Account, enter:

  - GCP service account: The service account you are using for Datadog Experiments.

  - Project: Your Google Cloud project.

- Under Dataset and GCS Bucket, enter the BigQuery dataset and the Cloud Storage bucket you created in Step 1.

- Click Save.

After you save your warehouse connection, create experiment metrics using your BigQuery data.