Connect BigQuery for Warehouse-Native Experiment Analysis

Overview

Warehouse-native experiment analysis lets you run statistical computations directly in your data warehouse.

To set this up for BigQuery, connect a BigQuery service account to Datadog and configure your experiment settings. This guide covers:

- Preparing Google Cloud resources

- Granting permissions to the Datadog service account

- Configuring experiment settings in Datadog

Prerequisites

Datadog connects to BigQuery through a Google Cloud service account. If you already have a service account connected to Datadog, skip to Step 1. Otherwise, create one by following the steps below.

Create a Google Cloud service account

- Open your Google Cloud console.

- Navigate to IAM & Admin > Service Accounts.

- Click Create service account.

- Enter the following:

  - Service account name.

  - Service account ID.

  - Service account description.

- Click Create and continue.

- Note: The Permissions and Principals with access settings are optional here. These are configured in Step 2.

- Click Done.

After you create the service account, continue to Step 1 to set up the Google Cloud resources.

If you plan to use other Google Cloud observability functionality in Datadog, see Datadog's Google Cloud Platform integration documentation to determine which resources to enable.
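
The service account email is needed again in Steps 2 and 3. As a minimal sketch, assuming a standard project ID without a domain prefix, the email can be derived from the service account ID and the project ID. The helper name and the sample values below are hypothetical; the ID pattern follows the rule Google documents for the IAM `accountId` field (6 to 30 characters, lowercase letters, digits, and hyphens, starting with a letter).

```python
import re

# Pattern for the IAM `accountId` field: 6-30 characters, lowercase letters,
# digits, and hyphens, starting with a letter and not ending with a hyphen.
_ACCOUNT_ID = re.compile(r"[a-z][-a-z0-9]{4,28}[a-z0-9]")


def service_account_email(account_id: str, project_id: str) -> str:
    """Return the email address Google Cloud assigns to a service account."""
    if not _ACCOUNT_ID.fullmatch(account_id):
        raise ValueError(f"invalid service account ID: {account_id!r}")
    return f"{account_id}@{project_id}.iam.gserviceaccount.com"


# Hypothetical values, for illustration only.
print(service_account_email("datadog-experiments", "datadog-sandbox"))
```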

Step 1: Prepare the Google Cloud resources

Datadog Experiments uses a BigQuery dataset for caching experiment results and a Cloud Storage bucket for staging experiment records.

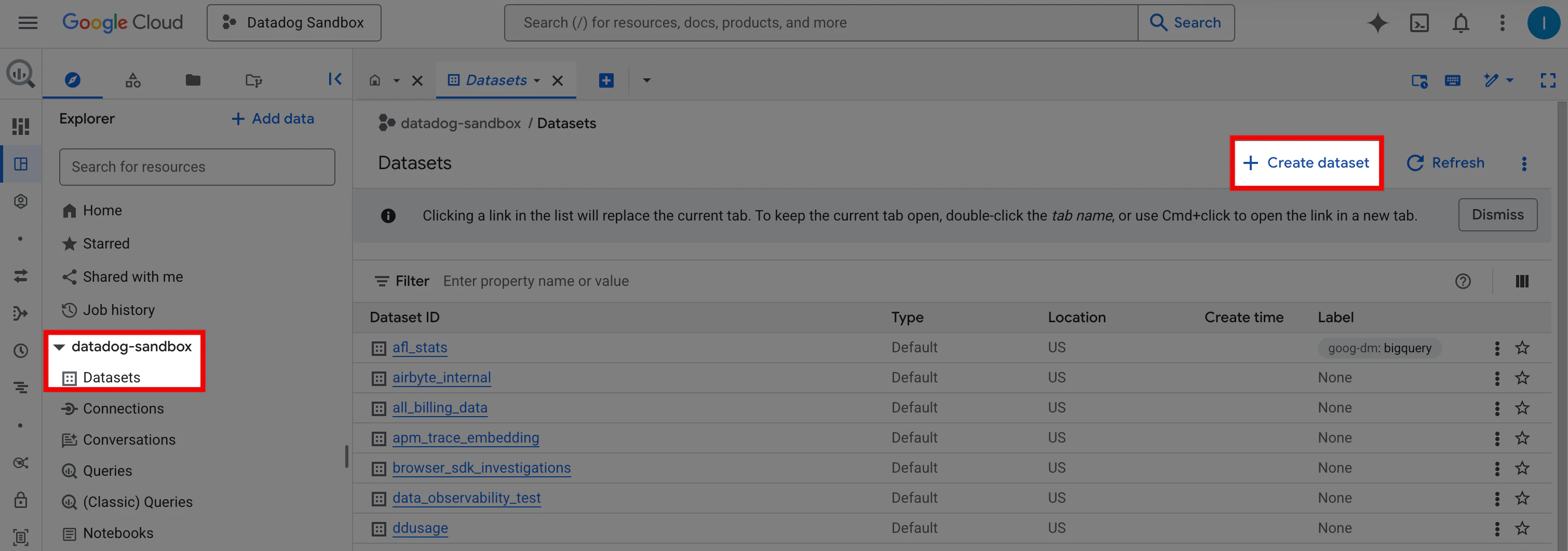

Create a BigQuery dataset

- Open your Google Cloud console.

- In the Search bar, search for BigQuery.

- In the Explorer panel, expand your project (for example, datadog-sandbox).

- Select Datasets, then click Create dataset.

- Enter a Dataset ID (for example, datadog_experiments_output).

- (Optional) Select a Data location from the dropdown, add Tags, and set Advanced options.

- Click Create dataset.
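
If you prefer to script this step, the console actions above correspond roughly to one call to the BigQuery `datasets.insert` REST method (a `POST` to `https://bigquery.googleapis.com/bigquery/v2/projects/{projectId}/datasets`). The sketch below only builds the request body and does not send it; the function name is hypothetical, and the project, dataset, and location values are the example names from this guide plus an assumed `US` location.

```python
import json
from typing import Optional


def dataset_insert_body(project_id: str, dataset_id: str,
                        location: Optional[str] = None) -> dict:
    """Build a request body for the BigQuery `datasets.insert` REST method."""
    body = {
        "datasetReference": {"projectId": project_id, "datasetId": dataset_id}
    }
    # The data location is optional, matching the optional console field.
    if location is not None:
        body["location"] = location
    return body


# Example values from this guide; "US" is an assumed location.
body = dataset_insert_body("datadog-sandbox", "datadog_experiments_output", "US")
print(json.dumps(body, indent=2))
```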

Create a Cloud Storage bucket

Create a Cloud Storage bucket that Datadog Experiments can use to stage experiment exposure records. See Google’s Create a bucket documentation.
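
Before creating the bucket, it can help to check the name you plan to use. The following is a simplified sketch of Google's bucket naming rules, not a complete validator: it covers the length, character set, and reserved-prefix rules, and ignores the extended rules for names that contain dots. The function name and sample names are hypothetical.

```python
import re

# Simplified rule: 3-63 characters; lowercase letters, digits, dashes,
# underscores, and dots; must start and end with a letter or digit.
_BUCKET_NAME = re.compile(r"[a-z0-9][a-z0-9._-]{1,61}[a-z0-9]")


def looks_like_valid_bucket_name(name: str) -> bool:
    """Return True if `name` passes a simplified subset of the naming rules."""
    if not _BUCKET_NAME.fullmatch(name):
        return False
    # Names cannot begin with "goog" or contain "google".
    return not name.startswith("goog") and "google" not in name


# Hypothetical bucket names, for illustration only.
for candidate in ("datadog-experiments-staging", "Datadog_Bucket", "ab"):
    print(candidate, looks_like_valid_bucket_name(candidate))
```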

Step 2: Grant permissions to the Datadog service account

The Datadog Experiments service account requires specific permissions to run warehouse-native experiment analysis.

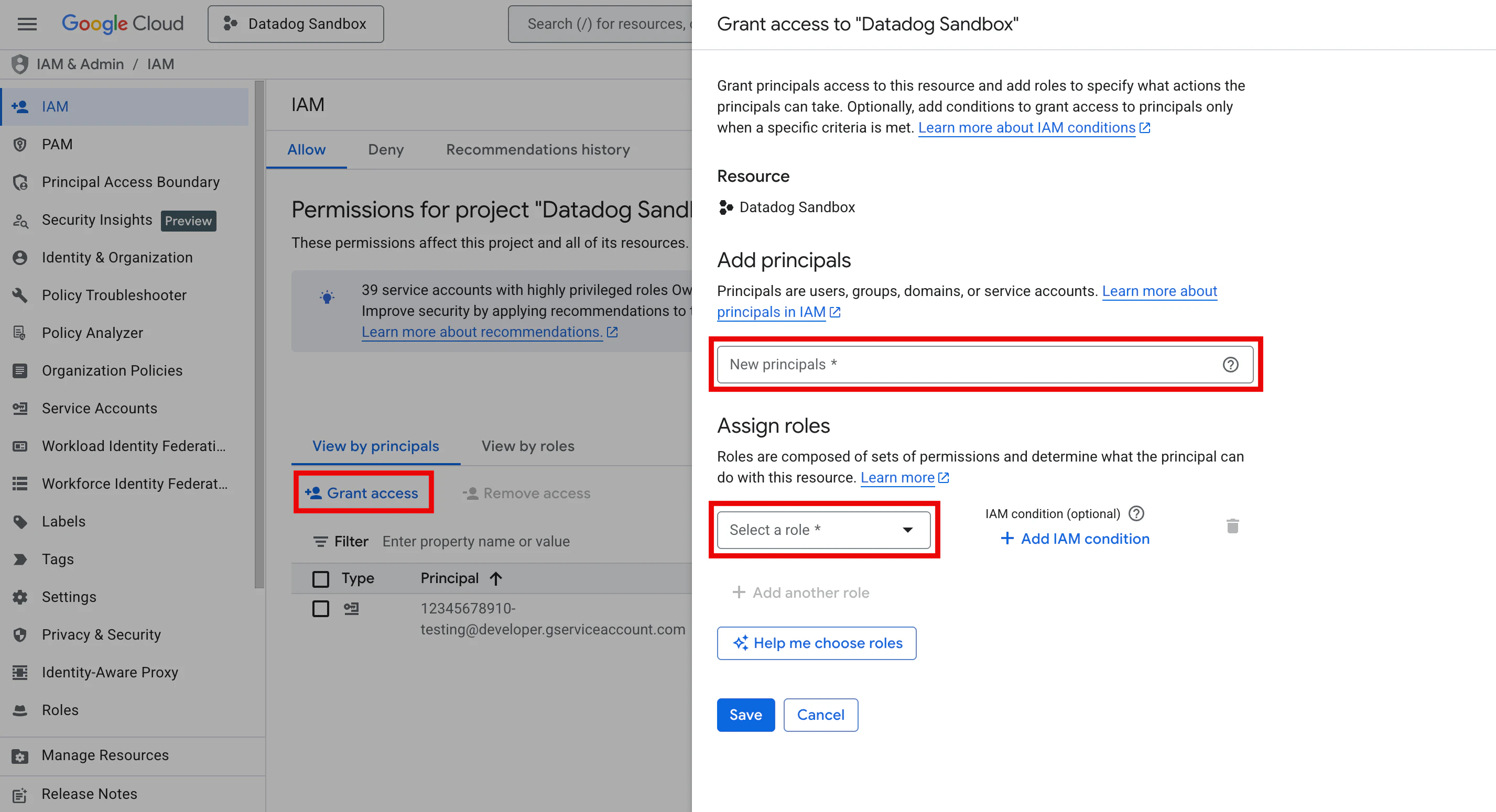

Assign IAM roles at the project level

To assign IAM roles so Datadog Experiments can read and write data, and run jobs in your data warehouse:

- Open your Google Cloud console and navigate to IAM & Admin > IAM.

- Select the Allow tab and click Grant access.

- In the New principals field, enter the service account email.

- Using the Select a role dropdown, add the following roles:

  - BigQuery Job User: Allows the service account to run BigQuery jobs.

  - BigQuery Data Owner: Grants the service account full access to the Datadog Experiments output dataset.

  - Storage Object User: Allows the service account to read and write objects in the storage bucket that Datadog Experiments uses.

  - BigQuery Data Viewer: Allows the service account to read tables used in warehouse-native metrics.

- Click Save.

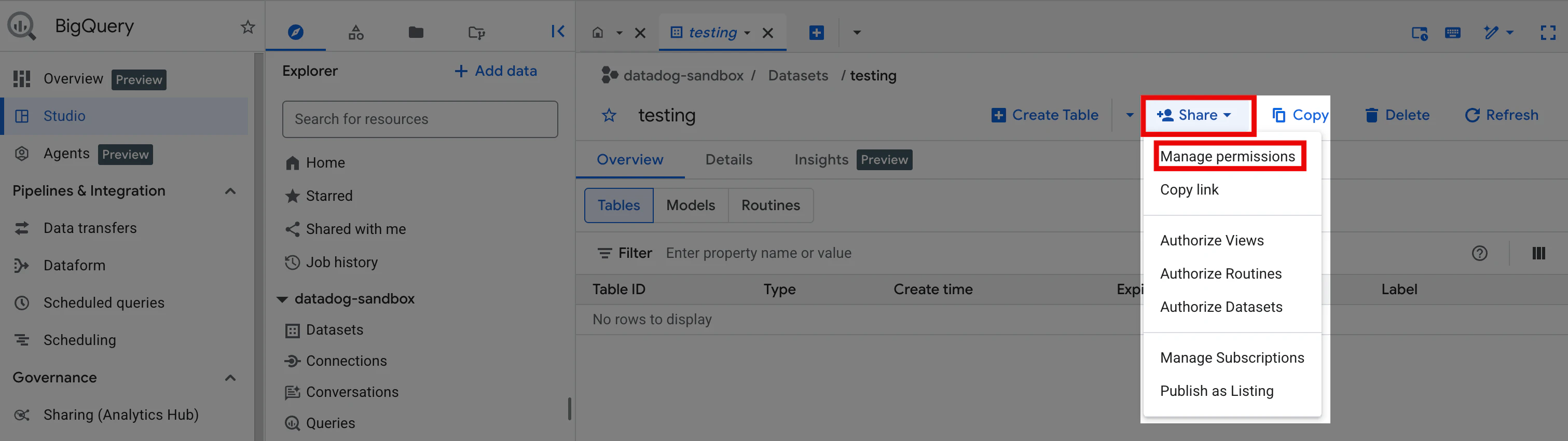
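
For reference when auditing or scripting these grants, the role names shown in the console map to the IAM role IDs below. This sketch builds the bindings in the shape an IAM allow policy uses (a role plus a list of members); it does not call any Google Cloud API, and the function name and service account email are hypothetical.

```python
# Console display names from the list above, mapped to their IAM role IDs.
ROLE_IDS = {
    "BigQuery Job User": "roles/bigquery.jobUser",
    "BigQuery Data Owner": "roles/bigquery.dataOwner",
    "Storage Object User": "roles/storage.objectUser",
    "BigQuery Data Viewer": "roles/bigquery.dataViewer",
}


def project_bindings(service_account_email: str) -> list:
    """Return one allow-policy binding per role for the service account."""
    member = f"serviceAccount:{service_account_email}"
    return [{"role": role_id, "members": [member]} for role_id in ROLE_IDS.values()]


# Hypothetical service account email, for illustration only.
email = "datadog-experiments@datadog-sandbox.iam.gserviceaccount.com"
for binding in project_bindings(email):
    print(binding["role"], binding["members"])
```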

Grant read access to specific source tables

Repeat the following steps for each dataset you plan to use for experiment metrics:

- In the Google Cloud console Search bar, search for BigQuery.

- In the Explorer panel, expand your project (for example, datadog-sandbox).

- Click Datasets, then select the dataset containing your source tables.

- Click the Share dropdown and select Manage permissions.

- Click Add principal.

- In the New principals field, enter the service account email.

- Using the Select a role dropdown, select the BigQuery Data Viewer role.

- Click Save.

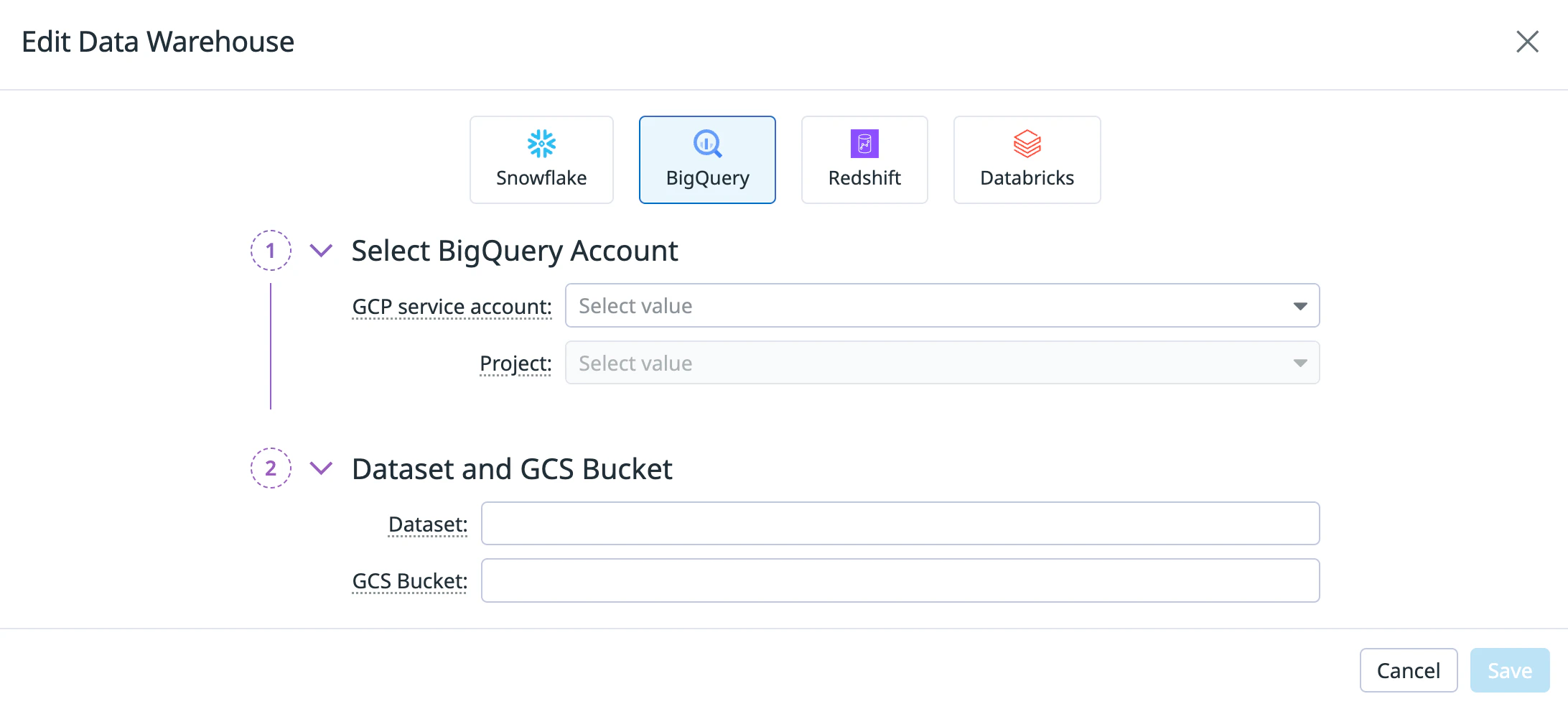
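
In the BigQuery dataset resource, dataset-level grants appear as entries in the `access` list, where the basic role `READER` corresponds to BigQuery Data Viewer. As a rough sketch of what the steps above add for each dataset, the hypothetical helper below appends such an entry without duplicating an existing one. It only manipulates a local list and assumes you fetch and update the dataset separately.

```python
def grant_reader(access: list, service_account_email: str) -> list:
    """Return a copy of a dataset `access` list that includes a READER entry."""
    entry = {"role": "READER", "userByEmail": service_account_email}
    if entry in access:
        # Already granted: return an unchanged copy.
        return list(access)
    return [*access, entry]


# Hypothetical existing access list and service account email.
existing = [{"role": "OWNER", "specialGroup": "projectOwners"}]
email = "datadog-experiments@datadog-sandbox.iam.gserviceaccount.com"
updated = grant_reader(existing, email)
print(updated)
```

Because the helper is idempotent, running it again for a dataset that already has the entry leaves the list unchanged.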

Step 3: Configure experiment settings

Datadog supports one warehouse connection per organization. Connecting BigQuery replaces any existing warehouse connection (for example, Snowflake).

After you set up your Google Cloud resources and IAM roles, configure the experiment settings in Datadog:

- Open Datadog Product Analytics.

- In the left navigation, hover over Settings and click Experiments.

- Select the Warehouse Connections tab.

- Click Connect a data warehouse. If you already have a warehouse connected, click Edit instead.

- Select the BigQuery tile.

- Under Select BigQuery Account, enter:

  - GCP service account: The service account you are using for Datadog Experiments.

  - Project: Your Google Cloud project.

- Under Dataset and GCS Bucket, enter the BigQuery dataset and Cloud Storage bucket you created in Step 1.

- Click Save.

After you save your warehouse connection, create experiment metrics using your BigQuery data.
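
Before opening the settings page, you may find it useful to collect the four values the form asks for in one place. The checklist below is purely illustrative: the class, its field names, and the sample values are hypothetical and are not part of any Datadog API.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class WarehouseConnection:
    """Hypothetical checklist of the values entered in Step 3."""
    service_account: str
    project: str
    dataset: str
    bucket: str

    def missing(self) -> list:
        """Return the names of fields that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Hypothetical values; the bucket is left empty to show the check.
conn = WarehouseConnection(
    service_account="datadog-experiments@datadog-sandbox.iam.gserviceaccount.com",
    project="datadog-sandbox",
    dataset="datadog_experiments_output",
    bucket="",
)
print(conn.missing())
```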
