Kafka Destination

Overview

Use the Observability Pipelines Kafka destination to send logs to Kafka topics.

When to use this destination

Common scenarios in which you might use this destination:

- To route logs to the following destinations:

  - ClickHouse: An open source, column-oriented database management system used to analyze large volumes of logs.

  - Snowflake: A data warehouse used for storage and querying.

    - The Snowflake API integration uses Kafka as a method to ingest logs into the platform.

  - Databricks: A data lake for analytics and storage.

  - Azure Event Hub: An ingestion and processing service in the Microsoft and Azure ecosystem.

- To route data to Kafka and use the Kafka Connect ecosystem.

- To process and normalize your data with Observability Pipelines before routing it to Apache Spark with Kafka to analyze data and run machine learning workloads.

Instalación

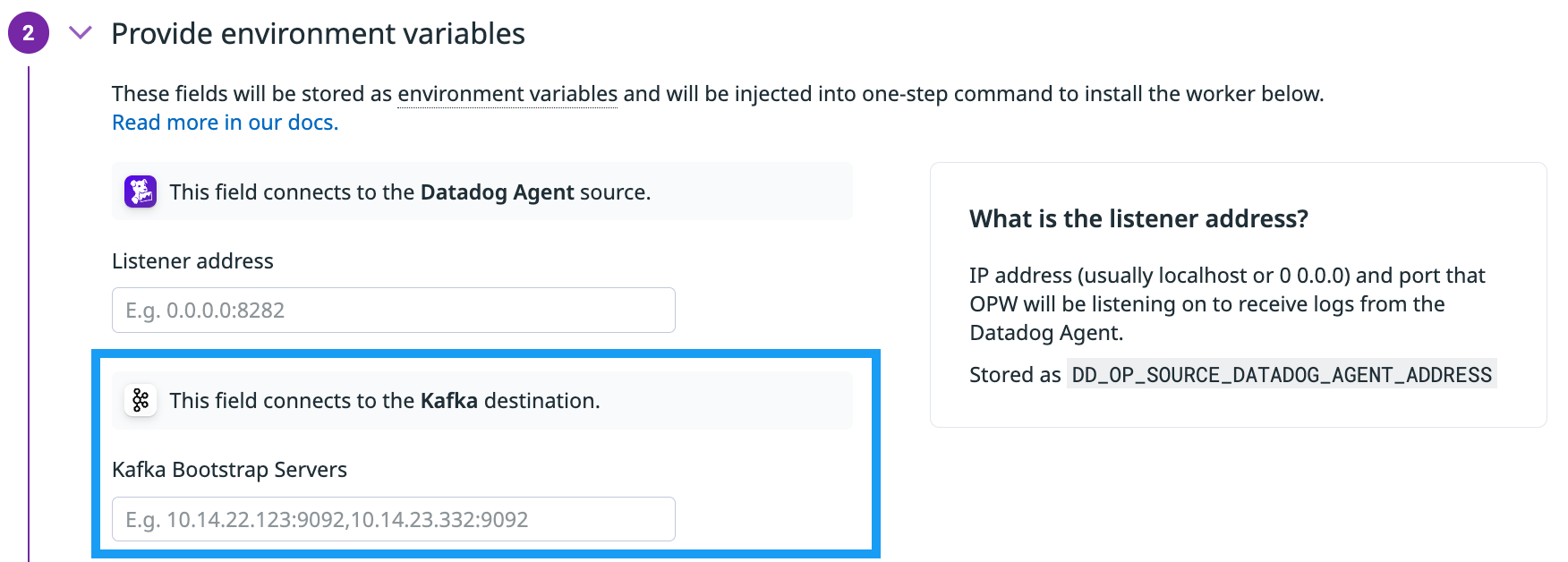

Configura el destino Kafka y sus variables de entorno cuando configures un pipeline. La siguiente información se configura en la interfaz de usuario del pipeline.

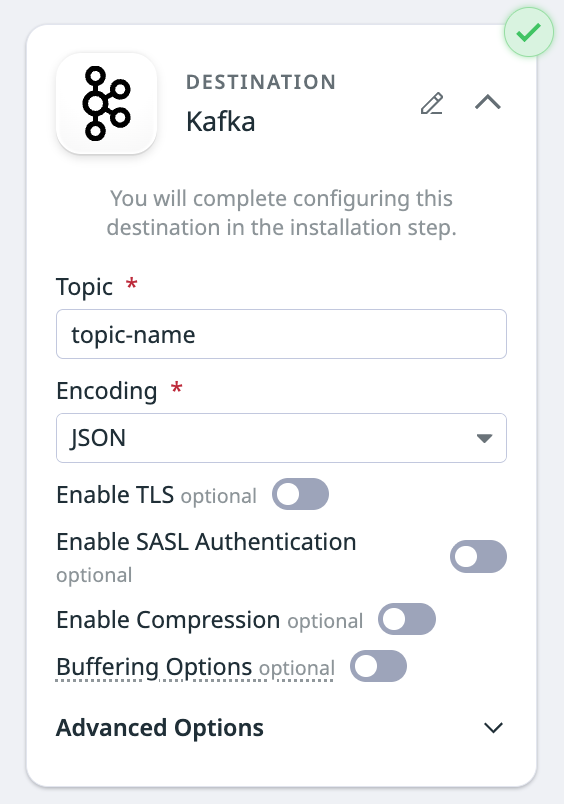

Set up the destination

- Enter the name of the topic you want to send logs to.

- In the Encoding dropdown menu, select either JSON or Raw message as the output format.

Optional settings

Enable TLS

Toggle the switch to Enable TLS. The following certificate and key files are required.

Note: All file paths are relative to the configuration data directory, which is /var/lib/observability-pipelines-worker/config/ by default. See Advanced Worker Configurations for more information. The file must be owned by the observability-pipelines-worker group and the observability-pipelines-worker user, or at least be readable by the group or the user.

- Server Certificate Path: The path to the certificate file that has been signed by your Certificate Authority (CA) root file, in DER or PEM (X.509) format.

- CA Certificate Path: The path to the certificate file that is your Certificate Authority (CA) root file, in DER or PEM (X.509) format.

- Private Key Path: The path to the .key private key file that belongs to your server certificate path, in DER or PEM (PKCS#8) format.
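The path rule in the note can be checked before deploying a pipeline. The following is a minimal sketch, assuming the paths entered in the UI are relative and that the check runs as the user that runs the Worker. The function names and the dictionary labels are hypothetical and are not part of Observability Pipelines.

```python
import os
from pathlib import Path

# Default configuration data directory named in the note above.
DEFAULT_CONFIG_DIR = Path("/var/lib/observability-pipelines-worker/config")


def resolve_tls_path(relative_path: str, config_dir: Path = DEFAULT_CONFIG_DIR) -> Path:
    """Join a relative path, as entered in the UI, onto the configuration data directory."""
    return config_dir / relative_path


def check_tls_files(paths: dict, config_dir: Path = DEFAULT_CONFIG_DIR) -> dict:
    """Return a problem description for each file that is missing or unreadable.

    `paths` maps a hypothetical label (for example "server_cert") to a relative path.
    """
    problems = {}
    for label, relative_path in paths.items():
        full_path = resolve_tls_path(relative_path, config_dir)
        if not full_path.is_file():
            problems[label] = f"{full_path} does not exist"
        elif not os.access(full_path, os.R_OK):
            # Readability is tested for the user running this script.
            problems[label] = f"{full_path} is not readable by the current user"
    return problems
```

An empty result only means the files exist and are readable by the current user. It does not validate the certificate contents or their format.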

Enable SASL authentication

- Toggle the switch to enable SASL Authentication.

- Select the mechanism (PLAIN, SCRAM-SHA-256, or SCRAM-SHA-512) in the dropdown menu.

Enable compression

- Toggle the switch to Enable Compression.

- In the Compression Algorithm dropdown menu, select a compression algorithm (gzip, zstd, lz4, or snappy).

- (Optional) Select a compression level in the dropdown menu. If the level is not specified, the algorithm's default level is used.

Buffering

Toggle the switch to enable Buffering Options. Enable a configurable buffer on your destination to ensure intermittent latency or an outage at the destination doesn’t create immediate backpressure, and allow events to continue to be ingested from your source. Disk buffers can also increase pipeline durability by writing data to disk, ensuring buffered data persists through a Worker restart. See Destination buffers for more information.

- If left unconfigured, your destination uses a memory buffer with a capacity of 500 events.

- To configure a buffer on your destination:

  - Select the buffer type you want to set (Memory or Disk).

  - Enter the buffer size and select the unit.

    - Maximum memory buffer size is 128 GB.

    - Maximum disk buffer size is 500 GB.

  - In the Behavior on full buffer dropdown menu, select whether you want to block events or drop new events when the buffer is full.
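The difference between the two full-buffer behaviors can be illustrated with a small in-memory model. This is a sketch for intuition only, not the Worker's implementation. The class name, the method names, and the "block" and "drop_newest" labels are hypothetical, and the default capacity of 500 events is taken from the text above.

```python
from collections import deque


class BoundedBuffer:
    """In-memory event buffer with a fixed capacity (hypothetical, for illustration)."""

    def __init__(self, capacity: int = 500, when_full: str = "block"):
        if when_full not in ("block", "drop_newest"):
            raise ValueError("when_full must be 'block' or 'drop_newest'")
        self.capacity = capacity
        self.when_full = when_full
        self.events = deque()
        self.dropped = 0

    def offer(self, event) -> bool:
        """Try to enqueue an event; return True if it was accepted."""
        if len(self.events) < self.capacity:
            self.events.append(event)
            return True
        if self.when_full == "drop_newest":
            self.dropped += 1  # the incoming event is discarded
        # In "block" mode nothing is dropped: the caller has to retry later,
        # which is how backpressure reaches the source.
        return False

    def take(self):
        """Remove and return the oldest buffered event, or None if the buffer is empty."""
        return self.events.popleft() if self.events else None
```

In this model, blocking preserves every event at the cost of slowing the source, while dropping keeps the source moving at the cost of losing the newest events.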

Advanced options

Click Advanced if you want to set any of the following fields:

- Message Key Field: Specify which log field contains the message key for partitioning, grouping, and ordering.

- Headers Key: Specify which log field contains your Kafka headers. If left blank, no headers are written.

- Message Timeout (ms): Local message timeout, in milliseconds. Default is 300,000 ms.

- Socket Timeout (ms): Default timeout, in milliseconds, for network requests. Default is 60,000 ms.

- Rate Limit Events: The maximum number of requests the Kafka client can send within the rate limit time window. Default is no rate limit.

- Rate Limit Time Window (seconds): The time window used for the rate limit option.

  - This setting has no effect if Rate Limit Events is not set.

  - Default is 1 second if Rate Limit Events is set, but Rate Limit Time Window is not set.

- To add additional librdkafka options, click Add Option and select an option in the dropdown menu.

  - Enter a value for that option.

  - Check your values against the librdkafka documentation to make sure they have the correct type and are within the set range.

  - Click Add Option to add another librdkafka option.
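The interaction between the two rate limit settings can be sketched as follows. The source does not describe the algorithm the Kafka client uses, so the fixed-window approach here is an assumption, and the class and parameter names are hypothetical. Only the defaults (no limit unless the event limit is set, and a 1 second window when only the event limit is set) come from the text above.

```python
import time


class WindowRateLimiter:
    """Fixed-window limiter (an assumed algorithm, for illustration only)."""

    def __init__(self, max_events=None, window_secs=None, clock=time.monotonic):
        self.max_events = max_events
        # The window falls back to 1 second when it is not set explicitly.
        self.window_secs = 1.0 if window_secs is None else float(window_secs)
        self.clock = clock
        self.window_start = clock()
        self.sent_in_window = 0

    def allow(self) -> bool:
        """Return True if one more request may be sent right now."""
        if self.max_events is None:
            return True  # no event limit set, so the window has no effect
        now = self.clock()
        if now - self.window_start >= self.window_secs:
            # A new window begins: reset the counter.
            self.window_start = now
            self.sent_in_window = 0
        if self.sent_in_window < self.max_events:
            self.sent_in_window += 1
            return True
        return False
```

For example, `WindowRateLimiter(max_events=100)` allows up to 100 requests per second, and `WindowRateLimiter(window_secs=5)` never limits because no event limit is set.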

Set secrets

These are the defaults used for secret identifiers and environment variables.

Note: If you enter secret identifiers and then choose to use environment variables, the environment variable is the identifier entered and prepended with DD_OP. For example, if you entered PASSWORD_1 for a password identifier, the environment variable for that password is DD_OP_PASSWORD_1.
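The naming rule in the note can be expressed in a few lines. This is a minimal sketch in which the function names are hypothetical. The `DD_OP_` prefix, including the underscore, is taken from the `PASSWORD_1` example above.

```python
import os

ENV_PREFIX = "DD_OP_"


def env_var_for_identifier(identifier: str) -> str:
    """Return the environment variable name that corresponds to a secret identifier."""
    return f"{ENV_PREFIX}{identifier}"


def read_secret_from_env(identifier: str, environ=os.environ):
    """Look up a secret's value in the environment; returns None if the variable is unset."""
    return environ.get(env_var_for_identifier(identifier))
```

Passing a plain dictionary as `environ` makes the lookup easy to exercise without touching the real environment.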

- Kafka bootstrap servers identifier:

  - References the bootstrap server that the client uses to connect to the Kafka cluster and discover all the other hosts in the cluster.

  - In your secrets manager, the host and port must be entered in the format host:port, such as 10.14.22.123:9092. If there is more than one server, use commas to separate them.

  - The default identifier is DESTINATION_KAFKA_BOOTSTRAP_SERVERS.

- Kafka TLS passphrase identifier (when TLS is enabled):

  - The default identifier is DESTINATION_KAFKA_KEY_PASS.

- SASL authentication (when enabled):

  - Kafka SASL username identifier:

    - The default identifier is DESTINATION_KAFKA_SASL_USERNAME.

  - Kafka SASL password identifier:

    - The default identifier is DESTINATION_KAFKA_SASL_PASSWORD.

Kafka bootstrap servers

- The host and port of the Kafka bootstrap servers.

- This is the bootstrap server that the client uses to connect to the Kafka cluster and discover all the other hosts in the cluster. The host and port must be entered in the format of host:port, such as 10.14.22.123:9092. If there is more than one server, use commas to separate them.

- The default environment variable is DD_OP_DESTINATION_KAFKA_BOOTSTRAP_SERVERS.
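A value in the comma-separated host:port format can be sanity-checked before it is stored as a secret or environment variable. The sketch below is a hypothetical helper, not part of the Worker. It assumes hostnames or IPv4 addresses and does not handle bracketed IPv6 literals.

```python
def parse_bootstrap_servers(value: str) -> list:
    """Split a comma-separated list of host:port entries into (host, port) tuples.

    Raises ValueError if any entry is not in host:port form.
    """
    servers = []
    for entry in value.split(","):
        entry = entry.strip()
        # Split on the last colon so the port is always the final component.
        host, sep, port_text = entry.rpartition(":")
        if not sep or not host or not port_text.isdigit():
            raise ValueError(f"expected host:port, got {entry!r}")
        port = int(port_text)
        if not 1 <= port <= 65535:
            raise ValueError(f"port out of range in {entry!r}")
        servers.append((host, port))
    return servers
```

For instance, `parse_bootstrap_servers("10.14.22.123:9092")` returns `[("10.14.22.123", 9092)]`.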

TLS (when enabled)

- If TLS is enabled, the Kafka TLS passphrase is needed.

- The default environment variable is DD_OP_DESTINATION_KAFKA_KEY_PASS.

SASL (when enabled)

- Kafka SASL username

  - The default environment variable is DD_OP_DESTINATION_KAFKA_SASL_USERNAME.

- Kafka SASL password

  - The default environment variable is DD_OP_DESTINATION_KAFKA_SASL_PASSWORD.

librdkafka options

These are the available librdkafka options:

- client.id

- queue.buffering.max_messages

- transactional.id

- enable.idempotence

- acks

See the librdkafka documentation for more information and to make sure your values have the correct type and are within range.
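If you keep the extra options in a script or configuration file before entering them in the UI, a quick name check can catch options that are not in the list above. This is a hypothetical helper that checks names only. Types and ranges still need to be verified against the librdkafka documentation, because this page does not specify them.

```python
# Option names exactly as listed on this page.
AVAILABLE_OPTIONS = frozenset({
    "client.id",
    "queue.buffering.max_messages",
    "transactional.id",
    "enable.idempotence",
    "acks",
})


def unsupported_options(options: dict) -> list:
    """Return the option names that are not in the list of available options, sorted."""
    return sorted(name for name in options if name not in AVAILABLE_OPTIONS)
```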

Metrics

See Observability Pipelines Metrics for a full list of available health metrics.

Worker health metrics

Component metrics

Monitor the health of your destination with the following key metrics:

- pipelines.component_sent_events_total: Events successfully delivered.

- pipelines.component_discarded_events_total: Events dropped.

- pipelines.component_errors_total: Errors in the destination component.

- pipelines.component_sent_events_bytes_total: Total event bytes sent.

- pipelines.utilization: Worker resource usage.

Buffer metrics (when enabled)

These metrics are specific to destination buffers, located upstream of a destination. Each destination emits its own respective buffer metrics.

- Use the component_id tag to filter or group by individual components.

- Use the component_type tag to filter or group by the destination type, such as datadog_logs for the Datadog Logs destination.

pipelines.buffer_size_events

- Description: Number of events in a destination’s buffer.

- Metric type: gauge

pipelines.buffer_size_bytes

- Description: Number of bytes in a destination’s buffer.

- Metric type: gauge

pipelines.buffer_received_events_total

- Description: Events received by a destination’s buffer. Note: This metric represents the count per second and not the cumulative total, even though total is in the metric name.

- Metric type: counter

pipelines.buffer_received_bytes_total

- Description: Bytes received by a destination’s buffer. Note: This metric represents the count per second and not the cumulative total, even though total is in the metric name.

- Metric type: counter

pipelines.buffer_sent_events_total

- Description: Events sent downstream by a destination’s buffer. Note: This metric represents the count per second and not the cumulative total, even though total is in the metric name.

- Metric type: counter

pipelines.buffer_sent_bytes_total

- Description: Bytes sent downstream by a destination’s buffer. Note: This metric represents the count per second and not the cumulative total, even though total is in the metric name.

- Metric type: counter

pipelines.buffer_discarded_events_total

- Description: Events discarded by the buffer. Note: This metric represents the count per second and not the cumulative total, even though total is in the metric name.

- Metric type: counter

- Additional tags: intentional:true means an incoming event was dropped because the buffer was configured to drop the newest logs when it’s full. intentional:false means the event was dropped due to an error.

pipelines.buffer_discarded_bytes_total

- Description: Bytes discarded by the buffer. Note: This metric represents the count per second and not the cumulative total, even though total is in the metric name.

- Metric type: counter

- Additional tags: intentional:true means an incoming event was dropped because the buffer was configured to drop the newest logs when it’s full. intentional:false means the event was dropped due to an error.

Deprecated buffer metrics

These metrics are still emitted by the Observability Pipelines Worker for backwards compatibility. Datadog recommends using the replacements when possible.

pipelines.buffer_events

- Description: Number of events in a destination’s buffer. Use pipelines.buffer_size_events instead.

- Metric type: gauge

pipelines.buffer_byte_size

- Description: Number of bytes in a destination’s buffer. Use pipelines.buffer_size_bytes instead.

- Metric type: gauge

Event batching

A batch of events is flushed when one of these parameters is met. See event batching for more information.

| Max events | Max size (MB) | Timeout (seconds) |
|---|---|---|
| 10,000 | 1 | 1 |
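The "whichever limit is reached first" rule in the table can be sketched as a small batcher. This is an illustration of the flush condition only, not the Worker's code. The class and parameter names are hypothetical, 1 MB is assumed to mean 1,000,000 bytes, and the timeout is assumed to start when the first event enters an empty batch.

```python
import time

MAX_EVENTS = 10_000
MAX_BYTES = 1_000_000  # 1 MB, assuming decimal megabytes
TIMEOUT_SECS = 1.0


class Batcher:
    """Collects events and flushes when any one of three limits is reached."""

    def __init__(self, flush, clock=time.monotonic, max_events=MAX_EVENTS,
                 max_bytes=MAX_BYTES, timeout_secs=TIMEOUT_SECS):
        self.flush_fn = flush
        self.clock = clock
        self.max_events = max_events
        self.max_bytes = max_bytes
        self.timeout_secs = timeout_secs
        self.batch = []
        self.batch_bytes = 0
        self.started_at = None

    def add(self, event: bytes) -> None:
        """Append an encoded event, then flush if a limit has been reached."""
        if not self.batch:
            self.started_at = self.clock()  # timeout counts from the first event
        self.batch.append(event)
        self.batch_bytes += len(event)
        self.maybe_flush()

    def maybe_flush(self) -> None:
        """Flush if the event count, byte size, or age limit has been reached."""
        if not self.batch:
            return
        timed_out = self.clock() - self.started_at >= self.timeout_secs
        if (len(self.batch) >= self.max_events
                or self.batch_bytes >= self.max_bytes
                or timed_out):
            self.flush_fn(self.batch)
            self.batch = []
            self.batch_bytes = 0
            self.started_at = None
```

In this sketch the timeout is only evaluated when `add` or `maybe_flush` is called, so a real caller would also invoke `maybe_flush` on a timer.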