APM Monitor

Overview

APM metric monitors work like regular metric monitors, but with controls tailored specifically to APM. Use these monitors to receive alerts at the service level on hits, errors, and a variety of latency measures.

Analytics monitors allow you to visualize APM data over time and set up alerts based on Indexed Spans. For example, use an Analytics monitor to receive alerts on a spike in slow requests.

Automatic APM monitors

Automatic Monitors for APM are available to new organizations and activate as soon as the Datadog Agent is installed and spans begin flowing into Datadog. This provides immediate alerting coverage on your key services with minimal configuration, and helps you to maintain coverage without gaps.

Monitors are automatically created for service entry points, which are identified by operations tagged with span.kind:server or span.kind:consumer and represent where requests enter your service.

Automatic monitors for APM include:

Error rate threshold monitors

Error rate threshold monitors are created per service entry point using APM trace metrics. These alert you when error behavior spikes and help ensure your most critical endpoints are covered by default. The default error rate is 10%, but can be configured for your environment.
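As a rough illustration of what an error rate threshold on trace metrics can look like, the sketch below builds a metric monitor query string that divides error counts by hit counts for one entry point. The function name, the `last_10m` window, and the example span, service, and environment names are all assumptions made for illustration. This page does not show the query that the automatic monitors actually use, so treat this as one plausible form rather than the generated definition.

```python
def error_rate_query(span_name: str, service: str, env: str,
                     threshold: float = 0.10, window: str = "last_10m") -> str:
    """Build an error-rate query from trace metrics (illustrative sketch only).

    Assumes the trace metrics follow the trace.<SPAN_NAME>.errors and
    trace.<SPAN_NAME>.hits naming pattern. The window and scope are hypothetical.
    """
    scope = f"env:{env},service:{service}"
    errors = f"sum:trace.{span_name}.errors{{{scope}}}.as_count()"
    hits = f"sum:trace.{span_name}.hits{{{scope}}}.as_count()"
    # Error rate = errors / hits, compared against the threshold (10% by default).
    return f"sum({window}):{errors} / {hits} > {threshold}"


query = error_rate_query("http.request", service="web-store", env="prod")
print(query)
```

Changing `threshold` mirrors adjusting the default 10% error rate for your environment.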

Watchdog anomaly monitors

Watchdog anomaly monitors automatically detect unusual patterns in latency, errors, and request volume (hits) for all services without requiring you to manually configure thresholds.

Note: Automatic monitor creation is only available during a trial.

You can view and manage all automatically created monitors on the Monitors page, where they can be edited, cloned, or disabled.

Monitor creation

To create an APM monitor in Datadog, use the main navigation: Monitors > New Monitor > APM.

Choose between an APM Metrics or a Trace Analytics monitor:

Select monitor scope

Choose your primary tags, service, and resource from the dropdown menus.

Set alert conditions

Choose a Threshold or Anomaly alert:

Threshold alert

An alert is triggered whenever a metric crosses a threshold.

- Alert when Requests per second, Errors per second, Apdex, Error rate, Avg latency, p50 latency, p75 latency, p90 latency, or p99 latency

- is above, above or equal to, below, or below or equal to

- Alert threshold <NUMBER>

- Warning threshold <NUMBER>

- over the last 5 minutes, 15 minutes, 1 hour, etc. or custom to set a value between 1 minute and 48 hours.
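The threshold options above can be read as a simple comparison of one aggregated value against an alert threshold and an optional warning threshold. The following is a minimal sketch of that logic, not Datadog's evaluation code. The function name, the status strings, and the assumption that the value has already been aggregated over the evaluation window are all hypothetical.

```python
import operator

# Map the comparison choices from the form to Python comparison functions.
COMPARATORS = {
    "above": operator.gt,
    "above or equal to": operator.ge,
    "below": operator.lt,
    "below or equal to": operator.le,
}


def evaluate_threshold(value, comparison, alert_threshold, warning_threshold=None):
    """Return "ALERT", "WARN", or "OK" for one aggregated metric value."""
    compare = COMPARATORS[comparison]
    if compare(value, alert_threshold):
        return "ALERT"
    if warning_threshold is not None and compare(value, warning_threshold):
        return "WARN"
    return "OK"


# Error rate of 7% with a 10% alert threshold and a 5% warning threshold.
print(evaluate_threshold(0.07, "above", alert_threshold=0.10, warning_threshold=0.05))
```

For "above" conditions the warning threshold sits below the alert threshold; for "below" conditions (for example, Apdex) it sits above it.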

Anomaly alert

An alert is triggered whenever a metric deviates from an expected pattern.

- For Requests per second, Errors per second, Apdex, Error rate, Avg latency, p50 latency, p75 latency, p90 latency, or p99 latency

- Alert when <ALERT_THRESHOLD>%, <WARNING_THRESHOLD>%

- of values are <NUMBER> deviations above or below, above, or below

- the prediction during the past 5 minutes, 15 minutes, 1 hour, etc. or custom to set a value between 1 minute and 48 hours.
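To make the anomaly options concrete, the sketch below computes the percentage of values that fall outside a band of N deviations around a prediction, then compares that percentage to the alert and warning thresholds. It is a simplified illustration, not the anomaly detection algorithm Datadog uses. The predicted values, the single `stddev` figure, the function names, and the use of "greater than or equal to" for the percentage comparison are assumptions.

```python
def anomalous_percentage(values, predicted, stddev, deviations,
                         direction="above or below"):
    """Percentage of points lying outside the expected band."""
    def is_outside(value, expected):
        above = value > expected + deviations * stddev
        below = value < expected - deviations * stddev
        if direction == "above":
            return above
        if direction == "below":
            return below
        return above or below

    flags = [is_outside(v, p) for v, p in zip(values, predicted)]
    return 100.0 * sum(flags) / len(flags)


def evaluate_anomaly(values, predicted, stddev, deviations, direction,
                     alert_pct, warning_pct=None):
    """Return "ALERT", "WARN", or "OK" for one evaluation window."""
    pct = anomalous_percentage(values, predicted, stddev, deviations, direction)
    if pct >= alert_pct:
        return "ALERT"
    if warning_pct is not None and pct >= warning_pct:
        return "WARN"
    return "OK"


# Latency samples (ms) against a flat prediction of 100 ms with stddev 10.
latencies = [100, 105, 180, 190, 102]
print(evaluate_anomaly(latencies, [100] * 5, stddev=10, deviations=2,
                       direction="above or below", alert_pct=30, warning_pct=15))
```

Here two of the five samples exceed the 80 to 120 ms band, so 40% of values are anomalous and the 30% alert threshold is met.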

Advanced alert conditions

For detailed instructions on the advanced alert options (no data, evaluation delay, etc.), see the Monitor configuration page. For the metric-specific option full data window, see the Metric monitor page.

There is a default limit of 1000 Trace Analytics monitors per account. If you reach this limit, consider using multi alerts, or contact Support.

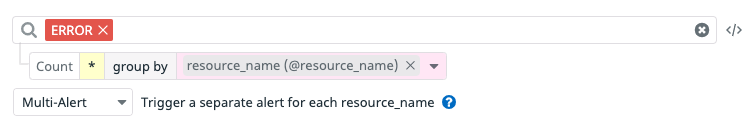

Define the search query

- Construct a search query using the same logic as a trace search.

- Choose to monitor over a trace count, facet, or measure:

- Monitor over a trace count: Use the search bar (optional) and do not select a facet or measure. Datadog evaluates the number of traces over a selected time frame and then compares it to the threshold conditions.

- Monitor over a facet or measure: If a facet is selected, the monitor alerts over the Unique value count of the facet. If a measure is selected, then it’s similar to a metric monitor, and aggregation needs to be selected (min, avg, sum, median, pc75, pc90, pc95, pc98, pc99, or max).

- Group traces by multiple dimensions (optional): All traces matching the query are aggregated into groups based on the value of up to four facets.

- Configure the alerting grouping strategy (optional):

- Simple alert: Simple alerts aggregate over all reporting sources. You receive one alert when the aggregated value meets the set conditions. If the query has a group by and you select simple alert mode, you get one alert when one or multiple groups’ values breach the threshold. This strategy may be selected to reduce notification noise.

- Multi alert: Multi alerts apply the alert to each source according to your group parameters. An alerting event is generated for each group that meets the set conditions. For example, you could group a query by @resource.name to receive a separate alert for each resource when a span’s error rate is high.
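The difference between the two grouping strategies can be sketched with a small example: spans are counted per group, and the same per-group counts then produce either a single notification (simple alert) or one notification per breaching group (multi alert). This is only an illustration of the behavior described above. The span dictionaries, the `resource.name` key, and the function names are hypothetical and do not reflect how Datadog stores or evaluates spans.

```python
from collections import Counter


def count_by_group(spans, facet):
    """Count spans per value of the given facet (a trace-count monitor with a group by)."""
    return Counter(span[facet] for span in spans if facet in span)


def simple_alert(group_counts, threshold):
    """One notification if one or more groups breach the threshold."""
    return any(count > threshold for count in group_counts.values())


def multi_alert(group_counts, threshold):
    """One notification per group that breaches the threshold."""
    return sorted(group for group, count in group_counts.items() if count > threshold)


spans = [
    {"resource.name": "GET /cart", "status": "error"},
    {"resource.name": "GET /cart", "status": "error"},
    {"resource.name": "GET /cart", "status": "error"},
    {"resource.name": "GET /home", "status": "error"},
]
counts = count_by_group(spans, "resource.name")
print(simple_alert(counts, threshold=2))  # a single alert for the whole monitor
print(multi_alert(counts, threshold=2))   # the groups that would each alert
```

With a threshold of 2, the simple strategy yields one alert, while the multi strategy alerts only for `GET /cart`.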

Note: Analytics monitors only evaluate spans retained by custom retention filters (not the intelligent retention filter). Additionally, spans indirectly indexed by trace-level retention filters (that is, spans that don’t match the query directly but belong to traces that do) are not evaluated by trace analytics monitors.

Select alert conditions

- Trigger when the query result is above, above or equal to, below, or below or equal to.

- The threshold during the last 5 minutes, 15 minutes, 1 hour, or custom to set a value between 5 minutes and 48 hours.

- Alert threshold: <NUMBER>

- Warning threshold: <NUMBER>

No data and below alerts

To receive a notification when a group matching a specific query stops sending spans, set the condition to below 1. This notifies you when no spans match the monitor query in the defined evaluation period for the group.

Advanced alert conditions

For detailed instructions on the advanced alert options (evaluation delay, etc.), see the Monitor configuration page.

Notifications

For detailed instructions on the Configure notifications and automations section, see the Notifications page.

Note: Find service-level monitors in the Software Catalog and on the Service Map. Find resource-level monitors on the individual resource pages, which you can reach by clicking a specific resource listed on a service details page.
