Processing Pipelines

APM Processing Pipelines let you transform, normalize, and enrich span attributes after ingestion and before storage, without modifying application code.

Use pipelines to:

- Standardize attribute naming across services

- Consolidate inconsistent keys into a single canonical attribute

- Extract structured data from string values

Processing Pipelines run in the Datadog backend and apply only to newly ingested spans. Each pipeline contains a filter query that defines which spans enter the pipeline, and one or more processors that define how to transform matching spans. Datadog evaluates pipelines in order from top to bottom.
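As a rough mental model, the filter-then-process behavior can be sketched in Python. This is an illustrative in-memory model only, not Datadog's implementation or API: the `Pipeline` class, the `run_pipelines` function, the dict-based span, and the use of plain predicates in place of the real filter query syntax are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable

Span = dict  # assumption: a span modeled as a flat dict of attributes


@dataclass
class Pipeline:
    name: str
    matches: Callable[[Span], bool]  # stands in for the filter query
    processors: list = field(default_factory=list)
    enabled: bool = True


def run_pipelines(span, pipelines):
    """Pass a newly ingested span through each enabled pipeline, top to bottom.

    Returns the names of the pipelines whose filter matched the span.
    """
    applied = []
    for pipeline in pipelines:
        if pipeline.enabled and pipeline.matches(span):
            for process in pipeline.processors:  # processors run in order
                process(span)
            applied.append(pipeline.name)
    return applied


# Hypothetical processors, each mutating the span in place.
def set_team(span):
    span["team"] = "payments"


def set_owner(span):
    # Reads the attribute written by the previous processor.
    span["owner"] = span["team"] + "-oncall"


def mark_billing(span):
    span["billing"] = True


pipelines = [
    Pipeline("checkout", lambda s: s.get("service") == "checkout", [set_team, set_owner]),
    Pipeline("billing", lambda s: s.get("service") == "billing", [mark_billing]),
]

span = {"service": "checkout"}
applied = run_pipelines(span, pipelines)
```

In this sketch only the `checkout` pipeline touches the span, because the `billing` filter does not match it.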

Create a pipeline

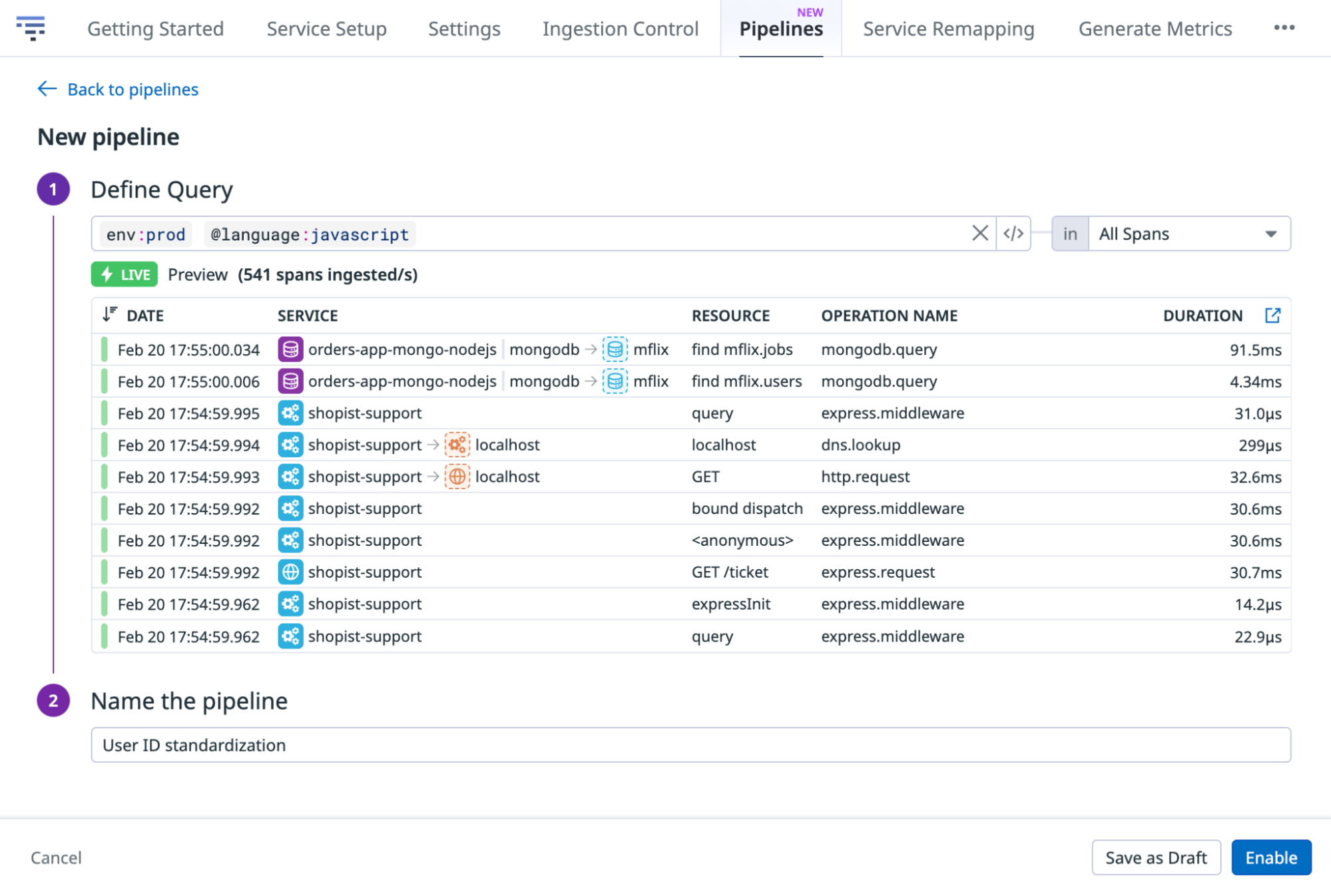

To create a pipeline:

1. Navigate to APM > Settings > Pipelines.

2. Click Add Pipeline.

3. Define a filter query using query syntax. The pipeline only processes spans matching this filter.

4. Name the pipeline.

5. Click Enable.

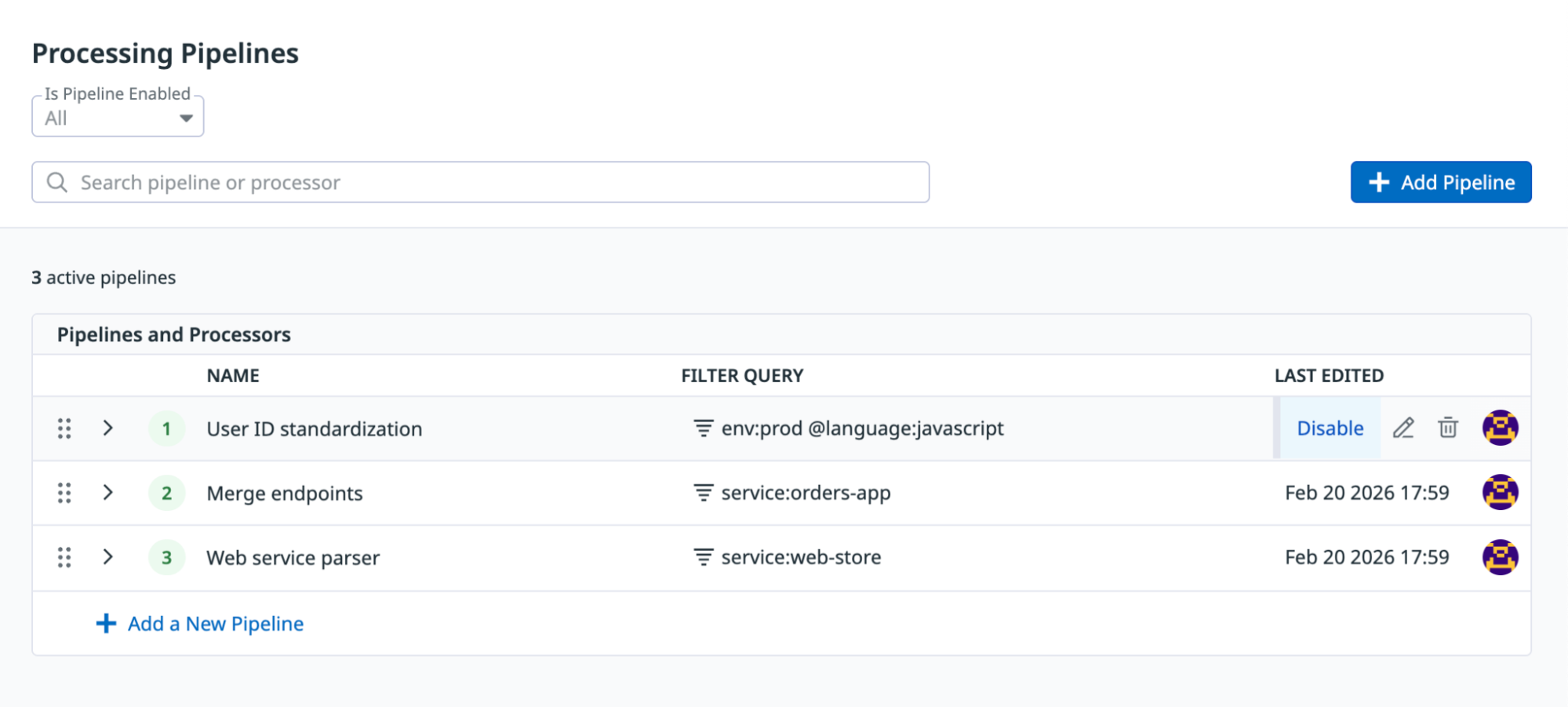

Manage pipelines

From the Pipelines page, you can:

- Enable or disable individual pipelines

- Reorder pipelines by dragging them

- Edit pipelines in draft mode

- Restrict access with RBAC

Disabling a pipeline stops it from processing newly ingested spans. It does not retroactively modify previously stored spans.

Processors

Processors define the transformations applied to matching spans. Within a pipeline, processors run sequentially. Attribute changes from one processor apply to all downstream processors in the same pipeline. To add a processor, expand a pipeline and click Add Processor.

Processors can only be applied to span attributes, not span tags.

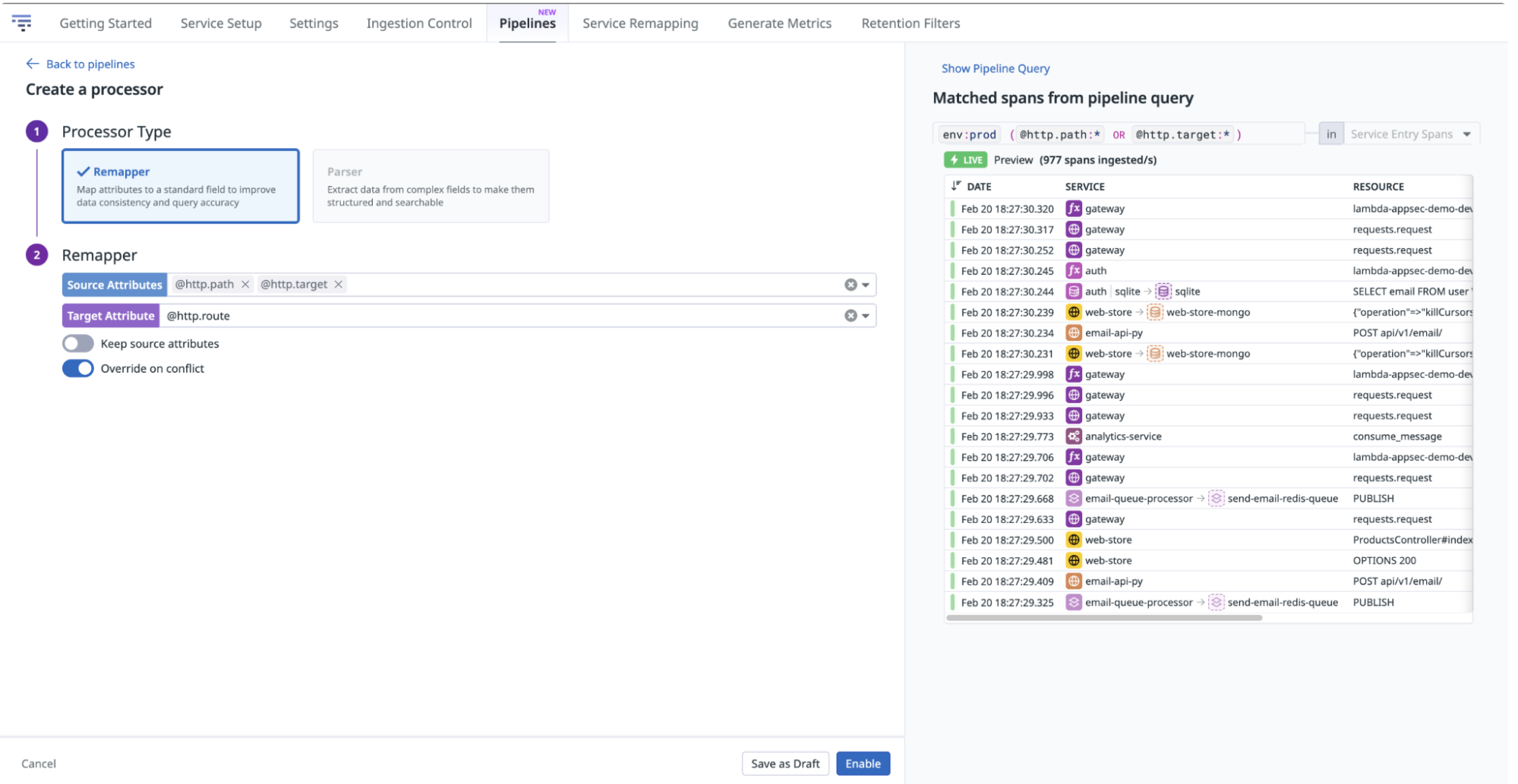

Remapper processor

The Remapper processor renames, merges, or removes span attributes to enforce consistent attribute naming across services. It modifies attribute keys, but does not extract new data from attribute values. To extract data from values, use the Parser processor.

The system attributes env, service, resource_name, operation_name, and @duration cannot be remapped. If you rename or remove attributes that dashboards, monitors, or retention filters reference, update those dashboards, monitors, and retention filters accordingly.

For example, different services may emit http.route, http.path, or http.target for the same logical field. Use the Remapper to map all three to http.route so that every matching span contains a single, standardized attribute.
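The http.route example can be illustrated with a small Python sketch. It is not how the Remapper is implemented or configured. The function name `remap_to_canonical`, the dict-based span, and the conflict behavior (source keys are removed and an existing target value wins) are assumptions chosen for the illustration.

```python
# System attributes that the documentation says cannot be remapped.
PROTECTED = {"env", "service", "resource_name", "operation_name", "@duration"}


def remap_to_canonical(span, sources, target):
    """Return a copy of span with every source key consolidated into target.

    Assumptions for this sketch: source keys are removed after remapping,
    and a value already stored under target is kept on conflict.
    """
    blocked = PROTECTED.intersection(sources + [target])
    if blocked:
        raise ValueError(f"cannot remap system attributes: {sorted(blocked)}")

    out = dict(span)
    for key in sources:
        if key in out:
            value = out.pop(key)
            out.setdefault(target, value)
    return out


# Three services emitting the same logical field under different keys.
spans = [
    {"http.route": "/users"},
    {"http.path": "/users"},
    {"http.target": "/users"},
]

normalized = [
    remap_to_canonical(s, ["http.path", "http.target"], "http.route") for s in spans
]
```

After the call, every span in `normalized` carries only the http.route key, so a single query on that attribute covers all three services.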

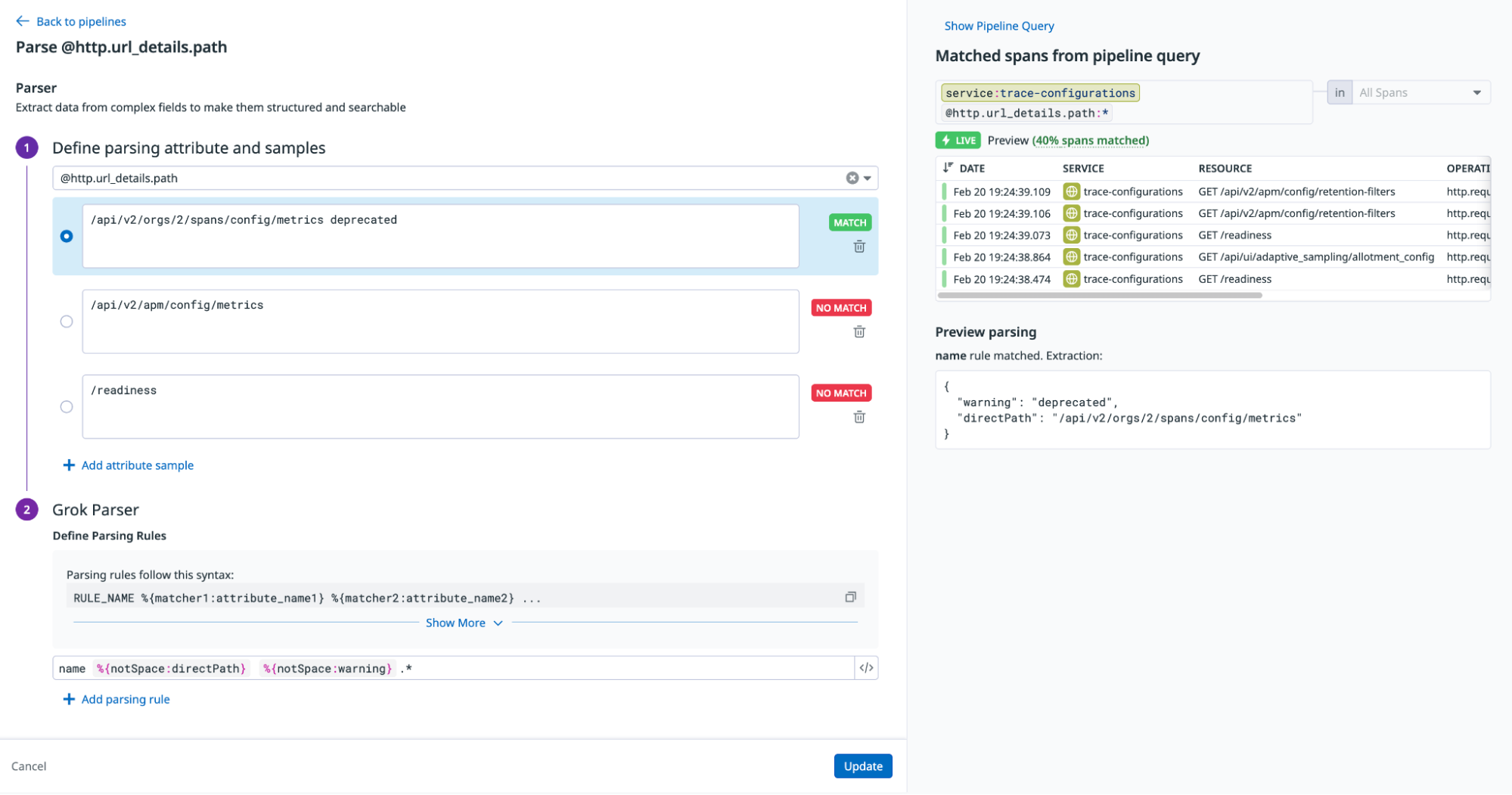

Parser processor

The Parser processor extracts structured attributes from existing span attribute values using Grok parsing rules. It uses the same Grok syntax as Log Management parsing, including all matchers and filters. Unlike the Remapper, the Parser creates new attributes based on parsed content. Use it to transform semi-structured text stored in span attributes into searchable, structured attributes. To rename or consolidate attribute keys, use the Remapper processor instead.

To configure Grok parsing rules:

- Define the parsing attribute and samples: Select the attribute that you want to parse, and add sample data for the selected attribute.

- Define parsing rules: Write your parsing rules in the rule editor.

- Preview parsing: Select a sample to evaluate it against the parsing rules. All samples show a status (match or no match) indicating whether one of the Grok rules matches the sample.

When multiple Grok rules match the same sample, only the first matching rule is applied.
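The first-match behavior and the match / no match preview status can be approximated in Python. Note that this sketch uses Python regular expressions as a stand-in for Grok rules, so the rule syntax shown here is not what you would type in the rule editor. The rule names, the sample strings, and the `parse` and `preview` helpers are hypothetical.

```python
import re

# Hypothetical rules, in priority order. Python regexes stand in for Grok rules.
RULES = [
    ("user_action", re.compile(r"^user=(?P<user>\w+) action=(?P<action>\w+)$")),
    ("user_only", re.compile(r"^user=(?P<user>\w+)")),
]


def parse(value):
    """Return (rule_name, extracted_attributes) for the first matching rule.

    Returns None when no rule matches. Later rules are never tried once an
    earlier rule has matched.
    """
    for name, pattern in RULES:
        found = pattern.search(value)
        if found:
            return name, found.groupdict()
    return None


def preview(samples):
    """Report a match / no match status for each sample, as in the preview step."""
    return {s: ("match" if parse(s) else "no match") for s in samples}


first = parse("user=alice action=login")
second = parse("user=bob")
statuses = preview(["user=bob", "connection reset"])
```

Both rules could match the first sample, but only `user_action` is applied because it comes first. Ordering specific rules before general ones avoids losing the more detailed extraction.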

Execution flow

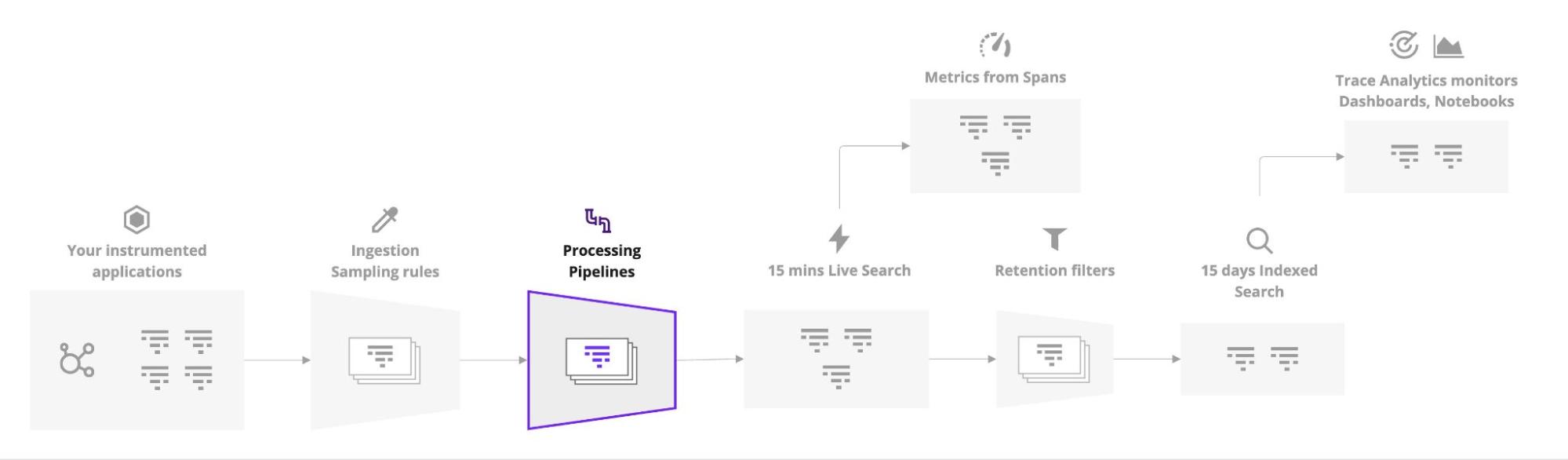

Processing Pipelines run at a specific point in the span processing life cycle:

1. Spans are ingested.

2. Datadog enrichments are applied (infrastructure, CI, source code metadata).

3. Processing Pipelines run.

4. Retention filters and metrics from spans are computed.

5. Spans are stored and indexed.
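One practical consequence of this ordering is that retention filters and metrics from spans see attributes as they exist after pipelines have run. The following Python sketch illustrates that ordering only. Every function in it is a hypothetical stand-in, and the enrichment value, the remapping, and the retention rule are invented for the example.

```python
def enrich(span):
    # Stand-in for Datadog enrichments (infrastructure, CI, source code metadata).
    return {**span, "host": "web-1"}


def processing_pipelines(span):
    # Stand-in for a pipeline that consolidates http.path into http.route.
    out = dict(span)
    if "http.path" in out:
        out["http.route"] = out.pop("http.path")
    return out


def retention_filter(span):
    # Hypothetical retention rule written against the canonical attribute.
    return span.get("http.route") == "/checkout"


def handle(ingested_span):
    """Run the stages in the documented order and return (span, keep)."""
    span = enrich(ingested_span)           # enrichments are applied
    span = processing_pipelines(span)      # Processing Pipelines run
    keep = retention_filter(span)          # retention is computed afterwards
    return span, keep                      # the span is then stored and indexed


stored, keep = handle({"http.path": "/checkout"})
```

Here the retention rule matches even though the span was ingested with http.path, because the rule is evaluated after the pipeline has renamed the attribute.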

Preprocessed attributes

Datadog preprocesses some span attributes before pipelines run. For example, Quantization of APM Data normalizes resource names by default and cannot be disabled. You can define additional pipelines if you need further customization of these attributes.
