
Tutorial - Enabling Tracing for a Java Application on Google Kubernetes Engine


Overview

This tutorial walks you through the steps for enabling tracing on a sample Java application installed in a cluster on Google Kubernetes Engine (GKE). In this scenario, the Datadog Agent is also installed in the cluster.

For other scenarios, including on a host, in a container, on other cloud infrastructure, and on applications written in other languages, see the other Enabling Tracing tutorials.

See Tracing Java Applications for comprehensive, general tracing setup documentation for Java.

Prerequisites

- A Datadog account and organization API key

- Git

- Kubectl

- GCloud - Set the USE_GKE_GCLOUD_AUTH_PLUGIN environment variable and configure additional properties for your GCloud project by running these commands:

  export USE_GKE_GCLOUD_AUTH_PLUGIN=True
  gcloud config set project <PROJECT_NAME>
  gcloud config set compute/zone <COMPUTE_ZONE>
  gcloud config set compute/region <COMPUTE_REGION>

- Helm - Install by running these commands:

  curl -fsSL -o get_helm.sh https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-3
  chmod 700 get_helm.sh
  ./get_helm.sh

  Configure Helm by running these commands:

  helm repo add datadog-crds https://helm.datadoghq.com
  helm repo add kube-state-metrics https://prometheus-community.github.io/helm-charts
  helm repo add datadog https://helm.datadoghq.com
  helm repo update

Install the sample Kubernetes Java application

The code sample for this tutorial is on GitHub, at github.com/DataDog/apm-tutorial-java-host. To get started, clone the repository:

git clone https://github.com/DataDog/apm-tutorial-java-host.git

The repository contains a multi-service Java application preconfigured to run inside a Kubernetes cluster. The sample app is a basic notes app with a REST API to add and change data. The docker-compose YAML files used to build the containers for the Kubernetes pods are in the docker directory. This tutorial uses the service-docker-compose-k8s.yaml file, which builds containers for the application.

In each of the notes and calendar directories, there are two sets of Dockerfiles for building the applications, either with Maven or with Gradle. This tutorial uses the Maven build, but if you are more familiar with Gradle, you can use it instead with the corresponding changes to build commands.

Kubernetes configuration files for the notes app, the calendar app, and the Datadog Agent are in the kubernetes directory.

Getting the sample application running involves building the images from the docker folder, uploading them to a registry, and creating Kubernetes resources from the kubernetes folder.

Starting the cluster

If you don’t already have a GKE cluster that you want to reuse, create one by running the following command, replacing the <VARIABLES> with the values you want to use:

gcloud container clusters create <CLUSTER_NAME> --num-nodes=1 --network=<NETWORK> --subnetwork=<SUBNETWORK>

Note: For a list of available networks and subnetworks, use the following command:

gcloud compute networks subnets list

Connect to the cluster by running:

gcloud container clusters get-credentials <CLUSTER_NAME>
gcloud config set container/cluster <CLUSTER_NAME>

To facilitate communication with the applications that will be deployed, edit the network’s firewall rules to ensure that the GKE cluster allows TCP traffic on ports 30080 and 30090.
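Once the notes app is deployed later in this tutorial, you can confirm from your workstation that the firewall lets traffic through to the node ports. The following is a minimal sketch, assuming Java 11 or later; the class name PortCheck is hypothetical, and a successful connection only proves reachability if a service is actually listening on the port.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper: checks whether a TCP port on a node accepts connections,
// for example ports 30080 and 30090 after the tutorial apps are deployed.
final class PortCheck {

    // Returns true if a TCP connection to host:port succeeds within timeoutMillis.
    static boolean isReachable(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or host could not be resolved.
            return false;
        }
    }

    public static void main(String[] args) {
        // Usage: java PortCheck.java <EXTERNAL_IP>
        String host = args.length > 0 ? args[0] : "127.0.0.1";
        for (int port : new int[] {30080, 30090}) {
            System.out.printf("%s:%d reachable=%b%n", host, port, isReachable(host, port, 2000));
        }
    }
}
```

If the check fails for a port that should be serving traffic, revisit the firewall rule before debugging the application itself.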

Build and upload the application image

If you’re not familiar with Google Container Registry (GCR), it might be helpful to read Quickstart for Container Registry.

In the sample project’s /docker directory, run the following commands:

1. Authenticate with GCR by running:

   gcloud auth configure-docker

2. Build a Docker image for the sample app, adjusting the platform setting to match yours:

   DOCKER_DEFAULT_PLATFORM=linux/amd64 docker-compose -f service-docker-compose-k8s.yaml build notes

3. Tag the container with the GCR destination:

   docker tag docker-notes:latest gcr.io/<PROJECT_ID>/notes-tutorial:notes

4. Upload the container to the GCR registry:

   docker push gcr.io/<PROJECT_ID>/notes-tutorial:notes

Your application is containerized and available for GKE clusters to pull.

Configure the application locally and deploy

Open

kubernetes/notes-app.yamland update theimageentry with the URL for the GCR image, where you pushed the container in step 4 above:spec: containers: - name: notes-app image: gcr.io/<PROJECT_ID>/notes-tutorial:notes imagePullPolicy: AlwaysFrom the

/kubernetesdirectory, run the following command to deploy thenotesapp:kubectl create -f notes-app.yamlTo exercise the app, you need to know its external IP address to call its REST API. First, find the

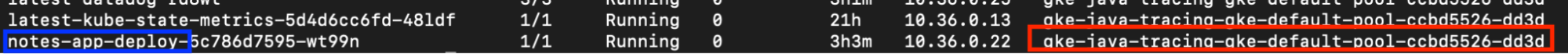

notes-app-deploypod in the list output by the following command, and note its node:kubectl get pods -o wideThen find that node name in the output from the following command, and note the external IP value:

kubectl get nodes -o wideIn the examples shown, the

notes-appis running on nodegke-java-tracing-gke-default-pool-ccbd5526-dd3d, which has an external IP of35.196.6.199.Open up another terminal and send API requests to exercise the app. The notes application is a REST API that stores data in an in-memory H2 database running on the same container. Send it a few commands:

curl '<EXTERNAL_IP>:30080/notes'
[]
curl -X POST '<EXTERNAL_IP>:30080/notes?desc=hello'
{"id":1,"description":"hello"}
curl '<EXTERNAL_IP>:30080/notes?id=1'
{"id":1,"description":"hello"}
curl '<EXTERNAL_IP>:30080/notes'
[{"id":1,"description":"hello"}]
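If you prefer to drive the API from Java instead of curl, the requests above map directly onto the JDK’s java.net.http client. This is a minimal sketch, assuming Java 11 or later and the NodePort 30080 used in this tutorial; the class NotesClient and its methods are hypothetical and not part of the sample repository.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.stream.Collectors;

// Hypothetical client that sends the same requests as the curl commands:
// GET /notes, POST /notes?desc=..., and GET /notes?id=...
final class NotesClient {

    private final HttpClient http = HttpClient.newHttpClient();
    private final String baseUrl;

    NotesClient(String externalIp) {
        // NodePort 30080 exposes the notes service on the node's external IP.
        this.baseUrl = "http://" + externalIp + ":30080";
    }

    // Builds a /notes URI with URL-encoded query parameters in the map's iteration order.
    URI notesUri(Map<String, String> params) {
        String query = params.entrySet().stream()
            .map(e -> e.getKey() + "=" + URLEncoder.encode(e.getValue(), StandardCharsets.UTF_8))
            .collect(Collectors.joining("&"));
        return URI.create(baseUrl + "/notes" + (query.isEmpty() ? "" : "?" + query));
    }

    String get(Map<String, String> params) throws Exception {
        HttpRequest request = HttpRequest.newBuilder(notesUri(params)).GET().build();
        return http.send(request, HttpResponse.BodyHandlers.ofString()).body();
    }

    String post(Map<String, String> params) throws Exception {
        HttpRequest request = HttpRequest.newBuilder(notesUri(params))
            .POST(HttpRequest.BodyPublishers.noBody())
            .build();
        return http.send(request, HttpResponse.BodyHandlers.ofString()).body();
    }

    public static void main(String[] args) throws Exception {
        NotesClient client = new NotesClient(args[0]); // pass <EXTERNAL_IP>
        Map<String, String> newNote = new LinkedHashMap<>();
        newNote.put("desc", "hello");
        System.out.println(client.get(Map.of()));
        System.out.println(client.post(newNote));
        System.out.println(client.get(Map.of("id", "1")));
        System.out.println(client.get(Map.of()));
    }
}
```

Each call should produce a server-side trace once tracing is enabled, just like the curl commands.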

After you’ve seen the application running, stop it so that you can enable tracing on it:

kubectl delete -f notes-app.yaml

Enable tracing

Now that you have a working Java application, configure it to enable tracing.

Add the Java tracing package to your project. Because the Agent runs in the GKE cluster, you don’t need to install anything else; you only need to make sure the Dockerfiles are configured properly. Open the notes/dockerfile.notes.maven file and uncomment the line that downloads dd-java-agent:

RUN curl -Lo dd-java-agent.jar 'https://dtdg.co/latest-java-tracer'

Within the same notes/dockerfile.notes.maven file, comment out the ENTRYPOINT line for running without tracing. Then uncomment the ENTRYPOINT line that runs the application with tracing enabled:

ENTRYPOINT ["java" , "-javaagent:../dd-java-agent.jar", "-Ddd.trace.sample.rate=1", "-jar" , "target/notes-0.0.1-SNAPSHOT.jar"]

This automatically instruments the application with the Datadog Java tracer.

The flags on these sample commands, particularly the sample rate, are not necessarily appropriate for environments outside this tutorial. For information about what to use in your real environment, read Tracing configuration.

Unified Service Tags identify traced services across different versions and deployment environments so that they can be correlated within Datadog, and so you can use them to search and filter. The three environment variables used for Unified Service Tagging are DD_SERVICE, DD_ENV, and DD_VERSION. For applications deployed with Kubernetes, these environment variables can be added within the deployment YAML file, specifically for the deployment object, pod spec, and pod container template.

For this tutorial, the kubernetes/notes-app.yaml file already has these environment variables defined for the notes application for the deployment object, the pod spec, and the pod container template, for example:

...
spec:
  replicas: 1
  selector:
    matchLabels:
      name: notes-app-pod
      app: java-tutorial-app
  template:
    metadata:
      name: notes-app-pod
      labels:
        name: notes-app-pod
        app: java-tutorial-app
        tags.datadoghq.com/env: "dev"
        tags.datadoghq.com/service: "notes"
        tags.datadoghq.com/version: "0.0.1"
...
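If traces later appear without the expected service, env, or version, one quick check is whether the three variables actually reach the container. The sketch below is a hypothetical debugging aid, not something the tutorial requires (the Java tracer reads these variables itself); it assumes Java 11 or later, and the class name UstCheck is illustrative.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical startup check: reports which Unified Service Tagging variables
// (DD_SERVICE, DD_ENV, DD_VERSION) are missing or blank in the environment.
final class UstCheck {

    static final List<String> UST_VARS = List.of("DD_SERVICE", "DD_ENV", "DD_VERSION");

    // Takes the environment as a map so it can be tested; pass System.getenv() in real use.
    static List<String> missing(Map<String, String> env) {
        List<String> result = new ArrayList<>();
        for (String name : UST_VARS) {
            String value = env.get(name);
            if (value == null || value.isBlank()) {
                result.add(name);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> missing = missing(System.getenv());
        System.out.println(missing.isEmpty() ? "All UST variables are set" : "Missing: " + missing);
    }
}
```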

Rebuild and upload the application image

Rebuild the image with tracing enabled using the same steps as before in the docker directory:

gcloud auth configure-docker

DOCKER_DEFAULT_PLATFORM=linux/amd64 docker-compose -f service-docker-compose-k8s.yaml build notes

docker tag docker-notes:latest gcr.io/<PROJECT_ID>/notes-tutorial:notes

docker push gcr.io/<PROJECT_ID>/notes-tutorial:notes

Your application with tracing enabled is containerized and available for GKE clusters to pull.

Install and run the Agent using Helm

Next, deploy the Agent to GKE to collect the trace data from your instrumented application:

Open kubernetes/datadog-values.yaml to see the minimum required configuration for the Agent and APM on GKE. This configuration file is used by the command you run next.

From the /kubernetes directory, run the following command, inserting your API key and cluster name:

helm upgrade -f datadog-values.yaml --install --debug latest --set datadog.apiKey=<DD_API_KEY> --set datadog.clusterName=<CLUSTER_NAME> --set datadog.site=datadoghq.com datadog/datadog

For more secure deployments that do not expose the API key, read this guide on using secrets. Also, if you use a Datadog site other than us1, replace datadoghq.com with your site.
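The value you pass to datadog.site depends on which Datadog site your account uses. The lookup below is a hypothetical helper covering a partial list of sites; confirm the value for your account in the Datadog site documentation, because new sites may be added.

```java
import java.util.Locale;
import java.util.Map;

// Hypothetical helper with a partial map from Datadog site name to the datadog.site value.
// This list may be incomplete; check the Datadog site documentation for the current set.
final class DatadogSite {

    static final Map<String, String> SITES = Map.of(
        "us1", "datadoghq.com",
        "us3", "us3.datadoghq.com",
        "us5", "us5.datadoghq.com",
        "eu1", "datadoghq.eu",
        "ap1", "ap1.datadoghq.com",
        "us1-fed", "ddog-gov.com");

    // Builds the argument for the helm command's --set flag, e.g. "datadog.site=datadoghq.eu".
    static String helmSetArg(String site) {
        String host = SITES.get(site.toLowerCase(Locale.ROOT));
        if (host == null) {
            throw new IllegalArgumentException("Unknown Datadog site: " + site);
        }
        return "datadog.site=" + host;
    }
}
```

For example, an account on eu1 would pass --set datadog.site=datadoghq.eu instead of the us1 value shown in the command.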

Launch the app to see automatic tracing

Using the same steps as before, deploy the notes app with kubectl create -f notes-app.yaml and find the external IP address for the node it runs on.

Run some curl commands to exercise the app:

curl '<EXTERNAL_IP>:30080/notes'
[]
curl -X POST '<EXTERNAL_IP>:30080/notes?desc=hello'
{"id":1,"description":"hello"}
curl '<EXTERNAL_IP>:30080/notes?id=1'
{"id":1,"description":"hello"}
curl '<EXTERNAL_IP>:30080/notes'
[{"id":1,"description":"hello"}]

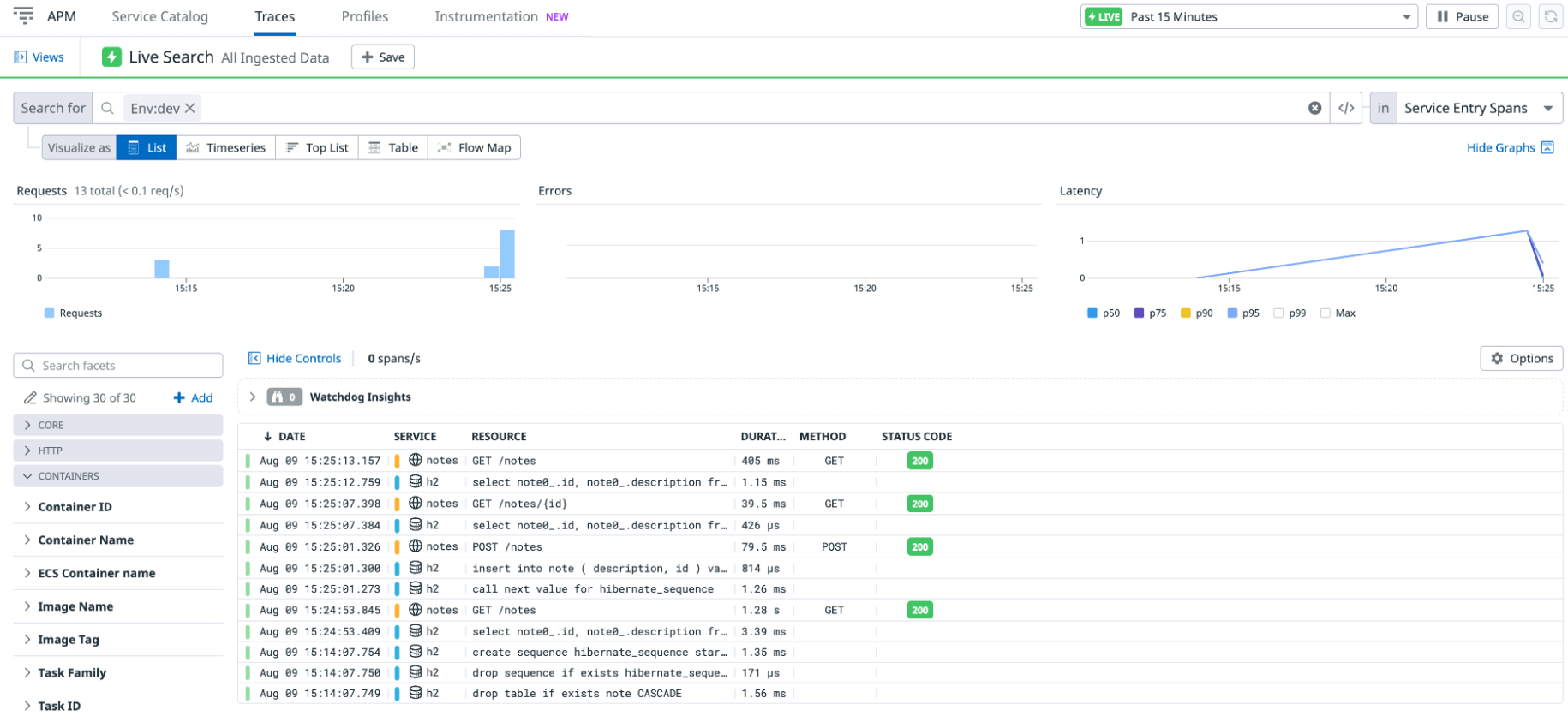

Wait a few moments, and go to APM > Traces in Datadog, where you can see a list of traces corresponding to your API calls:

h2 is the embedded in-memory database for this tutorial, and notes is the Spring Boot application. The traces list shows all the spans, when they started, what resource was tracked with the span, and how long it took.

If you don’t see traces after several minutes, clear any filter in the Traces Search field (sometimes it filters on an environment variable such as ENV that you aren’t using).

Examine a trace

On the Traces page, click on a POST /notes trace to see a flame graph that shows how long each span took and what other spans occurred before a span completed. The bar at the top of the graph is the span you selected on the previous screen (in this case, the initial entry point into the notes application).

The width of a bar indicates how long it took to complete. A bar at a lower depth represents a span that completes during the lifetime of a bar at a higher depth.
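To make the layout rule concrete, the sketch below models spans as time windows with children one level deeper, then checks that each child fits inside its parent and prints an indented outline. It is a hypothetical, simplified model assuming Java 11 or later, not the Datadog tracer’s data structures; the span names and timings in main are illustrative, not measured values.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical, simplified span model that illustrates the flame graph layout:
// children sit one level below their parent and, in this model, fit inside its lifetime.
final class FlameGraphSketch {

    static final class Span {
        final String name;
        final long startMs;
        final long durationMs;
        final List<Span> children = new ArrayList<>();

        Span(String name, long startMs, long durationMs) {
            this.name = name;
            this.startMs = startMs;
            this.durationMs = durationMs;
        }

        long endMs() {
            return startMs + durationMs;
        }

        // Adds and returns a child span one level deeper in the graph.
        Span child(String name, long startMs, long durationMs) {
            Span c = new Span(name, startMs, durationMs);
            children.add(c);
            return c;
        }
    }

    // True if every descendant starts and ends inside its parent's time window.
    static boolean nestedCorrectly(Span parent) {
        for (Span c : parent.children) {
            if (c.startMs < parent.startMs || c.endMs() > parent.endMs() || !nestedCorrectly(c)) {
                return false;
            }
        }
        return true;
    }

    // Renders one line per span: indentation is depth, the range is the span's time window.
    static void render(Span span, int depth, StringBuilder out) {
        out.append("  ".repeat(depth))
           .append(span.name)
           .append(" [").append(span.startMs).append("-").append(span.endMs()).append(" ms]\n");
        for (Span c : span.children) {
            render(c, depth + 1, out);
        }
    }

    public static void main(String[] args) {
        // Illustrative names and timings only.
        Span root = new Span("POST /notes", 0, 120);
        Span handler = root.child("notes handler", 10, 60);
        handler.child("h2 query", 20, 15);
        StringBuilder out = new StringBuilder();
        render(root, 0, out);
        System.out.print(out);
        System.out.println("nested correctly: " + nestedCorrectly(root));
    }
}
```

In the flame graph, the widths correspond to the durations in this outline and each indentation level corresponds to one row of depth.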

The flame graph for a POST trace looks something like this:

A GET /notes trace looks something like this:

Tracing configuration

The Java tracing library uses Java’s built-in agent and monitoring support. The flag -javaagent:../dd-java-agent.jar in the Dockerfile tells the JVM where to find the Java tracing library so it can run as a Java Agent. Learn more about Java Agents at https://www.baeldung.com/java-instrumentation.

The dd.trace.sample.rate flag sets the sample rate for this application. The ENTRYPOINT command in the Dockerfile sets its value to 1, which means that 100% of all requests to the notes service are sent to the Datadog backend for analysis and display. For a low-volume test application, this is fine. Do not do this in production or in any high-volume environment, because this results in a very large volume of data. Instead, sample some of your requests. Pick a value between 0 and 1. For example, -Ddd.trace.sample.rate=0.1 sends traces for 10% of your requests to Datadog. Read more about tracing configuration settings and sampling mechanisms.
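To make the sample-rate arithmetic concrete: with a rate of 0.1, roughly one trace in ten is kept. The sketch below is a hypothetical probabilistic sampler that illustrates that idea only; it is not how the Datadog tracer actually makes sampling decisions (see the sampling documentation for that). The class name SampleRateSketch is illustrative.

```java
import java.util.SplittableRandom;

// Hypothetical rate-based sampler for illustration; NOT the Datadog tracer's algorithm.
final class SampleRateSketch {

    private final double rate;
    private final SplittableRandom random;

    SampleRateSketch(double rate, long seed) {
        if (rate < 0.0 || rate > 1.0) {
            throw new IllegalArgumentException("rate must be between 0 and 1");
        }
        this.rate = rate;
        this.random = new SplittableRandom(seed);
    }

    // nextDouble() is in [0, 1), so rate 1.0 keeps every trace and rate 0.0 keeps none.
    boolean keep() {
        return random.nextDouble() < rate;
    }

    public static void main(String[] args) {
        int requests = 100_000;
        for (double rate : new double[] {1.0, 0.1}) {
            SampleRateSketch sampler = new SampleRateSketch(rate, 42L);
            int kept = 0;
            for (int i = 0; i < requests; i++) {
                if (sampler.keep()) {
                    kept++;
                }
            }
            System.out.printf("rate=%.1f kept %d of %d traces%n", rate, kept, requests);
        }
    }
}
```

With rate 1.0 every request produces a kept trace, which is why that setting generates so much data in a busy environment.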

Notice that the sampling rate flag in the command appears before the -jar flag. That’s because this is a parameter for the Java Virtual Machine, not your application. Make sure that when you add the Java Agent to your application, you specify the flag in the right location.
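The ordering rule can be captured in code. This minimal sketch assembles the same command line as the ENTRYPOINT above, placing -javaagent and every -D system property before -jar. It assumes Java 11 or later; the class JavaCommand and its build method are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper that assembles a `java` command line for an instrumented service.
// JVM options (-javaagent, -D...) must precede -jar: anything after the JAR path is passed
// to the application's main() method instead of the JVM, so the tracer would never see it.
final class JavaCommand {

    static List<String> build(String agentJar, Map<String, String> systemProps,
                              String appJar, List<String> appArgs) {
        List<String> cmd = new ArrayList<>();
        cmd.add("java");
        cmd.add("-javaagent:" + agentJar);                     // load the tracer as a Java agent
        for (Map.Entry<String, String> p : systemProps.entrySet()) {
            cmd.add("-D" + p.getKey() + "=" + p.getValue());   // JVM system properties
        }
        cmd.add("-jar");
        cmd.add(appJar);
        cmd.addAll(appArgs);                                   // application arguments only
        return cmd;
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the -D flags in insertion order.
        Map<String, String> props = new LinkedHashMap<>();
        props.put("dd.trace.sample.rate", "1");
        List<String> cmd = build("../dd-java-agent.jar", props,
            "target/notes-0.0.1-SNAPSHOT.jar", List.of());
        System.out.println(String.join(" ", cmd));
    }
}
```

The resulting list could be passed to new ProcessBuilder(cmd).start(). Adding dd.trace.debug=true to the map would enable the tracer’s debug mode, which is useful for the troubleshooting described at the end of this tutorial.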

Add manual instrumentation to the Java application

Automatic instrumentation is convenient, but sometimes you want more fine-grained spans. Datadog’s Java DD Trace API allows you to specify spans within your code using annotations or code.

The following steps walk you through modifying the build scripts to download the Java tracing library and adding some annotations to the code to trace into some sample methods.

Delete the current application deployments:

kubectl delete -f notes-app.yaml

Open /notes/src/main/java/com/datadog/example/notes/NotesHelper.java. This example already contains commented-out code that demonstrates the different ways to set up custom tracing on the code.

Uncomment the lines that import libraries to support manual tracing:

import datadog.trace.api.Trace;
import datadog.trace.api.DDTags;
import io.opentracing.Scope;
import io.opentracing.Span;
import io.opentracing.Tracer;
import io.opentracing.tag.Tags;
import io.opentracing.util.GlobalTracer;
import java.io.PrintWriter;
import java.io.StringWriter;

Uncomment the lines that manually trace the two public processes. These demonstrate the use of @Trace annotations to specify aspects such as operationName and resourceName in a trace:

@Trace(operationName = "traceMethod1", resourceName = "NotesHelper.doLongRunningProcess")
// ...
@Trace(operationName = "traceMethod2", resourceName = "NotesHelper.anotherProcess")

You can also create a separate span for a specific code block in the application. Within the span, add service and resource name tags and error handling tags. These tags result in a flame graph showing the span and metrics in Datadog visualizations. Uncomment the lines that manually trace the private method:

Tracer tracer = GlobalTracer.get();
// Tags can be set when creating the span
Span span = tracer.buildSpan("manualSpan1")
    .withTag(DDTags.SERVICE_NAME, "NotesHelper")
    .withTag(DDTags.RESOURCE_NAME, "privateMethod1")
    .start();
try (Scope scope = tracer.activateSpan(span)) {
    // Tags can also be set after creation
    span.setTag("postCreationTag", 1);
    Thread.sleep(30);
    Log.info("Hello from the custom privateMethod1");

And also the lines that set tags on errors:

} catch (Exception e) {
    // Set error on span
    span.setTag(Tags.ERROR, true);
    span.setTag(DDTags.ERROR_MSG, e.getMessage());
    span.setTag(DDTags.ERROR_TYPE, e.getClass().getName());

    final StringWriter errorString = new StringWriter();
    e.printStackTrace(new PrintWriter(errorString));
    span.setTag(DDTags.ERROR_STACK, errorString.toString());
    Log.info(errorString.toString());
} finally {
    span.finish();
}

Update your Maven build by opening notes/pom.xml and uncommenting the lines configuring dependencies for manual tracing. The dd-trace-api library is used for the @Trace annotations, and opentracing-util and opentracing-api are used for manual span creation.

Rebuild the application and upload it to GCR following the same steps as before, running these commands in the docker directory:

gcloud auth configure-docker
DOCKER_DEFAULT_PLATFORM=linux/amd64 docker-compose -f service-docker-compose-k8s.yaml build notes
docker tag docker-notes:latest gcr.io/<PROJECT_ID>/notes-tutorial:notes
docker push gcr.io/<PROJECT_ID>/notes-tutorial:notes

Using the same steps as before, deploy the notes app with kubectl create -f notes-app.yaml and find the external IP address for the node it runs on.

Resend some HTTP requests, specifically some GET requests.

In the Trace Explorer, click one of the new GET requests to see its flame graph. Note the higher level of detail in the flame graph now that the getAll function has custom tracing.

The privateMethod around which you created a manual span now shows up as a separate block from the other calls and is highlighted in a different color, because that span sets its own service name (NotesHelper). The other methods where you used the @Trace annotation show under the same service and color as the GET request, which is the notes application. Custom instrumentation is valuable when there are key parts of the code that need to be highlighted and monitored.

For more information, read Custom Instrumentation.
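The error-handling block in NotesHelper.java converts the exception’s stack trace into a string before storing it in the DDTags.ERROR_STACK tag. The standalone sketch below isolates that StringWriter/PrintWriter pattern so you can see what ends up in the tag; the class name StackTraces is hypothetical, and no Datadog library is needed to run it.

```java
import java.io.PrintWriter;
import java.io.StringWriter;

// Standalone version of the pattern NotesHelper.java uses to build the ERROR_STACK value:
// print the stack trace into an in-memory writer and read it back as a String.
final class StackTraces {

    static String asString(Throwable t) {
        StringWriter buffer = new StringWriter();
        try (PrintWriter writer = new PrintWriter(buffer)) {
            t.printStackTrace(writer);
        }
        return buffer.toString();
    }

    public static void main(String[] args) {
        try {
            Thread.sleep(-1); // a negative timeout throws IllegalArgumentException
        } catch (Exception e) {
            System.out.println(e.getClass().getName()); // what the sample stores as ERROR_TYPE
            System.out.println(e.getMessage());         // what the sample stores as ERROR_MSG
            System.out.print(asString(e));              // what the sample stores as ERROR_STACK
        }
    }
}
```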

Add a second application to see distributed traces

Tracing a single application is a great start, but the real value in tracing is seeing how requests flow through your services. This is called distributed tracing.

The sample project includes a second application called calendar that returns a random date whenever it is invoked. The POST endpoint in the Notes application has a second query parameter named add_date. When it is set to y, Notes calls the calendar application to get a date to add to the note.
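When notes calls calendar, the trace context must travel with the HTTP request so that the calendar spans join the same trace. The sketch below illustrates that idea in plain Java. The class and method names are hypothetical; x-datadog-trace-id and x-datadog-parent-id are Datadog propagation headers, and depending on configuration the tracer may also send W3C traceparent headers. You do not write this code for the tutorial: the tracer’s HTTP client and server instrumentation handles propagation automatically.

```java
import java.util.HashMap;
import java.util.Map;

// Simplified, hypothetical model of trace-context propagation between two services.
// The Datadog Java tracer does this automatically through its HTTP instrumentation.
final class PropagationSketch {

    static final class SpanContext {
        final long traceId;  // shared by every span in one distributed trace
        final long parentId; // span ID of the caller's span (0 for a root span)
        final long spanId;   // this span's own ID

        SpanContext(long traceId, long parentId, long spanId) {
            this.traceId = traceId;
            this.parentId = parentId;
            this.spanId = spanId;
        }
    }

    static final String TRACE_ID = "x-datadog-trace-id";
    static final String PARENT_ID = "x-datadog-parent-id";

    // Caller side (notes): write the current span's context into outgoing HTTP headers.
    static Map<String, String> inject(SpanContext current) {
        Map<String, String> headers = new HashMap<>();
        headers.put(TRACE_ID, Long.toUnsignedString(current.traceId));
        headers.put(PARENT_ID, Long.toUnsignedString(current.spanId));
        return headers;
    }

    // Receiver side (calendar): start a child span in the same trace, parented to the caller.
    static SpanContext extractChild(Map<String, String> headers, long newSpanId) {
        long traceId = Long.parseUnsignedLong(headers.get(TRACE_ID));
        long parentId = Long.parseUnsignedLong(headers.get(PARENT_ID));
        return new SpanContext(traceId, parentId, newSpanId);
    }
}
```

Because the calendar span carries the caller’s trace ID and points at the caller’s span as its parent, Datadog can stitch both services into a single flame graph.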

Configure the calendar app for tracing by adding dd-java-agent to the startup command in the Dockerfile, like you previously did for the notes app. Open calendar/dockerfile.calendar.maven and see that it is already downloading dd-java-agent:

RUN curl -Lo dd-java-agent.jar 'https://dtdg.co/latest-java-tracer'

Within the same calendar/dockerfile.calendar.maven file, comment out the ENTRYPOINT line for running without tracing. Then uncomment the ENTRYPOINT line that runs the application with tracing enabled:

ENTRYPOINT ["java" , "-javaagent:../dd-java-agent.jar", "-Ddd.trace.sample.rate=1", "-jar" , "target/calendar-0.0.1-SNAPSHOT.jar"]

Again, the flags, particularly the sample rate, are not necessarily appropriate for environments outside this tutorial. For information about what to use in your real environment, read Tracing configuration.

Build the calendar application and publish it to GCR (the notes image you pushed in the previous section already includes custom instrumentation). From the docker directory, run:

gcloud auth configure-docker
DOCKER_DEFAULT_PLATFORM=linux/amd64 docker-compose -f service-docker-compose-k8s.yaml build calendar
docker tag docker-calendar:latest gcr.io/<PROJECT_ID>/calendar-tutorial:calendar
docker push gcr.io/<PROJECT_ID>/calendar-tutorial:calendar

Open kubernetes/calendar-app.yaml and update the image entry with the URL for the GCR image, where you pushed the calendar app in the previous step:

spec:
  containers:
    - name: calendar-app
      image: gcr.io/<PROJECT_ID>/calendar-tutorial:calendar
      imagePullPolicy: Always

From the kubernetes directory, deploy both the notes and calendar apps, now with custom instrumentation, on the cluster:

kubectl create -f notes-app.yaml
kubectl create -f calendar-app.yaml

Using the method you used before, find the external IP of the notes app.

Send a POST request with the add_date parameter:

curl -X POST '<EXTERNAL_IP>:30080/notes?desc=hello_again&add_date=y'
{"id":1,"description":"hello_again with date 2022-11-06"}

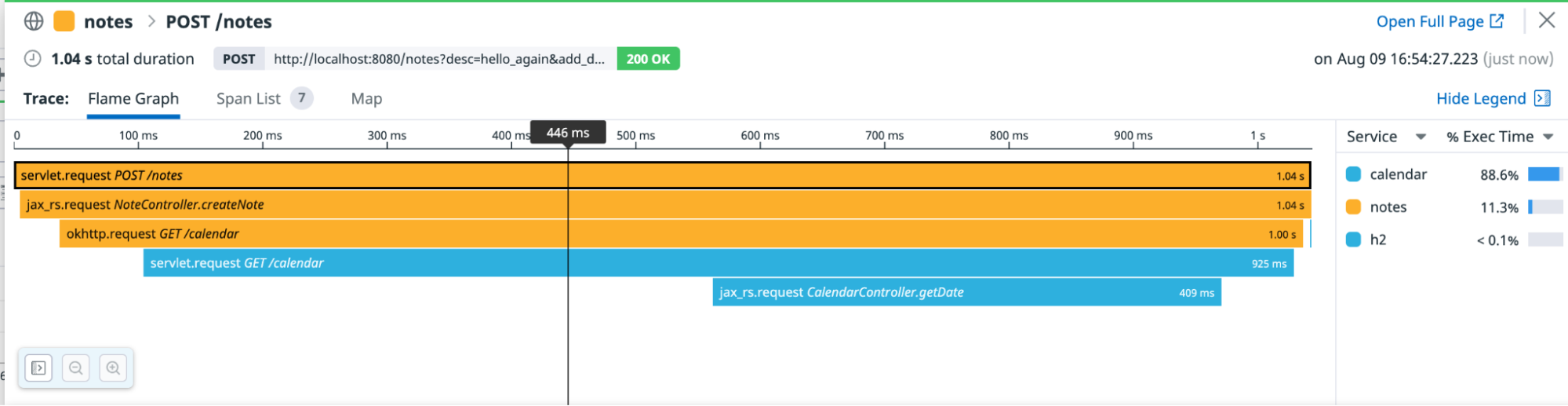

In the Trace Explorer, click this latest trace to see a distributed trace between the two services:

Note that you didn’t change anything in the notes application. Datadog automatically instruments both the okHttp library used to make the HTTP call from notes to calendar, and the Jetty library used to listen for HTTP requests in notes and calendar. This allows the trace information to be passed from one application to the other, capturing a distributed trace.

When you’re done exploring, clean up all resources and delete the deployments:

kubectl delete -f notes-app.yaml
kubectl delete -f calendar-app.yaml

See the documentation for GKE for information about deleting the cluster.

Troubleshooting

If you’re not receiving traces as expected, set up debug mode for the Java tracer. Read Enable debug mode to find out more.
