Outlier Monitor

Overview

Outlier detection is an algorithmic feature that lets you detect when a specific group behaves differently from its peers. For example, you can receive an alert when one web server in a pool handles an unusual number of requests, or when one AWS availability zone produces more 500 errors than the others.

Monitor creation

To create an outlier monitor in Datadog, use the main navigation: Monitors > New Monitor > Outlier.

Define the metric

Any metric currently reporting to Datadog can be monitored. For more information, see the Metric Monitor page.

The outlier monitor requires a metric with a group (hosts, availability zones, partitions, etc.) in which at least three members exhibit uniform behavior.

Set alert conditions

- Trigger a separate alert for each outlier <GROUP>

- over a window of 5 minutes, 15 minutes, or 1 hour, or over a custom period (between 1 minute and 24 hours)

- using the algorithm MAD, DBSCAN, scaledMAD, or scaledDBSCAN

- tolerance: 0.33, 1.0, 3.0, etc.

- %: 10, 20, 30, etc. (only for the MAD algorithms)

When setting up an outlier monitor, the time window is an important parameter. If the window is too large, you may not be alerted in time. If it is too short, the alerts cannot ride out one-off spikes.

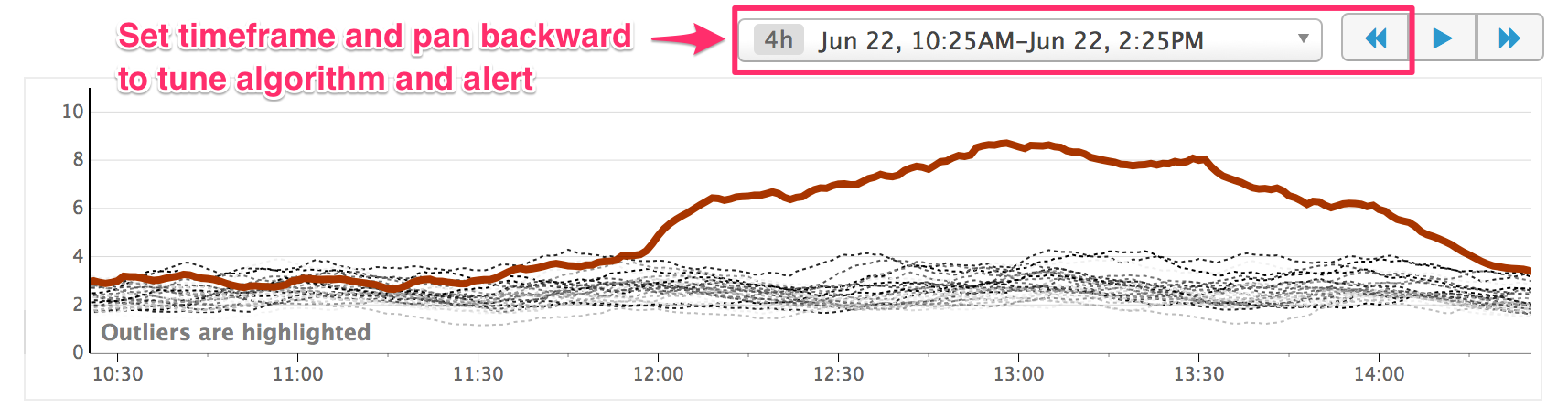

To make sure your alert is properly calibrated, set the time window in the graph preview and use the rewind button («) to see when outliers would have triggered an alert. You can also use this feature to tune the parameters of a specific detection algorithm.

Algorithms

Datadog offers two types of outlier detection algorithms: DBSCAN/scaledDBSCAN and MAD/scaledMAD. Using the default algorithm, DBSCAN, is recommended. If you have trouble detecting the right outliers, adjust the DBSCAN parameters or try the MAD algorithm. The scaled algorithms can be useful if your metrics are closely clustered and collected at large scale.

DBSCAN (density-based spatial clustering of applications with noise) is a popular clustering algorithm. Traditionally, DBSCAN takes:

- A parameter ε that specifies the distance threshold under which two points are considered close.

- The minimum number of points that must lie within a point's ε-radius before that point can start agglomerating.

Datadog uses a simplified form of DBSCAN to detect outliers on time series. Each group is treated as a point in d dimensions, where d is the number of elements in the time series. Any point can agglomerate, and any point that is not in the largest cluster is considered an outlier. The initial distance threshold is set by building a median time series, obtained by taking the median of the values of the existing time series at each point in time. The Euclidean distance between each group and the median series is then calculated. The threshold is the median of those distances, multiplied by a normalizing constant.

Parameters

This implementation of DBSCAN takes one parameter, tolerance, which is the constant by which the initial threshold is multiplied to obtain DBSCAN's distance parameter ε. Set the tolerance according to how similarly you expect your groups to behave: the larger the value, the more a group can deviate from its peers before it is flagged.
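The simplified DBSCAN procedure described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Datadog's implementation: the value of the normalizing constant is not given in this page, so `NORMALIZING_CONSTANT = 1.0` is a placeholder, and the function name `dbscan_outliers` and the sample host data are hypothetical.

```python
import math
from statistics import median

# Hypothetical value: the text mentions a normalizing constant but does not state it.
NORMALIZING_CONSTANT = 1.0


def dbscan_outliers(series_by_group, tolerance=3.0):
    """Return the groups that do not belong to the largest cluster."""
    names = list(series_by_group)
    length = len(series_by_group[names[0]])

    # Median time series: the median across groups at each point in time.
    median_series = [
        median(series_by_group[name][t] for name in names) for t in range(length)
    ]

    # Euclidean distance between each group and the median series.
    distances = [math.dist(series_by_group[name], median_series) for name in names]

    # Initial threshold (median distance times the constant), scaled by tolerance.
    epsilon = median(distances) * NORMALIZING_CONSTANT * tolerance

    # Any point may agglomerate, so clusters are the connected components of
    # the "within epsilon of each other" relation between groups.
    clusters = []
    unvisited = set(names)
    while unvisited:
        frontier = [unvisited.pop()]
        cluster = set(frontier)
        while frontier:
            current = frontier.pop()
            near = {
                other for other in unvisited
                if math.dist(series_by_group[current], series_by_group[other]) <= epsilon
            }
            unvisited -= near
            cluster |= near
            frontier.extend(near)
        clusters.append(cluster)

    largest = max(clusters, key=len)
    return set(names) - largest


hosts = {
    "web-1": [10, 11, 10, 12],
    "web-2": [11, 10, 11, 11],
    "web-3": [10, 12, 11, 10],
    "web-4": [30, 31, 29, 32],
}
print(dbscan_outliers(hosts, tolerance=3.0))  # {'web-4'}
```

Raising `tolerance` widens ε, so groups must drift further from their peers before they fall out of the largest cluster.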

MAD (median absolute deviation) is a robust measure of variability and can be viewed as the robust analog of the standard deviation. Robust statistics describe data in a way that limits the influence of outliers.

Parameters

To use MAD for your outlier monitor, configure two parameters: tolerance and %.

Tolerance is the number of deviations a point (independently of groups) must be away from the median to be considered an outlier. Tune it to the expected variability of the data. For example, if the data generally falls within a narrow range of values, the tolerance should be small. If points sometimes vary widely, set a higher value so that this variability does not trigger a false positive.

Percent is the percentage of points in a group that are considered outliers. If this percentage is exceeded, the whole group is marked as an outlier.
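The interplay between tolerance and % can be sketched as follows. This is a minimal illustration, not Datadog's implementation: it assumes the median and the MAD are computed over all points in the window, pooled across groups, which this page does not specify. The function name `mad_outliers` and the sample data are hypothetical.

```python
from statistics import median


def mad_outliers(series_by_group, tolerance=3.0, pct=20.0):
    """Return the groups whose share of outlying points exceeds pct."""
    # Assumption: pool every point in the window, regardless of group.
    all_points = [v for series in series_by_group.values() for v in series]
    center = median(all_points)

    # Median absolute deviation of the pooled points.
    mad = median(abs(v - center) for v in all_points)

    flagged = set()
    for name, series in series_by_group.items():
        # A point is outlying when it is more than `tolerance` deviations
        # away from the median.
        outlying = sum(1 for v in series if abs(v - center) > tolerance * mad)
        if 100.0 * outlying / len(series) > pct:
            flagged.add(name)
    return flagged


hosts = {
    "web-1": [10, 11, 10, 12],
    "web-2": [11, 10, 11, 11],
    "web-3": [10, 12, 11, 10],
    "web-4": [30, 31, 29, 32],
}
print(mad_outliers(hosts, tolerance=3.0, pct=20.0))  # {'web-4'}
```

In this sample the pooled median is 11 and the MAD is 1, so every point of `web-4` lies more than three deviations from the median and the group exceeds the 20% limit.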

DBSCAN and MAD have scaled versions (scaledDBSCAN and scaledMAD). In most situations, the scaled algorithms behave the same as their regular counterparts. However, if DBSCAN or MAD identify outliers within a closely clustered group of metrics and you want the outlier detection algorithm to take the overall magnitude of the metrics into account, try the scaled algorithms.

DBSCAN vs. MAD

So which algorithm should you use? For most outliers, either algorithm performs equally well with the default settings. However, there are specific cases where one of the two is more appropriate.

In the image below, several hosts flush their buffers at the same time, while one host flushes its buffer slightly later. DBSCAN detects this as an outlier, whereas MAD does not. Because the synchronization of the groups here is only the result of the hosts being restarted at the same time, MAD is probably the better choice. On the other hand, if the metrics represented a scheduled job that must run in sync across all hosts rather than flushed buffers, DBSCAN would be the right choice.
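The buffer-flush scenario can be reproduced with simplified stand-ins for both algorithms. The sketch below is illustrative only: `dbscan_like` and `mad_like` are hypothetical names, the normalizing constant is assumed to be 1, the MAD statistics are assumed to be pooled across groups, and the host data is made up.

```python
import math
from statistics import median


def dbscan_like(groups, tolerance=3.0):
    """Groups outside the largest cluster (normalizing constant assumed to be 1)."""
    names = list(groups)
    steps = range(len(groups[names[0]]))
    median_series = [median(groups[n][t] for n in names) for t in steps]
    epsilon = tolerance * median(math.dist(groups[n], median_series) for n in names)
    clusters, unvisited = [], set(names)
    while unvisited:
        frontier = [unvisited.pop()]
        cluster = set(frontier)
        while frontier:
            current = frontier.pop()
            near = {o for o in unvisited if math.dist(groups[current], groups[o]) <= epsilon}
            unvisited -= near
            cluster |= near
            frontier.extend(near)
        clusters.append(cluster)
    return set(names) - max(clusters, key=len)


def mad_like(groups, tolerance=3.0, pct=20.0):
    """Groups whose share of far-from-median points exceeds pct (pooled median assumed)."""
    points = [v for series in groups.values() for v in series]
    center = median(points)
    mad = median(abs(v - center) for v in points)
    return {
        name for name, series in groups.items()
        if 100.0 * sum(abs(v - center) > tolerance * mad for v in series) / len(series) > pct
    }


buffers = {
    "host-a": [10, 20, 30, 0, 10, 20],
    "host-b": [11, 21, 31, 1, 11, 21],
    "host-c": [9, 19, 29, 1, 9, 19],
    "host-d": [10, 20, 30, 40, 0, 10],  # flushes one step later than the others
}
print(dbscan_like(buffers))  # {'host-d'}
print(mad_like(buffers))     # set()
```

The distance-based approach compares whole series, so a shift in timing moves `host-d` far from its peers. The MAD approach looks at individual values, and only one of the six points of `host-d` is unusually high, which stays under the 20% limit.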

Advanced alert conditions

For detailed instructions on the advanced alert options (auto resolve, new group delay, etc.), see the Monitor configuration documentation.

Notifications

For detailed instructions on using the Say what's happening and Notify your team sections, see the Notifications page.

API

To create outlier monitors programmatically, see the Datadog API reference. Datadog recommends exporting a monitor's JSON to build the query for the API.
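As a rough sketch of what such a request body might look like, the helper below assembles a monitor definition as a Python dictionary and prints it as JSON. It makes no API call. The helper name `build_outlier_monitor`, the metric, the monitor name, and the message are hypothetical, and the `outliers(...)` query layout is an assumption that should be checked against the JSON exported from an existing outlier monitor.

```python
import json


def build_outlier_monitor(metric_query, group_by, window="last_30m",
                          algorithm="DBSCAN", tolerance=3.0, pct=None,
                          name="Outlier hosts", message="A host is behaving differently."):
    """Assemble a monitor definition; the query layout is an assumption."""
    args = [f"{metric_query} by {{{group_by}}}", f"'{algorithm}'", str(tolerance)]
    if pct is not None:
        # The % parameter only applies to the MAD algorithms.
        args.append(str(pct))
    query = f"avg({window}):outliers({', '.join(args)}) > 0"
    return {
        "name": name,
        "type": "query alert",
        "query": query,
        "message": message,
    }


payload = build_outlier_monitor("avg:system.cpu.user{role:web}", "host")
print(json.dumps(payload, indent=2))
```

Comparing the generated `query` string with the exported JSON of a monitor configured in the UI is the safest way to confirm the exact syntax before sending it to the API.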

Troubleshooting

The outlier algorithms are designed to identify groups that behave differently from their peers. If your groups exhibit "banding" behavior (for example, each band represents a different shard), Datadog recommends tagging each band with an identifier and setting up outlier detection alerts for each band specifically.
