Set up Data Streams Monitoring for Go

The following instrumentation types are available:

- Automatic instrumentation for Kafka-based workloads

- Manual instrumentation for Kafka-based workloads

- Manual instrumentation for other queuing technologies or protocols

Prerequisites

To implement Data Streams Monitoring, you must have the latest versions of the Datadog Agent and the Data Streams Monitoring libraries installed.

Note: This documentation uses v2 of the Go tracer, which Datadog recommends for all users. If you are using v1, see the migration guide to upgrade to v2.

Data Streams Monitoring did not change between v1 and v2 of the tracer.

Supported libraries

| Technology | Library | Minimum tracer version | Recommended tracer version |
|---|---|---|---|
| Kafka | confluent-kafka-go | 1.56.1 | 1.66.0 or later |
| Kafka | Sarama | 1.56.1 | 1.66.0 or later |
| Kafka | kafka-go | 1.63.0 | 1.63.0 or later |

Installation

Monitoring Kafka Pipelines

Data Streams Monitoring uses message headers to propagate context through Kafka streams. If log.message.format.version is set in the Kafka broker configuration, it must be set to 0.11.0.0 or higher. Data Streams Monitoring is not supported for versions lower than this.
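As a rough illustration of the version requirement above, the following sketch compares a `log.message.format.version` string against `0.11.0.0`, component by component. The helper names (`parseVersion`, `supportsHeaders`) are hypothetical, and ignoring any suffix after a `-` is an assumption of this sketch, not documented Kafka or Datadog behavior.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// minHeaderVersion is the lowest log.message.format.version named in the text above.
var minHeaderVersion = []int{0, 11, 0, 0}

// parseVersion turns a dotted version such as "0.11.0.0" into its numeric
// components. Anything after a '-' is ignored (an assumption of this sketch).
func parseVersion(v string) ([]int, error) {
	if i := strings.IndexByte(v, '-'); i >= 0 {
		v = v[:i]
	}
	parts := strings.Split(v, ".")
	out := make([]int, 0, len(parts))
	for _, p := range parts {
		n, err := strconv.Atoi(p)
		if err != nil {
			return nil, fmt.Errorf("invalid version component %q: %w", p, err)
		}
		out = append(out, n)
	}
	return out, nil
}

// supportsHeaders reports whether v is at least 0.11.0.0.
// Missing components are treated as zero.
func supportsHeaders(v string) (bool, error) {
	got, err := parseVersion(v)
	if err != nil {
		return false, err
	}
	for i := 0; i < len(got) || i < len(minHeaderVersion); i++ {
		var a, b int
		if i < len(got) {
			a = got[i]
		}
		if i < len(minHeaderVersion) {
			b = minHeaderVersion[i]
		}
		if a != b {
			return a > b, nil
		}
	}
	return true, nil
}

func main() {
	for _, v := range []string{"0.10.2", "0.11.0.0", "2.8-IV1"} {
		ok, err := supportsHeaders(v)
		fmt.Println(v, ok, err)
	}
}
```

A check like this could run in a deployment script before enabling Data Streams Monitoring; the broker configuration itself remains the source of truth.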

Monitoring RabbitMQ pipelines

The RabbitMQ integration can provide detailed monitoring and metrics of your RabbitMQ deployments. For full compatibility with Data Streams Monitoring, Datadog recommends configuring the integration as follows:

    instances:
      - prometheus_plugin:
          url: http://<HOST>:15692
          unaggregated_endpoint: detailed?family=queue_coarse_metrics&family=queue_consumer_count&family=channel_exchange_metrics&family=channel_queue_exchange_metrics&family=node_coarse_metrics

This ensures that all RabbitMQ graphs populate, and that you see detailed metrics for individual exchanges as well as queues.
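The `unaggregated_endpoint` value is long and easy to mistype. As a minimal sketch, it can be assembled from the list of metric families with Go's standard `net/url` package. The function name `unaggregatedEndpoint` is hypothetical; the family names are taken from the configuration above.

```go
package main

import (
	"fmt"
	"net/url"
)

// families lists the metric families from the configuration above, in the same order.
var families = []string{
	"queue_coarse_metrics",
	"queue_consumer_count",
	"channel_exchange_metrics",
	"channel_queue_exchange_metrics",
	"node_coarse_metrics",
}

// unaggregatedEndpoint builds the unaggregated_endpoint value: the "detailed"
// path followed by one family=<name> query parameter per family.
func unaggregatedEndpoint(families []string) string {
	q := url.Values{"family": families}
	return "detailed?" + q.Encode()
}

func main() {
	fmt.Println(unaggregatedEndpoint(families))
}
```

Generating the value this way keeps the family list readable when the configuration is templated.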

Automatic instrumentation

Automatic instrumentation uses Orchestrion to install dd-trace-go and supports both the Sarama and Confluent Kafka libraries.

To automatically instrument your service:

- Follow the Orchestrion Getting Started guide to compile or run your service with Orchestrion.

- Set the environment variable `DD_DATA_STREAMS_ENABLED=true`.

Manual instrumentation

Sarama Kafka client

To manually instrument the Sarama Kafka client with Data Streams Monitoring:

1. Import the `ddsarama` Go library:

    import (
        ddsarama "github.com/DataDog/dd-trace-go/contrib/IBM/sarama/v2"
    )

2. Wrap the producer with `ddsarama.WrapAsyncProducer`:

    ...
    config := sarama.NewConfig()
    producer, err := sarama.NewAsyncProducer([]string{bootStrapServers}, config)
    // Handle err here before wrapping the producer.

    // ADD THIS LINE
    producer = ddsarama.WrapAsyncProducer(config, producer, ddsarama.WithDataStreams())

Confluent Kafka client

To manually instrument Confluent Kafka with Data Streams Monitoring:

1. Import the `ddkafka` Go library:

    import (
        ddkafka "github.com/DataDog/dd-trace-go/contrib/confluentinc/confluent-kafka-go/kafka.v2/v2"
    )

2. Wrap the producer creation with `ddkafka.NewProducer` and pass the `ddkafka.WithDataStreams()` option:

    // CREATE PRODUCER WITH THIS WRAPPER
    producer, err := ddkafka.NewProducer(&kafka.ConfigMap{
        "bootstrap.servers": bootStrapServers,
    }, ddkafka.WithDataStreams())

If a service consumes data from one point and produces it to another, propagate the context between the two points using the Go context structure:

1. Extract the context from the headers:

    ctx = datastreams.ExtractFromBase64Carrier(ctx, ddsarama.NewConsumerMessageCarrier(message))

2. Inject it into the headers before producing downstream:

    datastreams.InjectToBase64Carrier(ctx, ddsarama.NewProducerMessageCarrier(message))

Other queuing technologies or protocols

You can also use manual instrumentation. For example, you can propagate context through Kinesis.

Instrumenting the produce call

- Ensure your message supports the TextMapWriter interface.

- Inject the context into your message and instrument the produce call as follows:

    ctx, ok := tracer.SetDataStreamsCheckpointWithParams(ctx, options.CheckpointParams{PayloadSize: getProducerMsgSize(message)}, "direction:out", "type:kinesis", "topic:kinesis_arn")
    if ok {
        datastreams.InjectToBase64Carrier(ctx, message)
    }
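To make the produce-side requirements concrete, here is a minimal sketch of a message type that could act as a writer carrier, along with one possible `getProducerMsgSize` helper. Everything here is an assumption for illustration: `kinesisMessage` is a hypothetical envelope, the local `textMapWriter` interface only mirrors the single `Set(key, val string)` method that TextMapWriter is understood to require (check the library for the authoritative definition), and the `int64` size and the header name are choices of this sketch.

```go
package main

import "fmt"

// textMapWriter mirrors, as an assumption, the method set expected of a
// TextMapWriter carrier. It is defined locally so the sketch is self-contained.
type textMapWriter interface {
	Set(key, val string)
}

// kinesisMessage is a hypothetical envelope: the record payload plus the
// string headers that travel with it.
type kinesisMessage struct {
	Headers map[string]string
	Data    []byte
}

// Set stores a propagation header on the message.
func (m *kinesisMessage) Set(key, val string) {
	if m.Headers == nil {
		m.Headers = make(map[string]string)
	}
	m.Headers[key] = val
}

// Compile-time check that *kinesisMessage has the writer method set.
var _ textMapWriter = (*kinesisMessage)(nil)

// getProducerMsgSize returns the message size in bytes: the payload plus
// all header keys and values.
func getProducerMsgSize(m *kinesisMessage) int64 {
	size := int64(len(m.Data))
	for k, v := range m.Headers {
		size += int64(len(k) + len(v))
	}
	return size
}

func main() {
	msg := &kinesisMessage{Data: []byte("hello")}
	// "example-header" is a placeholder for whatever the inject call writes.
	msg.Set("example-header", "abc")
	fmt.Println(getProducerMsgSize(msg))
}
```

Because the headers live inside the envelope, the envelope (not only `Data`) must be what gets serialized into the Kinesis record; otherwise the context is lost in transit.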

Instrumenting the consume call

- Ensure your message supports the TextMapReader interface.

- Extract the context from your message and instrument the consume call as follows:

    ctx, ok := tracer.SetDataStreamsCheckpointWithParams(datastreams.ExtractFromBase64Carrier(context.Background(), message), options.CheckpointParams{PayloadSize: payloadSize}, "direction:in", "type:kinesis", "topic:kinesis_arn")

Surveillance des connecteurs

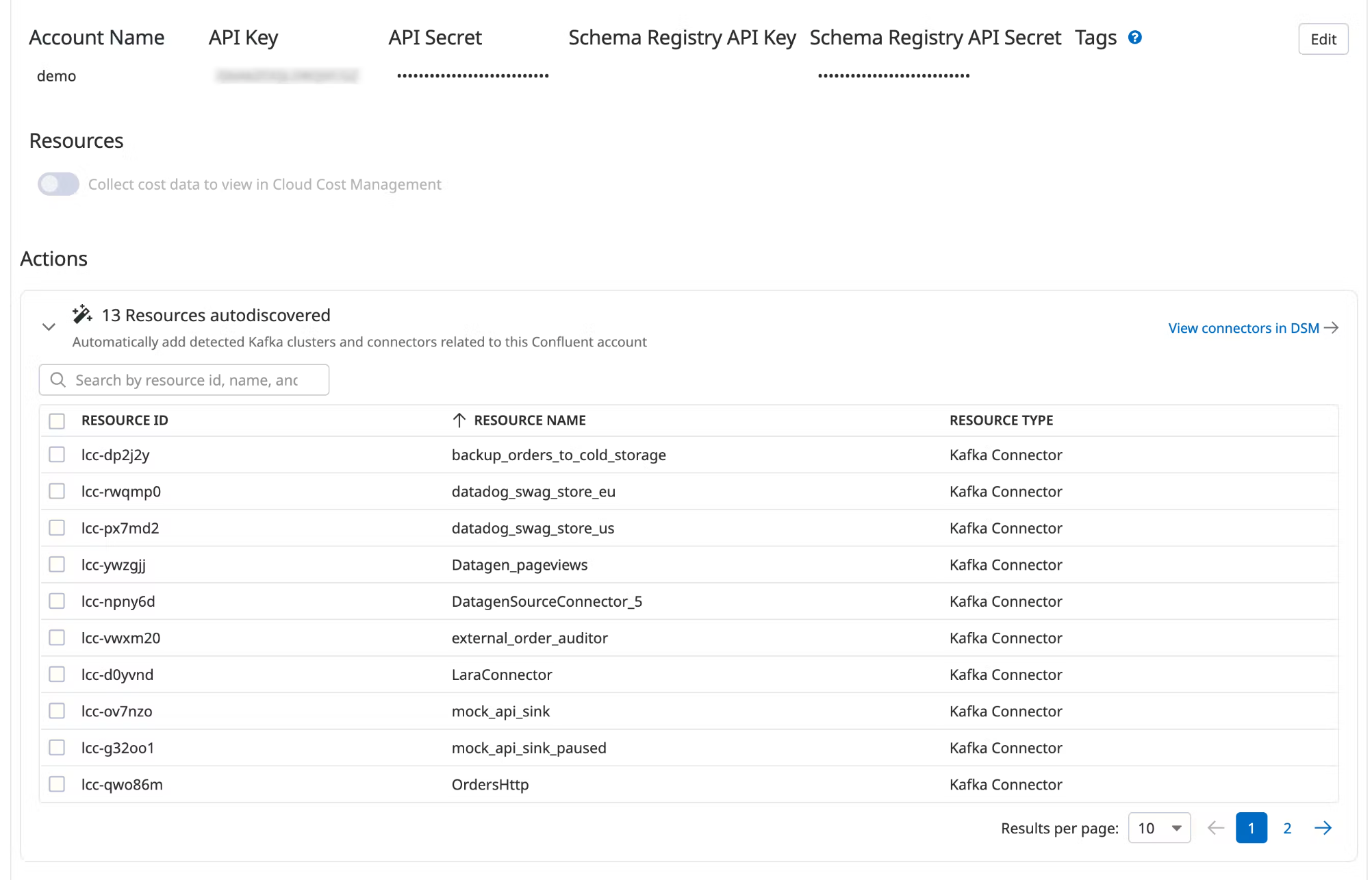

Connecteurs Confluent Cloud

Data Streams Monitoring can automatically discover your Confluent Cloud connectors and visualize them within the context of your end-to-end streaming data pipeline.

Setup

1. Install and configure the Datadog-Confluent Cloud integration.

2. In Datadog, open the Confluent Cloud integration tile.

3. Under Actions, a list of resources populates with detected clusters and connectors. Datadog attempts to discover new connectors every time you view this integration tile.

4. Select the resources you want to add.

5. Click Add Resources.

6. Navigate to Data Streams Monitoring to visualize the connectors and track connector status and throughput.
