Instrumenting a Node.js Cloud Run Job

Setup

A sample application is available on GitHub.

For full visibility and access to all Datadog features in Cloud Run Jobs, ensure you’ve installed the Google Cloud integration and are using serverless-init version 1.9.0 or later.

Install the Datadog Node.js tracer.

In your main application, add dd-trace-js:

npm install dd-trace --save

Add the following to your application code to initialize the tracer:

const tracer = require('dd-trace').init({
  logInjection: true,
});

Set the following environment variable to specify that the dd-trace/init module is required when the Node.js process starts:

ENV NODE_OPTIONS="--require dd-trace/init"

Note: Cloud Run Jobs run to completion rather than serving requests, so auto instrumentation won’t create a top-level “job” span. For end-to-end visibility, create your own root span. See the Node.js Custom Instrumentation instructions.
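As a rough illustration of the root span mentioned in the note, the sketch below wraps a job's work in a single span using the tracer's trace() method. The helper name runJobWithRootSpan, the span name cloudrun.job, and the tag name are assumptions chosen for this example, not names that Datadog requires. CLOUD_RUN_JOB and CLOUD_RUN_TASK_INDEX are environment variables that Cloud Run sets for job tasks.

```javascript
// Hypothetical helper: wraps the whole job in one root span.
// `tracer` is expected to be the object returned by require('dd-trace').init().
function runJobWithRootSpan(tracer, work) {
  const jobName = process.env.CLOUD_RUN_JOB || 'unknown-job';

  // When the callback returns a promise, tracer.trace() finishes the span
  // once that promise settles.
  return tracer.trace('cloudrun.job', { resource: jobName }, async (span) => {
    // Illustrative tag name; pick whatever fits your own conventions.
    span.setTag('job.task_index', process.env.CLOUD_RUN_TASK_INDEX || '0');
    return work(span);
  });
}
```

With the tracer initialized as shown earlier, a job entry point could call runJobWithRootSpan(tracer, main), where main is your own async function, so that spans created during the run have a common parent.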

For more information, see Tracing Node.js applications.

Install serverless-init.

Serverless-init automatically creates a span for the duration of a task, even if the tracer is not installed. You can disable this by setting DD_APM_ENABLED=false. However, tracing is recommended because it is required for task-level visibility.

Cloud Run Jobs requires serverless-init version 1.9.0 or later.

Datadog publishes new releases of the serverless-init container image to Google's gcr.io, AWS's ECR, and on Docker Hub:

| hub.docker.com | gcr.io | public.ecr.aws |
|---|---|---|
| datadog/serverless-init | gcr.io/datadoghq/serverless-init | public.ecr.aws/datadog/serverless-init |

Images are tagged based on semantic versioning, with each new version receiving three relevant tags:

- 1, 1-alpine: use these to track the latest minor releases, without breaking changes
- 1.x.x, 1.x.x-alpine: use these to pin to a precise version of the library
- latest, latest-alpine: use these to follow the latest version release, which may include breaking changes

Add the following instructions and arguments to your Dockerfile.

COPY --from=datadog/serverless-init:<YOUR_TAG> /datadog-init /app/datadog-init
ENTRYPOINT ["/app/datadog-init"]
CMD ["/nodejs/bin/node", "/path/to/your/app.js"]

Alternative configuration

Datadog expects serverless-init to be the top-level application, with the rest of your app’s command line passed in for serverless-init to execute.

If you already have an entrypoint defined inside your Dockerfile, you can instead modify the CMD argument.

CMD ["/app/datadog-init", "/nodejs/bin/node", "/path/to/your/app.js"]

If you require your entrypoint to be instrumented as well, you can instead swap your entrypoint and CMD arguments.

ENTRYPOINT ["/app/datadog-init"]
CMD ["/your_entrypoint.sh", "/nodejs/bin/node", "/path/to/your/app.js"]

As long as your command to run is passed as an argument to datadog-init, you will receive full instrumentation.

Set up logs.

To enable logging, set the environment variable DD_LOGS_ENABLED=true. This allows serverless-init to read logs from stdout and stderr.

Datadog also recommends setting the environment variables DD_LOGS_INJECTION=true and DD_SOURCE=nodejs to enable advanced Datadog log parsing.

If you want multiline logs to be preserved in a single log message, Datadog recommends writing your logs in JSON format. For example, you can use a third-party logging library such as winston:

const tracer = require('dd-trace').init({
  logInjection: true,
});
const { createLogger, format, transports } = require('winston');

const logger = createLogger({
  level: 'info',
  exitOnError: false,
  format: format.json(),
  transports: [
    new transports.Console()
  ],
});

logger.info('Hello world!');

For more information, see Correlating Node.js Logs and Traces.
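To show why JSON output keeps a multiline message in one log entry, here is a minimal dependency-free sketch. The helper names toJsonLogLine and logError are hypothetical, and the error.kind, error.message, and error.stack field names are an assumption modeled on Datadog's standard attributes. A logging library such as winston remains the more practical choice.

```javascript
// Serialize one log event as a single JSON line so that multiline content
// (such as a stack trace) stays inside one log message.
function toJsonLogLine(level, message, error) {
  const entry = { level, message, timestamp: new Date().toISOString() };
  if (error) {
    // JSON.stringify escapes the newlines inside the stack trace.
    entry.error = {
      kind: error.name,
      message: error.message,
      stack: error.stack,
    };
  }
  return JSON.stringify(entry);
}

// Write to stdout, which serverless-init reads when DD_LOGS_ENABLED=true.
function logError(message, error) {
  process.stdout.write(toJsonLogLine('error', message, error) + '\n');
}
```

Because each event is one line of JSON, a stack trace that spans many lines is not split into separate log messages.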

Configure your application.

After the container is built and pushed to your registry, set the required environment variables for the Datadog Agent:

- DD_API_KEY: Your Datadog API key, used to send data to your Datadog account. For privacy and safety, configure this API key as a Google Cloud Secret.
- DD_SITE: Your Datadog site. For example, datadoghq.com.

For more environment variables, see the Environment variables section on this page.
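A missing variable is easy to overlook in a job that runs unattended, so a startup check can help. The following is a minimal sketch, not part of Datadog's tooling: the function name missingDatadogEnv is hypothetical, and the list of required names is taken from the two variables described above.

```javascript
// Return the names of required Datadog variables that are unset or blank.
// `env` defaults to the real process environment but can be replaced in tests.
function missingDatadogEnv(env = process.env) {
  const required = ['DD_API_KEY', 'DD_SITE'];
  return required.filter((name) => !env[name] || env[name].trim() === '');
}

// Example startup check: warn rather than exit, so the job itself still runs.
const missing = missingDatadogEnv();
if (missing.length > 0) {
  console.warn(`Missing Datadog environment variables: ${missing.join(', ')}`);
}
```

Warning instead of exiting is a design choice for this sketch; a stricter job could fail fast when telemetry is mandatory.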

Add a service label in Google Cloud.

In your Cloud Run service’s info panel, add a label with the following key and value:

| Key | Value |
|---|---|
| service | The name of your service. Matches the value provided as the DD_SERVICE environment variable. |

See Configure labels for services in the Cloud Run documentation for instructions.

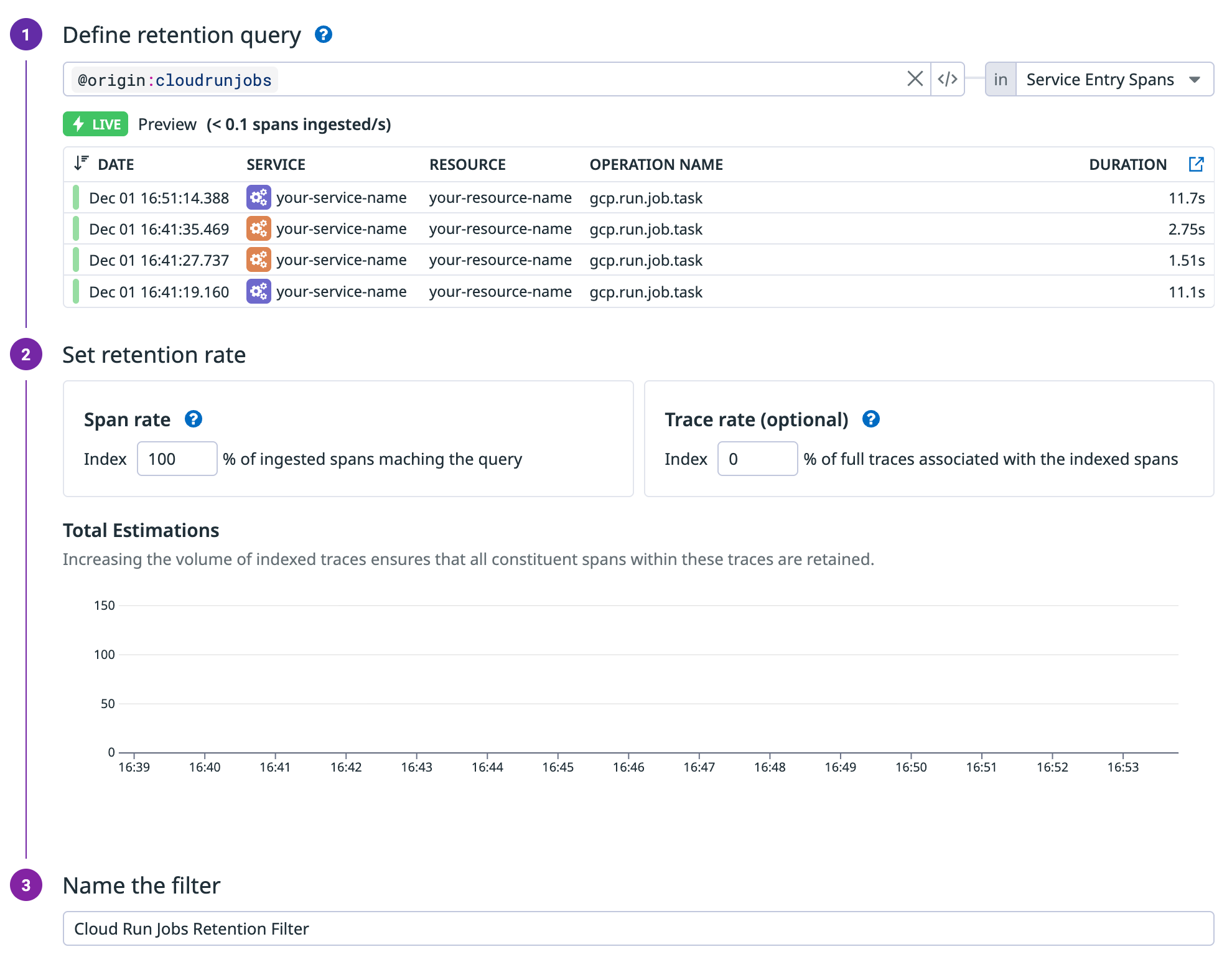

Set up a retention filter for Cloud Run Jobs traces.

Datadog relies on traces to display executions and tasks in the UI. To ensure traces are retained, create a retention filter with the query @origin:cloudrunjobs and set the span retention rate to 100%.

Send custom metrics.

To send custom metrics, view code examples. In serverless, only the distribution metric type is supported.
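As one possible shape for a distribution metric, the sketch below times a unit of work and submits the duration. It assumes a client object exposing distribution(name, value, tags), which is how the tracer.dogstatsd client in recent dd-trace versions is understood to behave; check the linked code examples for the supported API. The helper name timeTask and the metric and tag names are hypothetical.

```javascript
// Time an async task and report its duration as a distribution metric.
// `statsd` is assumed to expose distribution(name, value, tags).
async function timeTask(statsd, metricName, task) {
  const start = process.hrtime.bigint();
  try {
    return await task();
  } finally {
    // Convert nanoseconds to milliseconds.
    const elapsedMs = Number(process.hrtime.bigint() - start) / 1e6;
    statsd.distribution(metricName, elapsedMs, {
      // Illustrative tag; CLOUD_RUN_TASK_INDEX is set by Cloud Run for job tasks.
      task_index: process.env.CLOUD_RUN_TASK_INDEX || '0',
    });
  }
}
```

The metric is submitted in a finally block so that failed tasks are measured as well as successful ones.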

Environment variables

| Variable | Description |
|---|---|
| DD_API_KEY | Datadog API key. Required. |
| DD_SITE | Datadog site. Required. |
| DD_SERVICE | Datadog service name. Required. |
| DD_LOGS_ENABLED | When true, send logs (stdout and stderr) to Datadog. Defaults to false. |
| DD_LOGS_INJECTION | When true, enrich all logs with trace data for supported loggers. See Correlate Logs and Traces for more information. |
| DD_VERSION | See Unified Service Tagging. |
| DD_ENV | See Unified Service Tagging. |
| DD_SOURCE | Set the log source to enable a Log Pipeline for advanced parsing. To automatically apply language-specific parsing rules, set to nodejs, or use your custom pipeline. Defaults to cloudrun. |
| DD_TAGS | Add custom tags to your logs, metrics, and traces. Tags should be comma separated in key/value format (for example: key1:value1,key2:value2). |
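The DD_TAGS format described in the table can be produced from a plain object, for example in a deployment script. This is a small sketch with a hypothetical helper name, formatDdTags. Rejecting commas and empty keys is an assumption made here to keep the output unambiguous, not a documented Datadog rule.

```javascript
// Build a DD_TAGS string such as "key1:value1,key2:value2" from an object.
function formatDdTags(tags) {
  return Object.entries(tags)
    .map(([key, value]) => {
      const text = String(value);
      // A comma would be read as a tag separator, so refuse it up front.
      if (key === '' || key.includes(',') || text.includes(',')) {
        throw new Error(`Invalid tag: ${key}:${text}`);
      }
      return `${key}:${text}`;
    })
    .join(',');
}
```

For instance, formatDdTags({ team: 'data', tier: 'batch' }) yields team:data,tier:batch, which can be passed as the value of DD_TAGS.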

Distributed tracing with Pub/Sub

To get end-to-end distributed traces between Pub/Sub producers and Cloud Run jobs, configure your push subscriptions with the --push-no-wrapper and --push-no-wrapper-write-metadata flags. This moves message attributes from the JSON body to HTTP headers, allowing Datadog to extract producer trace context and create proper span links.

For more information, see Producer-aware tracing for Google Cloud Pub/Sub and Cloud Run and Payload unwrapping in the Google Cloud documentation.
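To illustrate what moving attributes into HTTP headers means for the receiving code, the sketch below collects message attributes from a request's headers. It assumes the header naming described in Google's payload unwrapping documentation, where metadata and attributes arrive with an x-goog-pubsub- prefix; verify the exact names there before relying on them. The function name is hypothetical, and the Datadog tracer performs its own extraction, so this is for illustration only.

```javascript
// Assumed header prefix and metadata names; confirm against Google's
// payload unwrapping documentation.
const PUBSUB_HEADER_PREFIX = 'x-goog-pubsub-';
const PUBSUB_METADATA_KEYS = new Set([
  'message-id',
  'subscription-name',
  'publish-time',
  'ordering-key',
]);

// Collect message attributes (anything under the prefix that is not
// built-in metadata) from a plain object of HTTP headers.
function pubsubAttributesFromHeaders(headers) {
  const attributes = {};
  for (const [name, value] of Object.entries(headers)) {
    const lower = name.toLowerCase();
    if (!lower.startsWith(PUBSUB_HEADER_PREFIX)) continue;
    const key = lower.slice(PUBSUB_HEADER_PREFIX.length);
    if (!PUBSUB_METADATA_KEYS.has(key)) attributes[key] = value;
  }
  return attributes;
}
```

Under these assumptions, trace context that the producer attached as message attributes would appear in the returned object without any parsing of the JSON body.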

Configure push subscriptions for full trace visibility

Create a new push subscription:

gcloud pubsub subscriptions create order-processor-sub \
  --topic=orders \
  --push-endpoint=https://order-processor-xyz.run.app/pubsub \
  --push-no-wrapper \
  --push-no-wrapper-write-metadata

Update an existing push subscription:

gcloud pubsub subscriptions update order-processor-sub \
  --push-no-wrapper \
  --push-no-wrapper-write-metadata

Configure Eventarc Pub/Sub triggers

Eventarc Pub/Sub triggers use push subscriptions as the underlying delivery mechanism. When you create an Eventarc trigger, GCP automatically creates a managed push subscription. However, Eventarc does not expose --push-no-wrapper-write-metadata as a trigger creation parameter, so you must manually update the auto-created subscription.

Create the Eventarc trigger:

gcloud eventarc triggers create order-processor-trigger \
  --destination-run-service=order-processor \
  --destination-run-region=us-central1 \
  --event-filters="type=google.cloud.pubsub.topic.v1.messagePublished" \
  --event-filters="topic=projects/my-project/topics/orders" \
  --location=us-central1

Find the auto-created subscription:

gcloud pubsub subscriptions list \
  --filter="topic:projects/my-project/topics/orders" \
  --format="table(name,pushConfig.pushEndpoint)"

Example output:

NAME                                                      PUSH_ENDPOINT
eventarc-us-central1-order-processor-trigger-abc-sub-def  https://order-processor-xyz.run.app

Update the subscription for trace propagation:

gcloud pubsub subscriptions update \
  eventarc-us-central1-order-processor-trigger-abc-sub-def \
  --push-no-wrapper \
  --push-no-wrapper-write-metadata

Troubleshooting

This integration depends on your runtime having a full SSL implementation. If you are using a slim image, you may need to add the following command to your Dockerfile to include certificates:

RUN apt-get update && apt-get install -y ca-certificates

To have your Cloud Run services appear in the software catalog, you must set the DD_SERVICE, DD_VERSION, and DD_ENV environment variables.
