Set up LLM Observability

Overview

To start sending data to LLM Observability, instrument your application either with the LLM Observability SDK for Python or by calling the LLM Observability API.

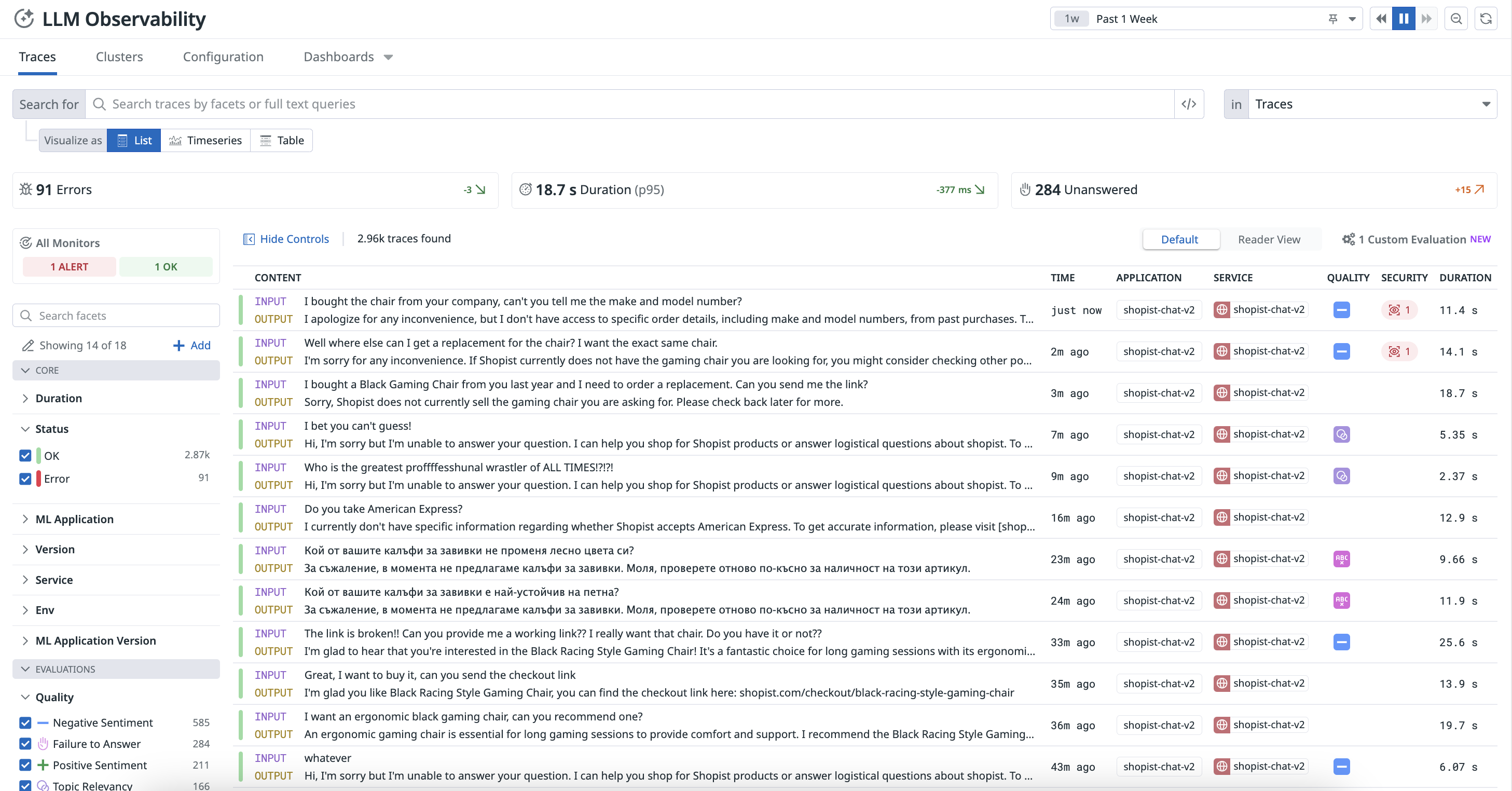

You can visualize the interactions and performance data of your LLM applications on the LLM Observability Traces page, where each request fulfilled by your application is represented as a trace.

For more information about traces, see Terms and Concepts, then decide which instrumentation option best suits your application’s needs.

Instrument an LLM application

Datadog provides auto-instrumentation to capture LLM calls for specific LLM provider libraries. However, manually instrumenting your LLM application using the LLM Observability SDK for Python enables access to additional LLM Observability features.

These instructions use the LLM Observability SDK for Python. If your application is running in a serverless environment, follow the serverless setup instructions. If your application is not written in Python, you can complete the steps below with API requests instead of SDK function calls.

To instrument an LLM application:

- Install the LLM Observability SDK for Python.

- Configure the SDK by providing the required environment variables in your application startup command, or programmatically in code. Ensure you have configured your Datadog API key, Datadog site, and machine learning (ML) app name.
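As a rough illustration of the in-code configuration path, the sketch below checks that the three required settings are present before enabling the SDK. It assumes the values are supplied through the `DD_API_KEY`, `DD_SITE`, and `DD_LLMOBS_ML_APP` environment variables and that the application runs in agentless mode (no local Datadog Agent). The helper names `missing_settings` and `enable_llm_observability` are hypothetical and not part of the SDK.

```python
import os

# Settings the configuration step calls for: API key, Datadog site, ML app name.
REQUIRED_SETTINGS = ("DD_API_KEY", "DD_SITE", "DD_LLMOBS_ML_APP")


def missing_settings(env):
    """Return the required setting names that are unset or empty in `env`."""
    return [name for name in REQUIRED_SETTINGS if not env.get(name)]


def enable_llm_observability(env=os.environ):
    """Enable LLM Observability in code (hypothetical helper, agentless mode assumed)."""
    missing = missing_settings(env)
    if missing:
        raise RuntimeError("Missing settings: " + ", ".join(missing))

    # Imported lazily so the check above works even before ddtrace is installed.
    from ddtrace.llmobs import LLMObs

    LLMObs.enable(
        ml_app=env["DD_LLMOBS_ML_APP"],
        api_key=env["DD_API_KEY"],
        site=env["DD_SITE"],
        agentless_enabled=True,  # assumption: no local Datadog Agent is running
    )
```

Calling `enable_llm_observability()` once at application startup, before any spans are created, would correspond to the in-code setup method. With the command-line method, the same values are passed as environment variables to `ddtrace-run` instead.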

Trace an LLM application

To trace an LLM application:

Create spans in your LLM application code to represent your application’s operations. For more information about spans, see Terms and Concepts.

You can nest spans to create more useful traces. For additional examples and detailed usage, see Trace an LLM Application and the SDK documentation.

Annotate your spans with input data, output data, metadata (such as temperature), metrics (such as input_tokens), and key-value tags (such as version:1.0.0).

Optionally, add advanced tracing features, such as user sessions.

Run your LLM application.

- If you used the command-line setup method, the command to run your application should use ddtrace-run, as described in those instructions.

- If you used the in-code setup method, run your application as you normally would.

You can access the resulting traces in the Traces tab on the LLM Observability Traces page and the resulting metrics in the out-of-the-box LLM Observability Overview dashboard.
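The annotation step above lists three kinds of extra data alongside inputs and outputs: metadata (such as temperature), metrics (such as input_tokens), and tags (such as version:1.0.0). A minimal sketch of how those values might be grouped into keyword arguments for `LLMObs.annotate()` follows. The helper `build_annotation`, its parameters, and the sample values are hypothetical; only the keyword names (`input_data`, `output_data`, `metadata`, `metrics`, `tags`) come from the SDK.

```python
def build_annotation(prompt, completion, temperature, input_tokens, output_tokens, version):
    """Group span details into keyword arguments for LLMObs.annotate() (hypothetical helper)."""
    return {
        "input_data": prompt,
        "output_data": completion,
        # Metadata: settings that describe the call, such as temperature.
        "metadata": {"temperature": temperature},
        # Metrics: numeric measurements, such as token counts.
        "metrics": {
            "input_tokens": input_tokens,
            "output_tokens": output_tokens,
            "total_tokens": input_tokens + output_tokens,
        },
        # Tags: key-value labels, such as the application version.
        "tags": {"version": version},
    }


annotation = build_annotation(
    prompt="Summarize this document.",
    completion="A short summary.",
    temperature=0.2,
    input_tokens=12,
    output_tokens=5,
    version="1.0.0",
)
# Inside an active span, this would be applied with: LLMObs.annotate(**annotation)
```

Keeping the grouping in one place makes it easier to annotate every span with the same keys, which in turn keeps filters and dashboards consistent.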

Creating spans

To create a span, the LLM Observability SDK provides two options: using a function decorator or using a context manager inline.

Using a function decorator is the preferred method. Using a context manager is more advanced and allows more fine-grained control over tracing.

- Decorators: Use ddtrace.llmobs.decorators.<SPAN_KIND>() as a decorator on the function you’d like to trace, replacing <SPAN_KIND> with the desired span kind.

- Inline: Use ddtrace.llmobs.LLMObs.<SPAN_KIND>() as a context manager to trace any inline code, replacing <SPAN_KIND> with the desired span kind.

The examples below create a workflow span.

Decorator:

    from ddtrace.llmobs.decorators import workflow

    @workflow
    def extract_data(document):
        ...  # LLM-powered workflow that extracts structured data from a document
        return

Inline:

    from ddtrace.llmobs import LLMObs

    def extract_data(document):
        with LLMObs.workflow(name="extract_data") as span:
            ...  # LLM-powered workflow that extracts structured data from a document
        return

Annotating spans

To add extra information to a span such as inputs, outputs, metadata, metrics, or tags, use the LLM Observability SDK’s LLMObs.annotate() method.

The examples below annotate the workflow span created in the example above:

Decorator:

    from ddtrace.llmobs import LLMObs
    from ddtrace.llmobs.decorators import workflow

    @workflow
    def extract_data(document: str, generate_summary: bool):
        extracted_data = ...  # user application logic
        LLMObs.annotate(
            input_data=document,
            output_data=extracted_data,
            metadata={"generate_summary": generate_summary},
            tags={"env": "dev"},
        )
        return extracted_data

Inline:

    from ddtrace.llmobs import LLMObs

    def extract_data(document: str, generate_summary: bool):
        with LLMObs.workflow(name="extract_data") as span:
            ...  # user application logic
            extracted_data = ...  # user application logic
            LLMObs.annotate(
                input_data=document,
                output_data=extracted_data,
                metadata={"generate_summary": generate_summary},
                tags={"env": "dev"},
            )
            return extracted_data

Nesting spans

Starting a new span before the current span is finished automatically traces a parent-child relationship between the two spans. The parent span represents the larger operation, while the child span represents a smaller nested sub-operation within it.

The examples below create a trace with two spans.

Decorator:

    from ddtrace.llmobs.decorators import task, workflow

    @workflow
    def extract_data(document):
        preprocess_document(document)
        ...  # performs data extraction on the document
        return

    @task
    def preprocess_document(document):
        ...  # preprocesses a document for data extraction
        return

Inline:

    from ddtrace.llmobs import LLMObs

    def extract_data():
        with LLMObs.workflow(name="extract_data") as workflow_span:
            with LLMObs.task(name="preprocess_document") as task_span:
                ...  # preprocesses a document for data extraction
            ...  # performs data extraction on the document
        return

For more information on alternative tracing methods and tracing features, see the SDK documentation.

Advanced tracing

Depending on the complexity of your LLM application, you can also:

- Track user sessions by specifying a session_id.

- Persist a span between contexts or scopes by manually starting and stopping it.

- Track multiple LLM applications when starting a new trace, which can be useful for differentiating between services or running multiple experiments.

- Submit custom evaluations, such as feedback from the users of your LLM application (for example, a rating from 1 to 5), with the SDK or the API.
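For the first item in the list above, tracking a user session comes down to passing the same `session_id` each time a trace for that session is started. The sketch below assumes the `session_id` keyword accepted by `LLMObs.workflow()`. The function names, the `chat_turn` span name, and the uppercase "reply" placeholder are hypothetical. The SDK entry point is passed in as a parameter so that the function can be exercised with a stub.

```python
import uuid


def new_session_id():
    """Generate an opaque identifier for one user session (hypothetical helper)."""
    return str(uuid.uuid4())


def handle_chat_turn(llmobs, session_id, user_message):
    """Trace one chat turn under `session_id`.

    `llmobs` is expected to be ddtrace.llmobs.LLMObs; it is a parameter only
    so that a stub can stand in for it in tests.
    """
    with llmobs.workflow(name="chat_turn", session_id=session_id):
        reply = user_message.upper()  # placeholder for real application logic
        llmobs.annotate(input_data=user_message, output_data=reply)
    return reply
```

In an application, this might be called as `handle_chat_turn(LLMObs, session_id, message)`, with one `session_id` reused for every turn of the same conversation.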

Permissions

By default, only users with the Datadog Read role can view LLM Observability. For more information, see the Permissions documentation.
