Ingestion Sampling with OpenTelemetry

Overview

OpenTelemetry SDKs and the OpenTelemetry Collector provide sampling capabilities, as ingesting 100% of traces is often unnecessary to gain visibility into the health of your applications. Configure sampling rates before sending traces to Datadog to ingest data that is most relevant to your business and observability goals, while controlling and managing overall costs.

This document demonstrates two primary methods for sending traces to Datadog with OpenTelemetry:

- Send traces to the OpenTelemetry Collector, and use the Datadog Exporter to forward them to Datadog.

- Send traces to the Datadog Agent OTLP ingest, which forwards them to Datadog.

Note: Datadog doesn’t support running the OpenTelemetry Collector and the Datadog Agent on the same host.

Using the OpenTelemetry Collector

With this method, the OpenTelemetry Collector receives traces from OpenTelemetry SDKs and exports them to Datadog using the Datadog Exporter. In this scenario, APM trace metrics are computed by the Datadog Connector:

Choose this method if you require the advanced processing capabilities of the OpenTelemetry Collector, such as tail-based sampling. To configure the Collector to receive traces, follow the instructions on OpenTelemetry Collector and Datadog Exporter.

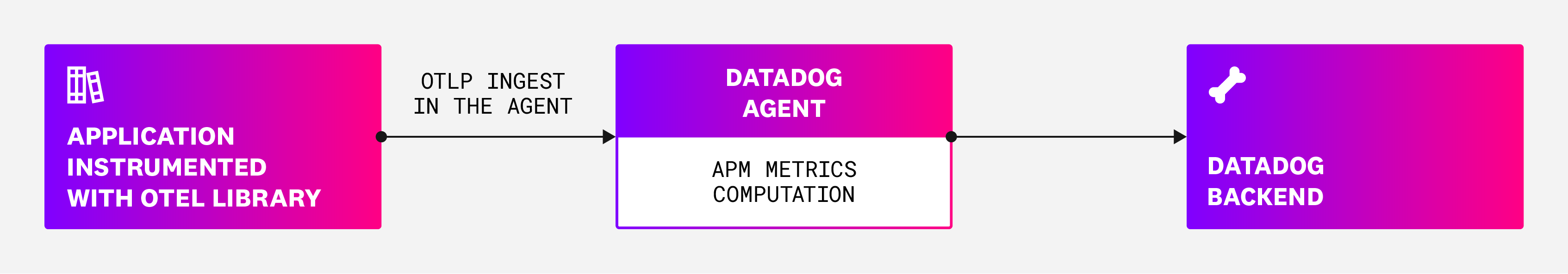

Using Datadog Agent OTLP ingestion

With this method, the Datadog Agent receives traces directly from OpenTelemetry SDKs using the OTLP protocol. This allows you to send traces to Datadog without running a separate OpenTelemetry Collector service. In this scenario, APM trace metrics are computed by the Agent.

Choose this method if you prefer a simpler setup without the need for a separate OpenTelemetry Collector service. To configure the Datadog Agent to receive traces using OTLP, follow the instructions on OTLP Ingestion by the Datadog Agent.

Reducing ingestion volume

With OpenTelemetry, you can configure sampling in the OpenTelemetry SDKs, in the OpenTelemetry Collector, and in the Datadog Agent:

- Head-based sampling in the OpenTelemetry SDKs

- Tail-based sampling in the OpenTelemetry Collector

- Probabilistic sampling in the Datadog Agent

Head-based sampling

At the SDK level, you can implement head-based sampling. This is when the sampling decision is made at the beginning of the trace. This type of sampling is particularly useful for high-throughput applications, where you have a clear understanding of which traces are most important to ingest and want to make sampling decisions early in the tracing process.

Configuring

To configure head-based sampling, use the TraceIdRatioBased or ParentBased samplers provided by the OpenTelemetry SDKs. These allow you to implement deterministic head-based sampling based on the trace_id at the SDK level.
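To illustrate why a sampling decision based on the trace_id is deterministic, here is a minimal Python sketch. It is not the OpenTelemetry SDK's code: the function names `ratio_sample` and `parent_based_sample` are hypothetical, and the sketch assumes the decision compares the low 64 bits of the trace ID against a threshold derived from the ratio. Real SDK implementations may differ in detail.

```python
from typing import Optional

# Assumption: the decision uses the low 64 bits of the 128-bit trace ID.
TRACE_ID_LIMIT = 1 << 64


def ratio_sample(trace_id: int, ratio: float) -> bool:
    """Keep the trace when its low 64 bits fall below ratio * 2**64."""
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be between 0 and 1")
    bound = round(ratio * TRACE_ID_LIMIT)
    return (trace_id & (TRACE_ID_LIMIT - 1)) < bound


def parent_based_sample(
    trace_id: int, ratio: float, parent_sampled: Optional[bool]
) -> bool:
    """Follow the parent's decision when one exists; otherwise decide by ratio."""
    if parent_sampled is not None:
        return parent_sampled
    return ratio_sample(trace_id, ratio)
```

Because the result depends only on the trace ID and the ratio, every service that sees the same trace ID with the same ratio reaches the same decision, which keeps traces complete.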

Considerations

Head-based sampling affects the computation of APM metrics. Only sampled traces are sent to the OpenTelemetry Collector or Datadog Agent, which perform metrics computation.

To approximate unsampled metrics from sampled metrics, use formulas and functions with the sampling rate configured in the SDK.
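The arithmetic behind this approximation can be sketched as follows. The helper `estimate_unsampled` is a hypothetical name, and the sketch assumes a single uniform sampling rate applied to all traces. It is only meaningful for count-like metrics such as hits or errors, not for latency distributions.

```python
def estimate_unsampled(sampled_value: float, sampling_rate: float) -> float:
    """Scale a count measured on sampled traces up to an estimate for all traffic.

    sampling_rate is the fraction of traces kept by the SDK, in the range (0, 1].
    """
    if not 0.0 < sampling_rate <= 1.0:
        raise ValueError("sampling_rate must be in the range (0, 1]")
    return sampled_value / sampling_rate


# 250 hits observed at a 25% sampling rate suggest about 1000 real hits.
estimated_hits = estimate_unsampled(250, 0.25)
```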

See the ingestion volume control guide for more about how trace sampling affects trace analytics monitors and metrics from spans.

Tail-based sampling

At the OpenTelemetry Collector level, you can do tail-based sampling, which allows you to define more advanced rules to maintain visibility over traces with errors or high latency.

Configuring

To configure tail-based sampling, use the Tail Sampling Processor or Probabilistic Sampling Processor to sample traces based on a set of rules at the collector level.
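As an illustration of the kind of rule a tail-based sampler evaluates once a trace is complete, consider this minimal Python sketch. The `Span` type and the `keep_trace` function are hypothetical and are not part of the Collector. In practice, the processor expresses such rules as configuration policies.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Span:
    trace_id: str
    duration_ms: float
    is_error: bool = False


def keep_trace(spans: List[Span], latency_threshold_ms: float) -> bool:
    """Decide on a complete trace: keep it if any span errored or was slow."""
    return any(
        span.is_error or span.duration_ms >= latency_threshold_ms
        for span in spans
    )
```

The decision needs every span of the trace, which is why it can only be made after the trace has finished rather than at its start.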

Considerations

A limitation of tail-based sampling is that all spans for a given trace must be received by the same collector instance for effective sampling decisions. If a trace is distributed across multiple collector instances, and tail-based sampling is used, some parts of that trace may not be sent to Datadog.
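One way to satisfy this constraint is to route spans by trace ID in front of the sampling collectors, so that every span of a trace reaches the same instance. The sketch below shows the idea only. `pick_collector` and the collector names are hypothetical, and the hashing scheme is an assumption rather than what any particular component uses.

```python
import hashlib
from typing import Sequence


def pick_collector(trace_id: str, collectors: Sequence[str]) -> str:
    """Map a trace ID to one collector so all of its spans land together."""
    if not collectors:
        raise ValueError("at least one collector is required")
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(collectors)
    return collectors[index]


# Hypothetical instance names, for illustration only.
collectors = ["collector-0", "collector-1", "collector-2"]
target = pick_collector("4bf92f3577b34da6a3ce929d0e0e4736", collectors)
```

Simple modulo routing like this reshuffles traces whenever the number of instances changes, so treat it as a sketch of the principle rather than a production design.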

To ensure that APM metrics are computed based on 100% of the applications’ traffic while using collector-level tail-based sampling, use the Datadog Connector.

The Datadog Connector is available starting with v0.83.0. If you are migrating from an older version, read Switch from Datadog Processor to Datadog Connector for OpenTelemetry APM Metrics.

See the ingestion volume control guide for information about the implications of setting up trace sampling on trace analytics monitors and metrics from spans.

Probabilistic sampling

When using Datadog Agent OTLP ingest, a probabilistic sampler is available starting with Agent v7.54.0.

Configuring

To configure probabilistic sampling, do one of the following:

- Set DD_APM_PROBABILISTIC_SAMPLER_ENABLED to true and DD_APM_PROBABILISTIC_SAMPLER_SAMPLING_PERCENTAGE to the percentage of traces you’d like to sample (between 0 and 100).

- Add the following YAML to your Agent’s configuration file:

    apm_config:
      # ...
      probabilistic_sampler:
        enabled: true
        sampling_percentage: 51 # In this example, 51% of traces are captured.
        hash_seed: 22 # A seed used for the hash algorithm. This must match other Agents and OTel Collectors.

If you use a mixed setup of Datadog tracing libraries and OTel SDKs:

- Probabilistic sampling will apply to spans originating from both Datadog and OTel tracing libraries.

- If you send spans both to the Datadog Agent and OTel collector instances, set the same seed between Datadog Agent (DD_APM_PROBABILISTIC_SAMPLER_HASH_SEED) and OTel collector (hash_seed) to ensure consistent sampling.
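To show why a shared seed matters, here is a minimal Python sketch of seeded, percentage-based sampling. It is not the Agent's or the Collector's algorithm: `probabilistic_keep` is a hypothetical function, and the choice of a keyed BLAKE2b hash is an assumption made only for illustration.

```python
import hashlib


def probabilistic_keep(
    trace_id: int, sampling_percentage: float, hash_seed: int
) -> bool:
    """Keep a trace when its seeded hash falls inside the sampled percentage."""
    if not 0 <= sampling_percentage <= 100:
        raise ValueError("sampling_percentage must be between 0 and 100")
    key = hash_seed.to_bytes(4, "big")  # seed must fit in 32 bits
    digest = hashlib.blake2b(
        trace_id.to_bytes(16, "big"), key=key, digest_size=8
    ).digest()
    bucket = int.from_bytes(digest, "big") % 10_000  # 0..9999
    return bucket < sampling_percentage * 100
```

Two samplers that use the same seed and percentage reach the same decision for a given trace ID, so a trace is either kept everywhere or dropped everywhere. With different seeds, the decisions are unrelated and traces can end up partially sampled.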

DD_OTLP_CONFIG_TRACES_PROBABILISTIC_SAMPLER_SAMPLING_PERCENTAGE is deprecated and has been replaced by DD_APM_PROBABILISTIC_SAMPLER_SAMPLING_PERCENTAGE.

Considerations

- The probabilistic sampler ignores the sampling priority that tracing libraries set on spans. As a result, probabilistic sampling is incompatible with head-based sampling: traces kept by head-based sampling might still be dropped by the probabilistic sampler.

- Spans not captured by the probabilistic sampler may still be captured by the Datadog Agent’s error and rare samplers.

- For consistent sampling, all tracers must support 128-bit trace IDs.

Monitoring ingested volumes in Datadog

Use the APM Estimated Usage dashboard and the datadog.estimated_usage.apm.ingested_bytes metric to get visibility into your ingested volumes over a specific time period. Filter the dashboard to specific environments and services to see which services are responsible for the largest shares of the ingested volume.

If the ingestion volume is higher than expected, consider adjusting your sampling rates.
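As a rough starting point for such an adjustment, the sketch below scales the current sampling rate by the ratio of a target volume to the observed volume. The function name `adjusted_sampling_rate` is hypothetical, and the calculation assumes ingested volume grows linearly with the sampling rate, which is only an approximation when error, rare, or tail-based sampling also contributes.

```python
def adjusted_sampling_rate(
    current_rate: float, observed_bytes: float, target_bytes: float
) -> float:
    """Suggest a new sampling rate, capped at 1.0, to approach a target volume."""
    if not 0.0 < current_rate <= 1.0:
        raise ValueError("current_rate must be in the range (0, 1]")
    if observed_bytes <= 0 or target_bytes <= 0:
        raise ValueError("volumes must be positive")
    return min(1.0, current_rate * target_bytes / observed_bytes)


# Ingesting 200 GB at a 50% rate with a 100 GB target suggests a 25% rate.
new_rate = adjusted_sampling_rate(0.5, 200e9, 100e9)
```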

Unified service tagging

When sending data from OpenTelemetry to Datadog, it’s important to tie trace data together with unified service tagging.

Setting unified service tags ensures that traces are accurately linked to their corresponding services and environments. This prevents hosts from being misattributed, which can lead to unexpected increases in usage and costs.

For more information, see Unified Service Tagging.
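With OpenTelemetry SDKs, these tags are typically supplied as resource attributes. The Python sketch below builds a value for the standard OTEL_RESOURCE_ATTRIBUTES environment variable. The helper name and the example values are hypothetical, and the sketch assumes that service.name, deployment.environment, and service.version are the attributes mapped to the service, env, and version tags. Confirm the exact attribute names against the Unified Service Tagging documentation.

```python
def resource_attributes(service: str, env: str, version: str) -> str:
    """Build a comma-separated key=value string for OTEL_RESOURCE_ATTRIBUTES."""
    attrs = {
        "service.name": service,
        "deployment.environment": env,
        "service.version": version,
    }
    return ",".join(f"{key}={value}" for key, value in attrs.items())


# Hypothetical service values, for illustration only.
value = resource_attributes("checkout", "prod", "1.2.3")
```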
