Sensitive Data Scanner Processor

Overview

The Sensitive Data Scanner processor scans logs to detect and redact or hash sensitive information such as PII, PCI, and custom sensitive data. You can pick from Datadog’s library of predefined rules, or enter custom regex rules to scan for sensitive data.

You can set up the pipeline and processor in the UI, API, or Terraform.

Set up the processor in the UI

To set up the processor:

- Define a filter query. Only logs that match the specified filter query are scanned and processed. All logs are sent to the next step in the pipeline, regardless of whether they match the filter query. See Search Syntax for more information.

- Click Add Scanning Rule.

- Add either a rule from the library or a custom rule. To add a library rule:

- In the dropdown menu, select the library rule you want to use.

- Recommended keywords are automatically added based on the library rule selected. After the scanning rule has been added, you can add additional keywords or remove recommended keywords.

- In the Define rule target and action section, select if you want to scan the Entire Event, Specific Attributes, or Exclude Attributes in the dropdown menu.

- If you are scanning the entire event, you can optionally exclude specific attributes from getting scanned. Use path notation (outer_key.inner_key) to access nested keys. For specified attributes with nested data, all nested data is excluded.

- If you are scanning specific attributes, specify which attributes you want to scan. Use path notation (outer_key.inner_key) to access nested keys. For specified attributes with nested data, all nested data is scanned.

- For Define actions on match, select the action you want to take for the matched information. Note: Redaction, partial redaction, and hashing are all irreversible actions.

- Redact: Replaces all matching values with the text you specify in the Replacement text field.

- Partially Redact: Replaces a specified portion of all matched data. In the Redact section, specify the number of characters you want to redact and which part of the matched data to redact.

- Hash: Replaces all matched data with a unique identifier. The UTF-8 bytes of the match are hashed with the 64-bit fingerprint of FarmHash.

- Optionally, click Add Field to add tags you want to associate with the matched events.

- Add a name for the scanning rule.

- Optionally, add a description for the rule.

- Click Save.
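
The filter and action behavior described in these steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Datadog's implementation: the function names, the `matches_filter` callback, the simplified 16-digit pattern, and the `*` mask character are all hypothetical choices made for the example.

```python
import re

# Hypothetical stand-in for a scanning rule: any run of 16 digits.
CARD_PATTERN = re.compile(r"\d{16}")

def redact(text, replacement):
    """Redact: replace every match with the replacement text."""
    return CARD_PATTERN.sub(replacement, text)

def partially_redact(text, characters, from_start=True):
    """Partially redact: mask `characters` characters at one end of each match."""
    def mask(match):
        value = match.group(0)
        n = min(characters, len(value))
        if from_start:
            return "*" * n + value[n:]
        return value[: len(value) - n] + "*" * n
    return CARD_PATTERN.sub(mask, text)

def process(logs, matches_filter, action):
    """Apply the action only to logs that match the filter; pass every log on."""
    return [action(log) if matches_filter(log) else log for log in logs]

logs = [
    "service:payments card 4111111111111111 charged",
    "service:web card 4111111111111111 viewed",
]
out = process(
    logs,
    matches_filter=lambda log: log.startswith("service:payments"),
    action=lambda log: partially_redact(log, characters=12),
)
for line in out:
    print(line)
```

Both logs reach the next step, but only the first one matches the filter, so only its card number is masked. Because the original value is overwritten in place, none of these actions can be undone downstream.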

Add additional keywords

After adding scanning rules from the library, you can edit each rule separately and add additional keywords to the keyword dictionary.

- Navigate to your pipeline.

- In the Sensitive Data Scanner processor with the rule you want to edit, click Manage Scanning Rules.

- Toggle Use recommended keywords if you want the rule to use them. Otherwise, add your own keywords to the Create keyword dictionary field. You can also require that these keywords be within a specified number of characters of a match. By default, keywords must be within 30 characters before a matched value.

- Click Update.
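
As a rough sketch of what keyword proximity means, the following Python keeps a regex match only when a keyword appears in the characters immediately before it. It is not Datadog's matching logic: the function name is hypothetical, and the case-insensitive comparison and the requirement that the whole keyword fall inside the window are assumptions made for the example.

```python
import re

def matches_with_keywords(text, pattern, keywords, proximity=30):
    """Return matches that have a keyword within `proximity` characters before them."""
    lowered = [keyword.lower() for keyword in keywords]
    hits = []
    for match in re.finditer(pattern, text):
        # The window is the `proximity` characters that precede the match.
        window = text[max(0, match.start() - proximity): match.start()].lower()
        if any(keyword in window for keyword in lowered):
            hits.append(match.group(0))
    return hits

keywords = ["visa", "credit", "card"]
near = "visa card: 4111111111111111"
far = "visa was mentioned much earlier in this long log line, then 4111111111111111"

print(matches_with_keywords(near, r"\d{16}", keywords))
print(matches_with_keywords(far, r"\d{16}", keywords))
print(matches_with_keywords(far, r"\d{16}", keywords, proximity=60))
```

In the second string the keyword sits more than 30 characters before the number, so the default window misses it. Widening the window to 60 characters picks it up again.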

To add a custom rule:

- In the Define match conditions section, specify the regex pattern to use for matching against events in the Define the regex field. See Writing Effective Grok Parsing Rules with Regular Expressions for more information.

Sensitive Data Scanner supports Perl Compatible Regular Expressions (PCRE), but the following patterns are not supported:

- Backreferences and capturing sub-expressions (lookarounds)

- Arbitrary zero-width assertions

- Subroutine references and recursive patterns

- Conditional patterns

- Backtracking control verbs

- The \C “single-byte” directive (which breaks UTF-8 sequences)

- The \R newline match

- The \K start of match reset directive

- Callouts and embedded code

- Atomic grouping and possessive quantifiers

- Enter sample data in the Add sample data field to verify that your regex pattern is valid.

- For Create keyword dictionary, add keywords to refine detection accuracy when matching regex conditions. For example, if you are scanning for a sixteen-digit Visa credit card number, you can add keywords like visa, credit, and card. You can also require that these keywords be within a specified number of characters of a match. By default, keywords must be within 30 characters before a matched value.

- In the Define rule target and action section, select if you want to scan the Entire Event, Specific Attributes, or Exclude Attributes in the dropdown menu.

- If you are scanning the entire event, you can optionally exclude specific attributes from getting scanned. Use path notation (outer_key.inner_key) to access nested keys. For specified attributes with nested data, all nested data is excluded.

- If you are scanning specific attributes, specify which attributes you want to scan. Use path notation (outer_key.inner_key) to access nested keys. For specified attributes with nested data, all nested data is scanned.

- For Define actions on match, select the action you want to take for the matched information. Note: Redaction, partial redaction, and hashing are all irreversible actions.

- Redact: Replaces all matching values with the text you specify in the Replacement text field.

- Partially Redact: Replaces a specified portion of all matched data. In the Redact section, specify the number of characters you want to redact and which part of the matched data to redact.

- Hash: Replaces all matched data with a unique identifier. The UTF-8 bytes of the match are hashed with the 64-bit fingerprint of FarmHash.

- Optionally, click Add Field to add tags you want to associate with the matched events.

- Add a name for the scanning rule.

- Optionally, add a description for the rule.

- Click Add Rule.
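
Before pasting a custom pattern into the Define the regex field, it can help to check it for constructs from the unsupported list. The sketch below is a heuristic substring check written for this example, not a PCRE parser and not how Datadog validates patterns. It covers only part of the list and can report false positives, for instance when one of these character sequences appears inside a character class. The names `UNSUPPORTED_MARKERS` and `unsupported_constructs` are hypothetical.

```python
import re

# Heuristic markers for a subset of the unsupported constructs listed above.
UNSUPPORTED_MARKERS = {
    "lookaround": re.compile(r"\(\?<?[=!]"),
    "backreference": re.compile(r"\\[1-9]|\\k<|\(\?P="),
    "atomic group": re.compile(r"\(\?>"),
    "conditional pattern": re.compile(r"\(\?\("),
    "backtracking control verb": re.compile(r"\(\*[A-Z]"),
    "\\C, \\R, or \\K directive": re.compile(r"\\[CRK]"),
}

def unsupported_constructs(pattern):
    """Return the names of flagged constructs found in `pattern`."""
    # Remove escaped backslashes first so that a literal backslash followed
    # by a letter in the regex source is not mistaken for a directive.
    stripped = pattern.replace("\\\\", "")
    return [name for name, marker in UNSUPPORTED_MARKERS.items() if marker.search(stripped)]

print(unsupported_constructs(r"(?<=card )\d{16}"))
print(unsupported_constructs(r"\b\d{4}(?:[ -]?\d{4}){3}\b"))
```

The first pattern relies on a lookbehind to require the word "card" and is flagged. A keyword dictionary with a proximity limit expresses the same intent without a lookaround. The Add sample data field remains the authoritative check, because Python's `re` module is a different engine from the one the processor uses.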

Path notation example

For the following message structure:

{
  "outer_key": {
    "inner_key": "inner_value",
    "a": {
      "double_inner_key": "double_inner_value",
      "b": "b value"
    },
    "c": "c value"
  },
  "d": "d value"
}

- Use outer_key.inner_key to refer to the key with the value inner_value.

- Use outer_key.a.double_inner_key to refer to the key with the value double_inner_value.
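
To make the notation concrete, here is a minimal Python sketch that resolves dot-separated paths against the message structure above and lists which leaf attributes would be scanned for a given target. It is illustrative only: the helper names are hypothetical, and it assumes that keys do not themselves contain dots. In that structure, double_inner_key sits under the key a, so its full path is outer_key.a.double_inner_key.

```python
event = {
    "outer_key": {
        "inner_key": "inner_value",
        "a": {"double_inner_key": "double_inner_value", "b": "b value"},
        "c": "c value",
    },
    "d": "d value",
}

def get_path(data, path):
    """Resolve dot-separated path notation against nested dictionaries."""
    value = data
    for key in path.split("."):
        value = value[key]
    return value

def leaf_paths(value, prefix=""):
    """Yield the path of every non-dictionary value nested under `value`."""
    if isinstance(value, dict):
        for key, child in value.items():
            yield from leaf_paths(child, f"{prefix}.{key}" if prefix else key)
    else:
        yield prefix

def is_under(path, attribute):
    """True if `path` is the attribute itself or nested anywhere below it."""
    return path == attribute or path.startswith(attribute + ".")

def scanned_paths(data, include=None, exclude=()):
    """Leaf paths scanned: specific attributes (include) or everything minus exclusions."""
    paths = list(leaf_paths(data))
    if include is not None:
        paths = [p for p in paths if any(is_under(p, a) for a in include)]
    return [p for p in paths if not any(is_under(p, a) for a in exclude)]

print(get_path(event, "outer_key.inner_key"))
print(get_path(event, "outer_key.a.double_inner_key"))
print(scanned_paths(event, include=["outer_key.a"]))
print(scanned_paths(event, exclude=["outer_key"]))
```

Naming outer_key.a as a specific attribute selects both values nested under it, and excluding outer_key from an entire-event scan leaves only d. This mirrors the rule that nested data follows the attribute you specify.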

Set up the processor using Terraform

You can use the Datadog Observability Pipeline Terraform resource to set up a pipeline with the Sensitive Data Scanner processor. To add a rule to the Sensitive Data Scanner processor using Terraform:

Use the Datadog Sensitive Data Scanner Standard Pattern data source to retrieve the rule ID of the Sensitive Data Scanner library rule.

data "datadog_sensitive_data_scanner_standard_pattern" "<RULE_IDENTIFIER>" { filter = "<RULE_NAME>" }Replace the placeholders:

<RULE_IDENTIFIER>with a name to use when you later set up the Sensitive Data Scanner processor in the Observability Pipeline resource.<RULE_NAME>with the exact name of the rule. See Library Rules for the full list of rules.

For example, if you want to use the AWS Access Key ID Scanner, configure the data source as follows:

See the full configuration example on how to add data sources for multiple rules.data "datadog_sensitive_data_scanner_standard_pattern" "aws_access_key" { filter = "AWS Access Key ID Scanner" }Add a rule block in your Observability Pipeline resource for the library rule.

... sensitive_data_scanner { rule { name = "<YOUR_RULE_NAME>" tags = [] on_match { redact { replace = "***" } } pattern { library { id = data.datadog_sensitive_data_scanner_standard_pattern.<RULE_IDENTIFIER>.id use_recommended_keywords = true } } scope { all = true } } }Replace the placeholders:

<YOUR_RULE_NAME>with a name for the rule. This name is shown in the Pipelines UI.<RULE_IDENTIFIER>with the rule identifier you used in the data source in step 1.

For example, if you use the AWS Access Key ID Scanner data source from step 1, configure the rule block as follows:

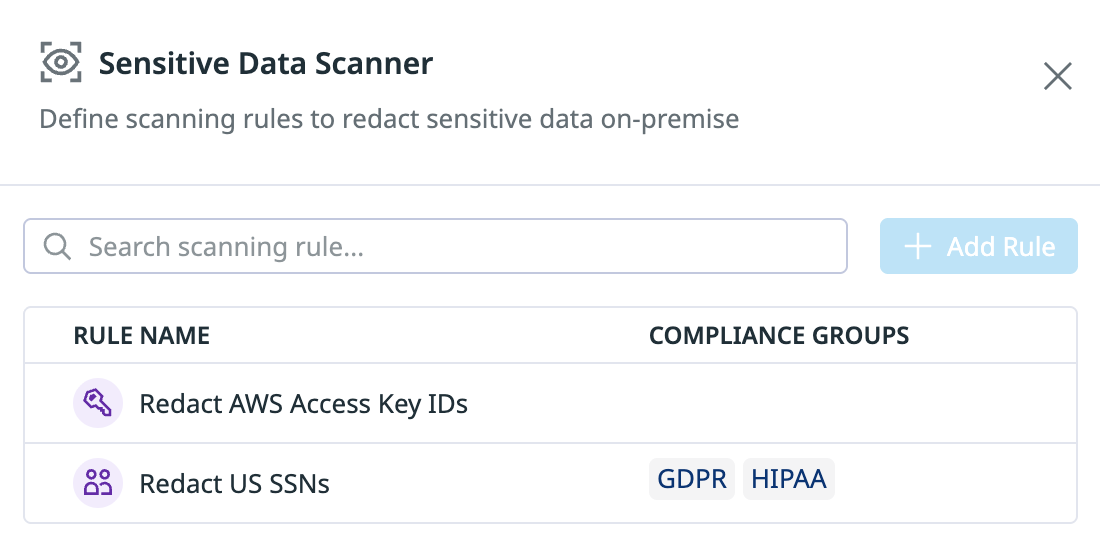

... sensitive_data_scanner { rule { name = "Redact AWS Access Key IDs" tags = [] on_match { redact { replace = "***" } } pattern { library { id = data.datadog_sensitive_data_scanner_standard_pattern.aws_access_key.id use_recommended_keywords = true } } scope { all = true } } }See the full configuration example on how to add multiple rules.

Repeat steps 1 and 2 for all library rules you want to add.

Full configuration example

If you want to use the Sensitive Data Scanner processor to scan for AWS Access Key IDs and US Social Security Numbers, and redact them by replacing them with the string ***:

- Use the Datadog Sensitive Data Scanner Standard Pattern data source to retrieve the rule IDs for the AWS Access Key ID Scanner and the US Social Security Number Scanner.

- In your Datadog Observability Pipeline resource’s Sensitive Data Scanner processor, use the Sensitive Data Scanner rules defined in the data sources.

data "datadog_sensitive_data_scanner_standard_pattern" "aws_access_key" {
  filter = "AWS Access Key ID Scanner"
}

data "datadog_sensitive_data_scanner_standard_pattern" "us_ssn" {
  filter = "US Social Security Number Scanner"
}

resource "datadog_observability_pipeline" "sensitive_data_pipeline" {
  name = "Sensitive Data Pipeline"

  config {
    source {
      id = "source-0"
      datadog_agent {}
    }

    processor_group {
      display_name = "Processors"
      enabled      = true
      id           = "group-0"
      include      = "*"
      inputs       = ["source-0"]

      processor {
        display_name = "Sensitive Data Scanner"
        enabled      = true
        id           = "processor-sds-0"
        include      = "*"

        sensitive_data_scanner {
          rule {
            name = "Redact AWS Access Key IDs"
            tags = []
            on_match {
              redact {
                replace = "***"
              }
            }
            pattern {
              library {
                id                       = data.datadog_sensitive_data_scanner_standard_pattern.aws_access_key.id
                use_recommended_keywords = true
              }
            }
            scope {
              all = true
            }
          }

          rule {
            name = "Redact US SSNs"
            tags = []
            on_match {
              redact {
                replace = "***"
              }
            }
            pattern {
              library {
                id                       = data.datadog_sensitive_data_scanner_standard_pattern.us_ssn.id
                use_recommended_keywords = true
              }
            }
            scope {
              all = true
            }
          }
        }
      }
    }

    destination {
      id     = "destination-0"
      inputs = ["group-0"]
      datadog_logs {}
    }
  }
}