JMeter

Integration version 1.0.0

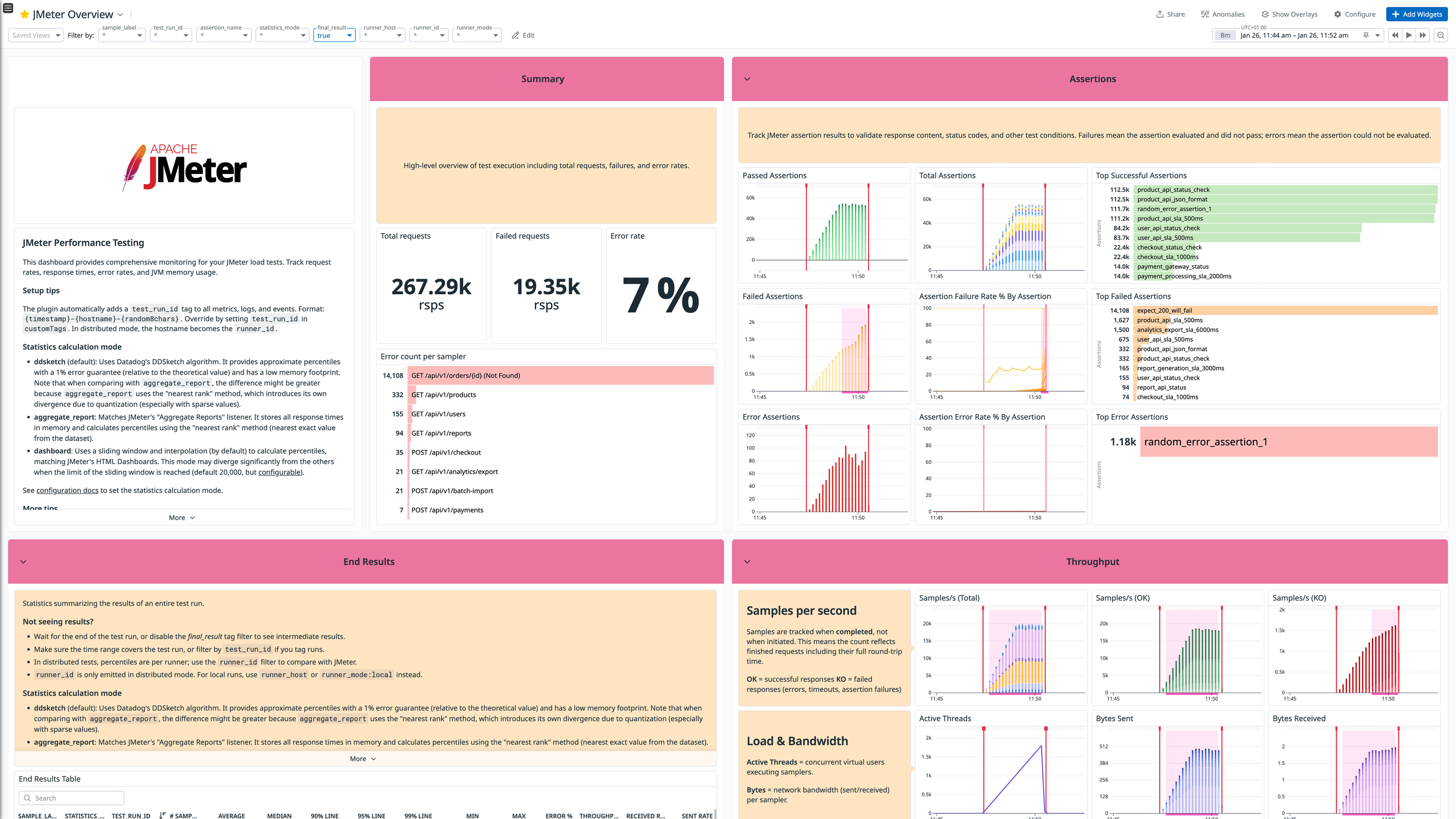

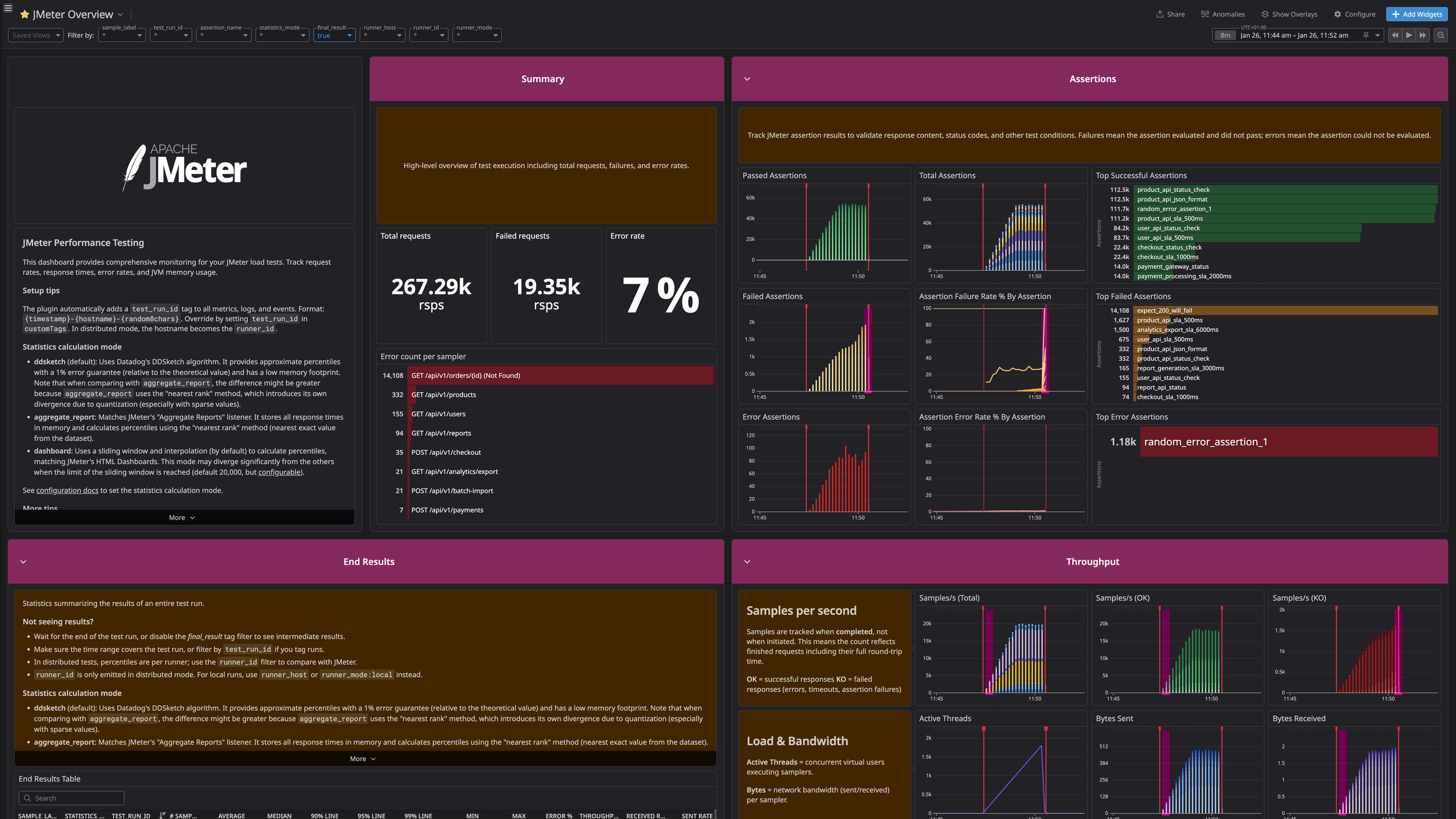

JMeter Overview Dashboard - Monitor your load test results in real-time

JMeter Overview Dashboard (Dark Theme) - Monitor your load test results in real-time

Overview

Datadog Backend Listener for Apache JMeter is a JMeter plugin used to send test results to the Datadog platform. It includes the following features:

- Real-time reporting of test metrics (latency, bytes sent and more). See the Metrics section.

- Real-time reporting of test results as Datadog log events.

- Ability to include sub results.

Setup

Installation

The Datadog Backend Listener plugin is not bundled with JMeter and must be installed separately. See the latest release and up-to-date installation instructions on its GitHub repository.

You can install the plugin either manually or with JMeter Plugins Manager.

No Datadog Agent is necessary.

Manual installation

- Download the Datadog plugin JAR file from the release page.

- Place the JAR in the `lib/ext` directory within your JMeter installation.

- Launch JMeter (or quit and re-open the application).

JMeter Plugins Manager

- If not already configured, download the JMeter Plugins Manager JAR.

- Once you’ve completed the download, place the `.jar` in the `lib/ext` directory within your JMeter installation.

- Launch JMeter (or quit and re-open the application).

- Go to `Options > Plugins Manager > Available Plugins`.

- Search for “Datadog Backend Listener”.

- Click the checkbox next to the Datadog Backend Listener plugin.

- Click “Apply Changes and Restart JMeter”.

Configuration

To start reporting metrics to Datadog:

- Right-click on the thread group or the test plan for which you want to send metrics to Datadog.

- Go to `Add > Listener > Backend Listener`.

- Modify the `Backend Listener Implementation` and select `org.datadog.jmeter.plugins.DatadogBackendClient` from the drop-down.

- Set the `apiKey` variable to your Datadog API key.

- Run your test and validate that metrics have appeared in Datadog.

The plugin has the following configuration options:

| Name | Required | Default value | Description |

|---|---|---|---|

| apiKey | true | NA | Your Datadog API key. |

| datadogUrl | false | https://api.datadoghq.com/api/ | You can configure a different endpoint, for instance https://api.datadoghq.eu/api/ if your Datadog instance is in the EU. |

| logIntakeUrl | false | https://http-intake.logs.datadoghq.com/v1/input/ | You can configure a different endpoint, for instance https://http-intake.logs.datadoghq.eu/v1/input/ if your Datadog instance is in the EU. |

| metricsMaxBatchSize | false | 200 | Metrics are submitted every 10 seconds in batches of size metricsMaxBatchSize. |

| logsBatchSize | false | 500 | Logs are submitted in batches of size logsBatchSize as soon as this size is reached. |

| sendResultsAsLogs | false | true | By default, individual test results are reported as log events. Set to false to disable log reporting. |

| includeSubresults | false | false | Subresults occur, for example, when an individual HTTP request follows redirects. By default, subresults are ignored. |

| excludeLogsResponseCodeRegex | false | "" | When sendResultsAsLogs is enabled, all results are submitted to Datadog as logs by default. This option lets you exclude results whose response code matches a given regex. For example, set this option to [123][0-5][0-9] to submit only errors. |

| samplersRegex | false | "" | Regex to filter which samplers to include. By default all samplers are included. |

| customTags | false | "" | Comma-separated list of tags to add to every metric. |

| statisticsCalculationMode | false | ddsketch | Algorithm for percentile calculation: ddsketch (default), aggregate_report (matches JMeter Aggregate Reports), or dashboard (matches JMeter HTML Dashboards). |
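To see which response codes a pattern such as `[123][0-5][0-9]` would exclude, the check can be approximated outside JMeter. The following is a minimal Python sketch that assumes the plugin requires the whole response code to match the pattern; this page does not state whether matching is full or partial, and `is_excluded` is a hypothetical helper, not part of the plugin.

```python
import re

# Pattern from the excludeLogsResponseCodeRegex example in the table above.
EXCLUDE_PATTERN = re.compile(r"[123][0-5][0-9]")


def is_excluded(response_code: str) -> bool:
    """Return True if a result with this response code would be dropped.

    Assumption: the whole code must match the pattern (full match).
    """
    return EXCLUDE_PATTERN.fullmatch(response_code) is not None


if __name__ == "__main__":
    for code in ["200", "302", "404", "500"]:
        print(code, "excluded" if is_excluded(code) else "sent as log")
```

Under this assumption, codes such as 200 and 302 are excluded while 404 and 500 are still sent. Codes outside the pattern's ranges, such as 260, would also be sent.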

Statistics Calculation Modes

- ddsketch (default): Uses Datadog’s DDSketch algorithm. It provides approximate percentiles with a 1% error guarantee (relative to the theoretical value) and has a low memory footprint. Note that when comparing with `aggregate_report`, the difference might be greater because `aggregate_report` uses the “nearest rank” method, which introduces its own divergence due to quantization (especially with sparse values).

- aggregate_report: Matches JMeter’s “Aggregate Reports” listener. It stores all response times in memory and calculates percentiles using the “nearest rank” method (nearest exact value from the dataset).

- dashboard: Uses a sliding window and interpolation (by default) to calculate percentiles, matching JMeter’s HTML Dashboards. This mode may diverge significantly from the others when the limit of the sliding window is reached (default 20,000, but configurable).
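The divergence between modes comes largely from how a percentile is read off the sorted samples. The sketch below contrasts the nearest-rank method with linear interpolation on a small, sparse dataset. It is illustrative only: the function names are hypothetical, and the exact formulas used by JMeter and by the plugin may differ in detail.

```python
import math


def nearest_rank(samples, pct):
    """Percentile as an exact value from the dataset (nearest-rank method)."""
    ordered = sorted(samples)
    # Rank is 1-based: the smallest value covering pct percent of the samples.
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def interpolated(samples, pct):
    """Percentile by linear interpolation between the two closest ranks."""
    ordered = sorted(samples)
    position = pct / 100 * (len(ordered) - 1)
    lower = math.floor(position)
    upper = min(lower + 1, len(ordered) - 1)
    fraction = position - lower
    return ordered[lower] + fraction * (ordered[upper] - ordered[lower])


if __name__ == "__main__":
    response_times_ms = [100, 110, 120, 130, 900]
    print(nearest_rank(response_times_ms, 90))
    print(interpolated(response_times_ms, 90))
```

With five samples and one outlier, nearest rank returns 900 for the 90th percentile while interpolation returns about 592, which is the kind of gap sparse data can produce.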

Test Run Tagging

The plugin automatically adds a test_run_id tag to all metrics, logs, and events (Test Started/Ended) to help you isolate and filter specific test executions in Datadog.

- Format: `{timestamp}-{hostname}-{random8chars}`

- Example: `2026-01-24T14:30:25Z-myhost-a1b2c3d4`

- In distributed mode, the `hostname` prefix becomes the `runner_id` (the JMeter distributed prefix) when present.

You can override this by providing your own test_run_id in the customTags configuration (e.g., test_run_id:my-custom-run-id). Any additional tags you add to customTags will also be included alongside the test_run_id.
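When overriding the tag, one option is to generate an identifier in the same shape as the default. The following Python sketch builds such a value and the corresponding `customTags` string. `build_test_run_id` and `custom_tags_value` are hypothetical helpers, the `env:staging` tag is a made-up example, and the timestamp layout is inferred from the example above rather than from the plugin's source.

```python
import secrets
import socket
from datetime import datetime, timezone


def build_test_run_id(now=None, hostname=None, suffix=None):
    """Build an ID shaped like {timestamp}-{hostname}-{random8chars}."""
    now = now or datetime.now(timezone.utc)
    hostname = hostname or socket.gethostname()
    # token_hex(4) yields 8 hexadecimal characters.
    suffix = suffix or secrets.token_hex(4)
    timestamp = now.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return f"{timestamp}-{hostname}-{suffix}"


def custom_tags_value(test_run_id, extra_tags=()):
    """Format a comma-separated customTags value that sets test_run_id."""
    return ",".join([f"test_run_id:{test_run_id}", *extra_tags])


if __name__ == "__main__":
    run_id = build_test_run_id()
    # "env:staging" is a hypothetical additional tag.
    print(custom_tags_value(run_id, ["env:staging"]))
```

The printed string can be pasted into the `customTags` field of the Backend Listener.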

Assertion Failures vs Errors

JMeter distinguishes between assertion failures and assertion errors. A failure means the assertion evaluated and did not pass. An error means the assertion could not be evaluated (for example, a null response or a script error). These map to jmeter.assertions.failed and jmeter.assertions.error.

Getting Final Results in Datadog Notebooks

To match JMeter’s Aggregate Reports in a Datadog notebook, set statisticsCalculationMode=aggregate_report and query the jmeter.final_result.* metrics. These are emitted once at test end, so they are ideal for a single, authoritative snapshot.

Note: Since these metrics are emitted only once at the end of the test, ensure your selected time interval includes the test completion time.

Example queries (adjust tags as needed):

avg:jmeter.final_result.response_time.p95{sample_label:total,test_run_id:YOUR_RUN_ID}

avg:jmeter.final_result.responses.error_percent{sample_label:total,test_run_id:YOUR_RUN_ID}

avg:jmeter.final_result.throughput.rps{sample_label:total,test_run_id:YOUR_RUN_ID}
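The same queries can be issued outside a notebook. The sketch below builds a request URL for Datadog's v1 timeseries query endpoint (`/api/v1/query`) using only the Python standard library. The helper names, the one-hour look-back window, and the placeholder run ID are assumptions for illustration. Sending the request additionally requires the `DD-API-KEY` and `DD-APPLICATION-KEY` headers.

```python
import time
from urllib.parse import urlencode


def final_result_query(metric_suffix, test_run_id, sample_label="total"):
    """Build a metric query for one jmeter.final_result.* metric."""
    return (
        f"avg:jmeter.final_result.{metric_suffix}"
        f"{{sample_label:{sample_label},test_run_id:{test_run_id}}}"
    )


def query_url(query, end_epoch, lookback_seconds=3600,
              site="https://api.datadoghq.com"):
    """Build the URL for a timeseries query over a window ending at end_epoch.

    The window must include the test completion time, because final result
    metrics are emitted only once.
    """
    params = {
        "from": int(end_epoch - lookback_seconds),
        "to": int(end_epoch),
        "query": query,
    }
    return f"{site}/api/v1/query?{urlencode(params)}"


if __name__ == "__main__":
    q = final_result_query("response_time.p95", "YOUR_RUN_ID")
    print(query_url(q, end_epoch=time.time()))
```

For a Datadog instance in the EU, pass `site="https://api.datadoghq.eu"`, in line with the `datadogUrl` option described earlier.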

Data Collected

Metrics

| Metric | Description |

|---|---|

| jmeter.active_threads.avg (gauge) | Average number of active threads during the interval. Shown as thread |

| jmeter.active_threads.max (gauge) | Maximum number of active threads during the interval. Shown as thread |

| jmeter.active_threads.min (gauge) | Minimum number of active threads during the interval. Shown as thread |

| jmeter.assertions.count (count) | Total number of assertion checks evaluated. Shown as assertion |

| jmeter.assertions.error (count) | Number of errors during assertion execution. Shown as assertion |

| jmeter.assertions.failed (count) | Number of failed assertion checks. Shown as assertion |

| jmeter.bytes_received.avg (gauge) | Average bytes received. Shown as byte |

| jmeter.bytes_received.count (count) | Number of samples used to compute the bytes received distribution. Shown as request |

| jmeter.bytes_received.max (gauge) | Maximum bytes received. Shown as byte |

| jmeter.bytes_received.median (gauge) | Median bytes received. Shown as byte |

| jmeter.bytes_received.min (gauge) | Minimum bytes received. Shown as byte |

| jmeter.bytes_received.p90 (gauge) | 90th percentile of bytes received. Shown as byte |

| jmeter.bytes_received.p95 (gauge) | 95th percentile of bytes received. Shown as byte |

| jmeter.bytes_received.p99 (gauge) | 99th percentile of bytes received. Shown as byte |

| jmeter.bytes_received.total (count) | Total bytes received during the interval. Shown as byte |

| jmeter.bytes_sent.avg (gauge) | Average bytes sent. Shown as byte |

| jmeter.bytes_sent.count (count) | Number of samples used to compute the bytes sent distribution. Shown as request |

| jmeter.bytes_sent.max (gauge) | Maximum bytes sent. Shown as byte |

| jmeter.bytes_sent.median (gauge) | Median bytes sent. Shown as byte |

| jmeter.bytes_sent.min (gauge) | Minimum bytes sent. Shown as byte |

| jmeter.bytes_sent.p90 (gauge) | 90th percentile of bytes sent. Shown as byte |

| jmeter.bytes_sent.p95 (gauge) | 95th percentile of bytes sent. Shown as byte |

| jmeter.bytes_sent.p99 (gauge) | 99th percentile of bytes sent. Shown as byte |

| jmeter.bytes_sent.total (count) | Total number of bytes sent during the interval (rate per second). Shown as byte |

| jmeter.cumulative.bytes_received.rate (gauge) | Bytes received per second for a label. Shown as byte |

| jmeter.cumulative.bytes_sent.rate (gauge) | Bytes sent per second for a label. Shown as byte |

| jmeter.cumulative.response_time.avg (gauge) | Average response time across all samples for a label. Shown as second |

| jmeter.cumulative.response_time.max (gauge) | Maximum response time across all samples for a label. Shown as second |

| jmeter.cumulative.response_time.median (gauge) | Median (p50) response time across all samples for a label. Shown as second |

| jmeter.cumulative.response_time.min (gauge) | Minimum response time across all samples for a label. Shown as second |

| jmeter.cumulative.response_time.p90 (gauge) | 90th percentile response time across all samples for a label. Shown as second |

| jmeter.cumulative.response_time.p95 (gauge) | 95th percentile response time across all samples for a label. Shown as second |

| jmeter.cumulative.response_time.p99 (gauge) | 99th percentile response time across all samples for a label. Shown as second |

| jmeter.cumulative.responses.error_percent (gauge) | Percentage of failed samples for a label (0-100). Shown as percent |

| jmeter.cumulative.responses_count (gauge) | Total number of samples collected for a label. Shown as response |

| jmeter.cumulative.throughput.rps (gauge) | Requests per second for a label. Shown as request |

| jmeter.final_result.bytes_received.rate (gauge) | Bytes received per second for a label (final test result only). Shown as byte |

| jmeter.final_result.bytes_sent.rate (gauge) | Bytes sent per second for a label (final test result only). Shown as byte |

| jmeter.final_result.response_time.avg (gauge) | Average response time across all samples for a label (final test result only). Shown as second |

| jmeter.final_result.response_time.max (gauge) | Maximum response time across all samples for a label (final test result only). Shown as second |

| jmeter.final_result.response_time.median (gauge) | Median (p50) response time across all samples for a label (final test result only). Shown as second |

| jmeter.final_result.response_time.min (gauge) | Minimum response time across all samples for a label (final test result only). Shown as second |

| jmeter.final_result.response_time.p90 (gauge) | 90th percentile response time across all samples for a label (final test result only). Shown as second |

| jmeter.final_result.response_time.p95 (gauge) | 95th percentile response time across all samples for a label (final test result only). Shown as second |

| jmeter.final_result.response_time.p99 (gauge) | 99th percentile response time across all samples for a label (final test result only). Shown as second |

| jmeter.final_result.responses.error_percent (gauge) | Percentage of failed samples for a label (final test result only). Shown as percent |

| jmeter.final_result.responses_count (gauge) | Total number of samples collected for a label (final test result only). Shown as response |

| jmeter.final_result.throughput.rps (gauge) | Requests per second for a label (final test result only). Shown as request |

| jmeter.latency.avg (gauge) | Average latency. Shown as second |

| jmeter.latency.count (count) | Number of samples used to compute the latency distribution. Shown as request |

| jmeter.latency.max (gauge) | Maximum latency. Shown as second |

| jmeter.latency.median (gauge) | Median latency. Shown as second |

| jmeter.latency.min (gauge) | Minimum latency. Shown as second |

| jmeter.latency.p90 (gauge) | P90 value of the latency. Shown as second |

| jmeter.latency.p95 (gauge) | P95 value of the latency. Shown as second |

| jmeter.latency.p99 (gauge) | P99 value of the latency. Shown as second |

| jmeter.response_time.avg (gauge) | Average value of the response time. Shown as second |

| jmeter.response_time.count (count) | Number of samples used to compute the response time distribution. Shown as request |

| jmeter.response_time.max (gauge) | Maximum value of the response time. Shown as second |

| jmeter.response_time.median (gauge) | Median value of the response time. Shown as second |

| jmeter.response_time.min (gauge) | Minimum value of the response time. Shown as second |

| jmeter.response_time.p90 (gauge) | P90 value of the response time. Shown as second |

| jmeter.response_time.p95 (gauge) | P95 value of the response time. Shown as second |

| jmeter.response_time.p99 (gauge) | P99 value of the response time. Shown as second |

| jmeter.responses_count (count) | Count of the number of responses received by sampler and by status. Shown as response |

| jmeter.threads.finished (gauge) | Total number of threads finished. Shown as thread |

| jmeter.threads.started (gauge) | Total number of threads started. Shown as thread |

The plugin emits three types of metrics:

- Interval metrics (`jmeter.*`): Real-time metrics reset each reporting interval, useful for monitoring during test execution.

- Cumulative metrics (`jmeter.cumulative.*`): Aggregate statistics over the entire test duration, similar to JMeter’s Aggregate Reports. These include a `final_result` tag (`true` at test end, `false` during execution).

- Final result metrics (`jmeter.final_result.*`): Emitted only once at test completion, providing an unambiguous way to query final test results without filtering by tag.

Service Checks

JMeter does not include any service checks.

Events

The plugin sends Datadog Events at the start and end of each test run:

- JMeter Test Started: Sent when the test begins

- JMeter Test Ended: Sent when the test completes

These events appear in the Datadog Event Explorer and can be used to correlate metrics with test execution windows.

Troubleshooting

If you’re not seeing JMeter metrics in Datadog, check your jmeter.log file, which should be in the /bin folder of your JMeter installation.
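A quick way to narrow down the log is to filter it for plugin-related lines. The sketch below is a generic filter, not a parser for a known log format: it assumes only that relevant lines mention `datadog` (case-insensitively), and the path shown is a placeholder for your own installation.

```python
from pathlib import Path


def datadog_log_lines(log_path, keyword="datadog"):
    """Return lines from a JMeter log that mention the keyword."""
    text = Path(log_path).read_text(encoding="utf-8", errors="replace")
    return [line for line in text.splitlines() if keyword.lower() in line.lower()]


if __name__ == "__main__":
    # Placeholder path: adjust to the bin folder of your JMeter installation.
    log_file = Path("apache-jmeter/bin/jmeter.log")
    if log_file.exists():
        for line in datadog_log_lines(log_file):
            print(line)
    else:
        print(f"No log file found at {log_file}")
```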

Troubleshoot missing runner_id tag

This is normal in local mode. The runner_id tag is only emitted in distributed tests, where JMeter provides a distributed prefix. In local runs, use runner_host or runner_mode:local for filtering instead.

Need help? Contact Datadog support.
