Connect Databricks for Warehouse-Native Experiment Analysis

Overview

Warehouse-native experiment analysis lets you run statistical computations directly in your data warehouse.

To set this up for Databricks, connect a Databricks service principal to Datadog and configure your experiment settings. This guide covers:

- Granting permissions to the service principal

- Connecting Databricks to Datadog

- Configuring experiment settings in Datadog

Prerequisites

Datadog Experiments connects to Databricks through the Datadog Databricks integration. If you already have a Databricks integration configured for the workspace you plan to use, skip to Step 1. Otherwise, follow the steps below to create a service principal.

Create a Databricks service principal

In your Databricks Workspace:

- Click your profile in the top right corner and select Settings.

- In the Settings menu, click Identity and access.

- On the Service principals row, click Manage, then:

  - Click Add service principal, then Add new.

  - Enter a service principal name and click Add.

  - Click the name of the new service principal to open its details page.

  - Select the Permissions tab, then:

    - Click Grant access.

    - Under User, Group or Service Principal, enter the service principal name.

    - Using the Permission dropdown, select Manage.

    - Click Save.

  - Select the Secrets tab, then:

    - Click Generate secret.

    - Set the Lifetime (days) value to the maximum allowed (for example, 730).

    - Click Generate.

    - Note the Secret and Client ID. You need both in Step 2.

    - Click Done.

- In the Settings menu, click Identity and access.

- On the Groups row, click Manage, then:

  - Click admins, then Add members.

  - Enter the service principal name and click Add.
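Because the secret stops working once its lifetime runs out, it helps to record when it will need rotating. The following Python sketch estimates the expiry date from the day you generated the secret and the Lifetime (days) value. It assumes the lifetime is counted in whole calendar days from the generation date; the function names are hypothetical, and the authoritative expiry is whatever Databricks reports for the secret.

```python
from datetime import date, timedelta

# Assumption: the Lifetime (days) value entered on the Secrets tab.
# 730 is the example maximum mentioned in the steps above.
SECRET_LIFETIME_DAYS = 730


def secret_expiry(created_on: date, lifetime_days: int = SECRET_LIFETIME_DAYS) -> date:
    """Estimate the date on which a secret generated on `created_on` expires."""
    if lifetime_days <= 0:
        raise ValueError("lifetime_days must be positive")
    return created_on + timedelta(days=lifetime_days)


def days_until_expiry(created_on: date, today: date,
                      lifetime_days: int = SECRET_LIFETIME_DAYS) -> int:
    """Days left before the secret expires (negative once it has expired)."""
    return (secret_expiry(created_on, lifetime_days) - today).days


if __name__ == "__main__":
    created = date(2025, 1, 1)
    print(secret_expiry(created))                          # 2027-01-01
    print(days_until_expiry(created, date(2026, 12, 1)))   # 31
```

A reminder set a few weeks before the estimated date leaves time to generate a new secret and update the Client Secret field in Datadog.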

After you create the service principal, continue to Step 1 to grant the required permissions.

If you plan to use other warehouse observability functionality in Datadog, see Datadog's Databricks integration documentation to determine which resources to enable.

Step 1: Grant permissions to the service principal

You must be an account admin to grant these permissions.

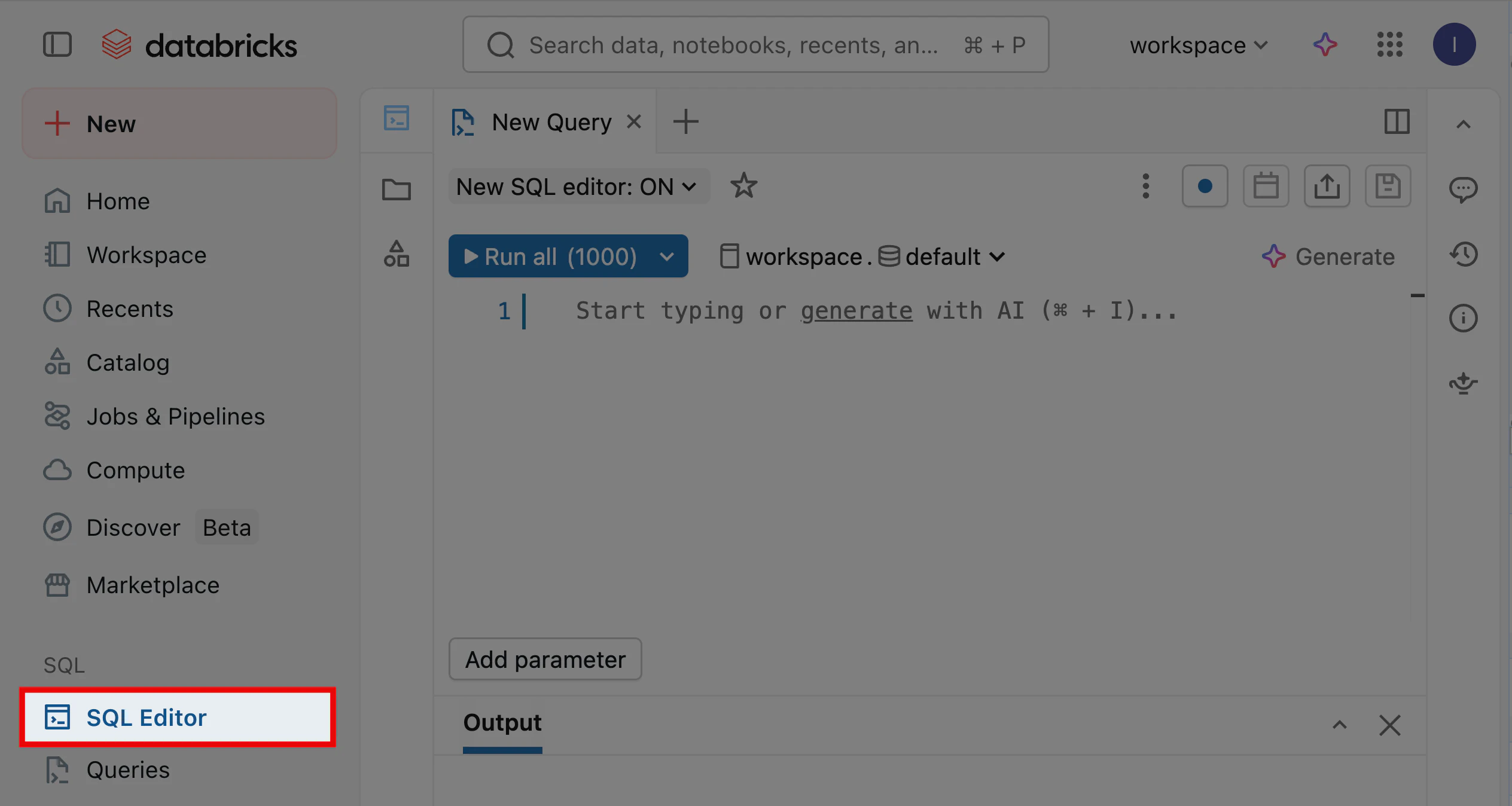

In your Databricks Workspace, open the SQL Editor to run the following commands and grant the service principal permissions for warehouse-native experiment analysis.

Grant read access to source tables

Grant the service principal read access to the tables containing your experiment metrics. Run both GRANT USE commands, then run the GRANT SELECT option that matches your access needs. Replace <catalog>, <schema>, <table>, and <principal> with the appropriate values.

```sql
GRANT USE CATALOG ON CATALOG <catalog> TO `<principal>`;
GRANT USE SCHEMA ON SCHEMA <catalog>.<schema> TO `<principal>`;

-- Option 1: Give read access to a single table
GRANT SELECT ON TABLE <catalog>.<schema>.<table> TO `<principal>`;

-- Option 2: Give read access to all tables in the schema
-- (Unity Catalog applies a schema-level SELECT grant to the tables in that schema)
GRANT SELECT ON SCHEMA <catalog>.<schema> TO `<principal>`;
```
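If your experiment metrics live in several tables, writing the statements by hand gets repetitive. This Python sketch builds the two GRANT USE statements plus one Option 1 GRANT SELECT per table, ready to paste into the SQL Editor. The helper name and its plain-identifier check are assumptions for illustration: names that need backtick quoting (for example, names containing hyphens) are rejected rather than escaped. For a service principal, the `<principal>` value is typically its application ID, which is the same value as its Client ID.

```python
import re
from typing import Iterable, List

# Hypothetical check: accept only plain identifiers (letters, digits, underscores)
# so catalog, schema, and table names can be interpolated into SQL unquoted.
_IDENT = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def _ident(name: str) -> str:
    if not _IDENT.fullmatch(name):
        raise ValueError(f"unsupported identifier: {name!r}")
    return name


def read_access_statements(
    catalog: str, schema: str, principal: str, tables: Iterable[str]
) -> List[str]:
    """Return GRANT statements giving `principal` read access to `tables`."""
    if "`" in principal:
        raise ValueError("principal must not contain backticks")
    who = f"`{principal}`"
    cat, sch = _ident(catalog), _ident(schema)
    statements = [
        f"GRANT USE CATALOG ON CATALOG {cat} TO {who};",
        f"GRANT USE SCHEMA ON SCHEMA {cat}.{sch} TO {who};",
    ]
    # Option 1, repeated once per source table.
    for table in tables:
        statements.append(f"GRANT SELECT ON TABLE {cat}.{sch}.{_ident(table)} TO {who};")
    if len(statements) == 2:
        raise ValueError("at least one table is required")
    return statements


if __name__ == "__main__":
    for stmt in read_access_statements("main", "analytics", "sp-123", ["events", "orders"]):
        print(stmt)
```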

Create an output schema

Run the following commands to create a schema where Datadog Experiments can write intermediate results and temporary tables. Replace datadog_experiments_output with your output schema name, and <catalog> and <principal> with the appropriate values.

```sql
CREATE SCHEMA IF NOT EXISTS <catalog>.datadog_experiments_output;
GRANT USE SCHEMA ON SCHEMA <catalog>.datadog_experiments_output TO `<principal>`;
GRANT CREATE TABLE ON SCHEMA <catalog>.datadog_experiments_output TO `<principal>`;
```

Configure a volume for temporary data staging

Datadog Experiments uses a volume to temporarily save exposure data before copying it into a Databricks table. Run the following commands to create and grant access to this volume. Replace datadog_experiments_output with your output schema name, and <catalog> and <principal> with the appropriate values.

```sql
CREATE VOLUME IF NOT EXISTS <catalog>.datadog_experiments_output.datadog_experiments_volume;
GRANT READ VOLUME ON VOLUME <catalog>.datadog_experiments_output.datadog_experiments_volume TO `<principal>`;
GRANT WRITE VOLUME ON VOLUME <catalog>.datadog_experiments_output.datadog_experiments_volume TO `<principal>`;
```
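The output schema and staging volume statements repeat the same catalog and schema names, and you enter those names again as the Catalog, Schema, and Volume in Step 3, so a typo in one place causes a mismatch. This Python sketch generates both groups of statements, in the order shown above, from a single set of names, defaulting to datadog_experiments_output and datadog_experiments_volume. The function name and the plain-identifier restriction are illustrative assumptions.

```python
import re
from typing import List

# Hypothetical check: accept only plain identifiers so names can be used unquoted.
_IDENT = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def _ident(name: str) -> str:
    if not _IDENT.fullmatch(name):
        raise ValueError(f"unsupported identifier: {name!r}")
    return name


def output_setup_statements(
    catalog: str,
    principal: str,
    schema: str = "datadog_experiments_output",
    volume: str = "datadog_experiments_volume",
) -> List[str]:
    """Return the output-schema and staging-volume statements, in run order."""
    if "`" in principal:
        raise ValueError("principal must not contain backticks")
    who = f"`{principal}`"
    fq_schema = f"{_ident(catalog)}.{_ident(schema)}"
    fq_volume = f"{fq_schema}.{_ident(volume)}"
    return [
        # Output schema for intermediate results and temporary tables.
        f"CREATE SCHEMA IF NOT EXISTS {fq_schema};",
        f"GRANT USE SCHEMA ON SCHEMA {fq_schema} TO {who};",
        f"GRANT CREATE TABLE ON SCHEMA {fq_schema} TO {who};",
        # Volume for staging exposure data before it is copied into a table.
        f"CREATE VOLUME IF NOT EXISTS {fq_volume};",
        f"GRANT READ VOLUME ON VOLUME {fq_volume} TO {who};",
        f"GRANT WRITE VOLUME ON VOLUME {fq_volume} TO {who};",
    ]


if __name__ == "__main__":
    for stmt in output_setup_statements("main", "sp-123"):
        print(stmt)
```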

Grant SQL warehouse access

Grant the service principal access to the SQL warehouse that Datadog Experiments uses to run queries.

- Navigate to SQL Warehouses in your Databricks Workspace.

- Select the warehouse for Datadog Experiments.

- In the top-right corner, click Permissions.

- Grant the service principal the Can use permission.

- Close the Manage permissions modal.

Step 2: Connect Databricks to Datadog

To connect your Databricks Workspace to Datadog for warehouse-native experiment analysis:

- Navigate to Datadog’s integrations page and search for Databricks.

- Click the Databricks tile to open its modal.

- Select the Configure tab and click Add Databricks Workspace. If this is your first Databricks account, the setup form appears automatically.

- Under the Connect a new Databricks Workspace section, enter:

  - Workspace Name

  - Workspace URL

  - Client ID (the service principal's Client ID)

  - Client Secret (the secret generated for the service principal)

  - System Tables SQL Warehouse ID

- Toggle off Jobs Monitoring and all other products.

- Toggle off the Metrics - Model Serving resource.

- Click Save Databricks Workspace.
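The System Tables SQL Warehouse ID field expects the warehouse's ID, not its display name. If you copy the warehouse's HTTP path from its connection details instead, the ID is the last path segment. The Python sketch below extracts it, assuming the path has the form /sql/1.0/warehouses/<warehouse-id>; the function name is hypothetical.

```python
def warehouse_id_from_http_path(http_path: str) -> str:
    """Return the warehouse ID from an HTTP path like '/sql/1.0/warehouses/<id>'.

    The path layout is an assumption; anything else raises ValueError.
    """
    # Strip whitespace and drop the empty segments left by leading/trailing slashes.
    parts = [p for p in http_path.strip().split("/") if p]
    if len(parts) != 4 or parts[0] != "sql" or parts[2] != "warehouses":
        raise ValueError(f"unexpected HTTP path: {http_path!r}")
    return parts[3]


if __name__ == "__main__":
    print(warehouse_id_from_http_path("/sql/1.0/warehouses/abc123def456"))  # abc123def456
```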

Step 3: Configure experiment settings

Datadog supports one warehouse connection per organization. Connecting Databricks replaces any existing warehouse connection (for example, Snowflake).

After you set up your Databricks integration and workspace, configure the experiment settings in Datadog:

- Open Datadog Product Analytics.

- In the left navigation, hover over Settings and click Experiments.

- Select the Warehouse Connections tab.

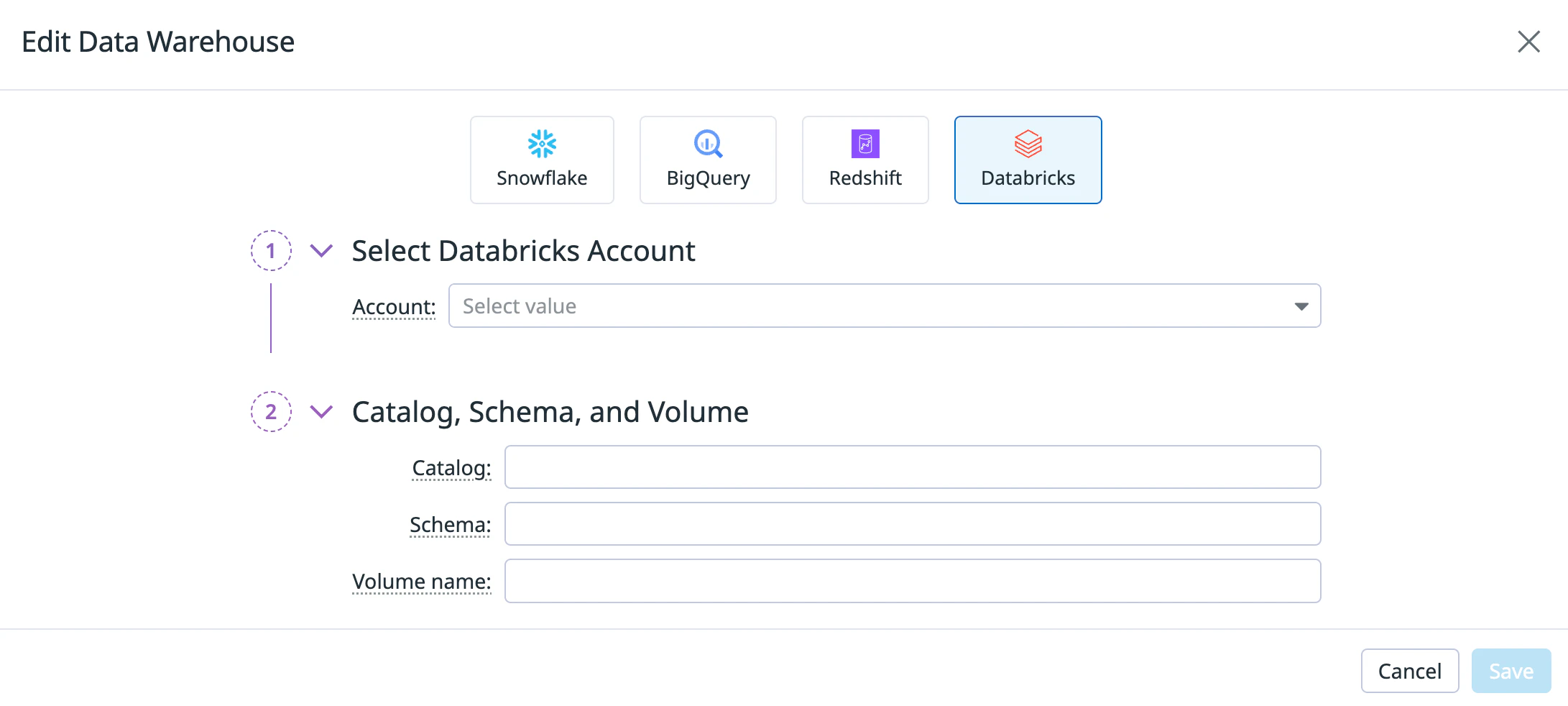

- Click Connect a data warehouse. If you already have a warehouse connected, click Edit instead.

- Select the Databricks tile.

- Using the Account dropdown, select the Databricks Workspace you configured in Step 2.

- Enter the Catalog, Schema, and Volume name you configured in Step 1. If your catalog and schema do not appear in the dropdown, enter them manually to add them to the list.

- Click Save.

After you save your warehouse connection, create experiment metrics using your Databricks data.
