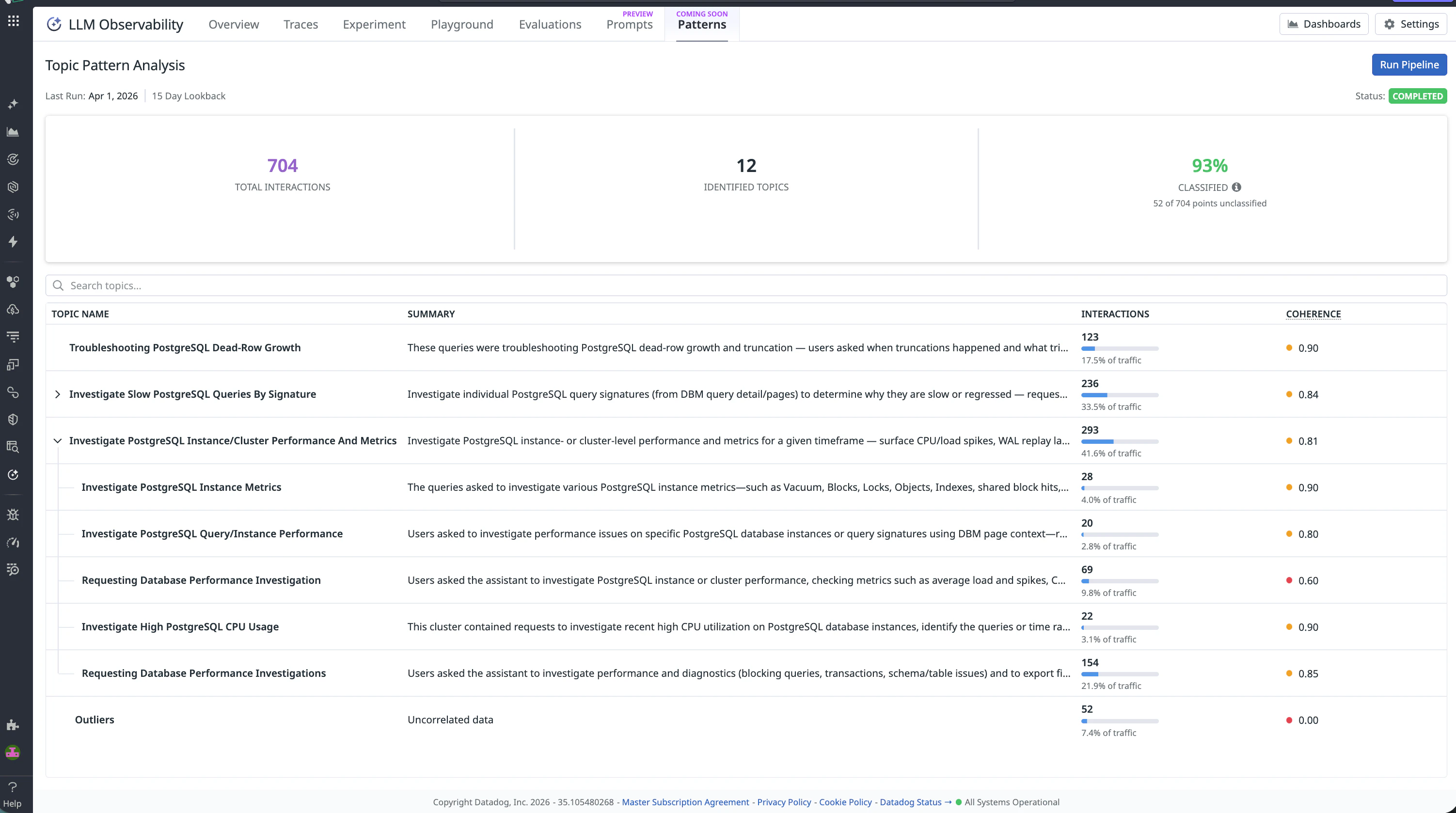

Patterns

Overview

Patterns automatically clusters your LLM application’s production traffic into meaningful topics, helping you understand what users are asking, identify coverage gaps, and diagnose failure modes.

How it works

Patterns uses text embeddings to group your application’s inputs into hierarchical topics. Topic labels are automatically generated using an LLM, giving you an interpretable view of production behavior without manual tagging.

When you run a pipeline, Patterns:

- Pulls LLM interactions from your production traffic based on your filter and sampling configuration

- Embeds interactions semantically and clusters them

- Names each cluster with an AI-generated label and summary using Datadog’s in-house models

- Organizes clusters into a parent-child topic hierarchy

Each topic shows its interaction volume, share of total traffic, and a coherence score — a measure of how semantically similar the interactions within the topic are to each other (0.0–1.0). Interactions that don’t fit any cluster are collected into an Outliers group.
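To make the coherence score concrete, here is a minimal sketch that treats it as the mean pairwise cosine similarity of the embeddings in a topic. This is an assumption for illustration only: the exact formula Patterns uses is not stated here, and the `cosine` and `coherence` helpers and the two-dimensional vectors are hypothetical.

```python
import math
from itertools import combinations


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def coherence(embeddings):
    """Mean pairwise cosine similarity (illustrative stand-in, not Datadog's formula)."""
    pairs = list(combinations(embeddings, 2))
    if not pairs:
        return 1.0  # a single interaction is trivially similar to itself
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)


# Hypothetical toy embeddings: one tight topic and one loose topic.
tight = [[1.0, 0.0], [0.9, 0.1], [1.0, 0.1]]
loose = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]

print(coherence(tight) > coherence(loose))
```

Under this reading, a topic whose interactions point in nearly the same direction in embedding space scores close to 1.0, while a topic that mixes unrelated requests scores lower.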

Explore your Patterns

Read the summary metrics

The top of the Patterns page shows three metrics from your most recent run:

- Total interactions: How many interactions were analyzed

- Identified topics: The total number of distinct topics found, including parent and child topics

- Classified: The percentage of analyzed interactions assigned to a named topic. Interactions in Outliers count as unclassified.

A high Classified percentage (above 80%) means the pipeline found meaningful structure in your traffic. A low percentage suggests high variance across interaction types or a filter that spans very different use cases.
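As a worked example of the Classified metric, the sketch below computes it from the total interaction count and the size of the Outliers group, following the definition above. The function name and the sample numbers are hypothetical.

```python
def classified_percentage(total_interactions, outlier_interactions):
    """Share of analyzed interactions that landed in a named topic, in percent."""
    if total_interactions <= 0:
        raise ValueError("total_interactions must be positive")
    if not 0 <= outlier_interactions <= total_interactions:
        raise ValueError("outlier_interactions must be between 0 and the total")
    return 100.0 * (total_interactions - outlier_interactions) / total_interactions


# Hypothetical run: 8,000 interactions analyzed, 1,200 of them in Outliers.
pct = classified_percentage(8000, 1200)
print(pct)  # 85.0, above the 80% mark mentioned above
```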

The topic table provides a hierarchical view of all discovered topics. Each topic shows:

- Topic name: Auto-generated based on the interactions in the cluster

- Summary: A plain-language description of what the topic represents

- Interactions: Count and percentage of total traffic

- Coherence: A measure of how semantically similar the interactions within the topic are to each other (0.0–1.0)

Expand parent topics to see their sub-topics and examine specific areas of your application’s traffic.

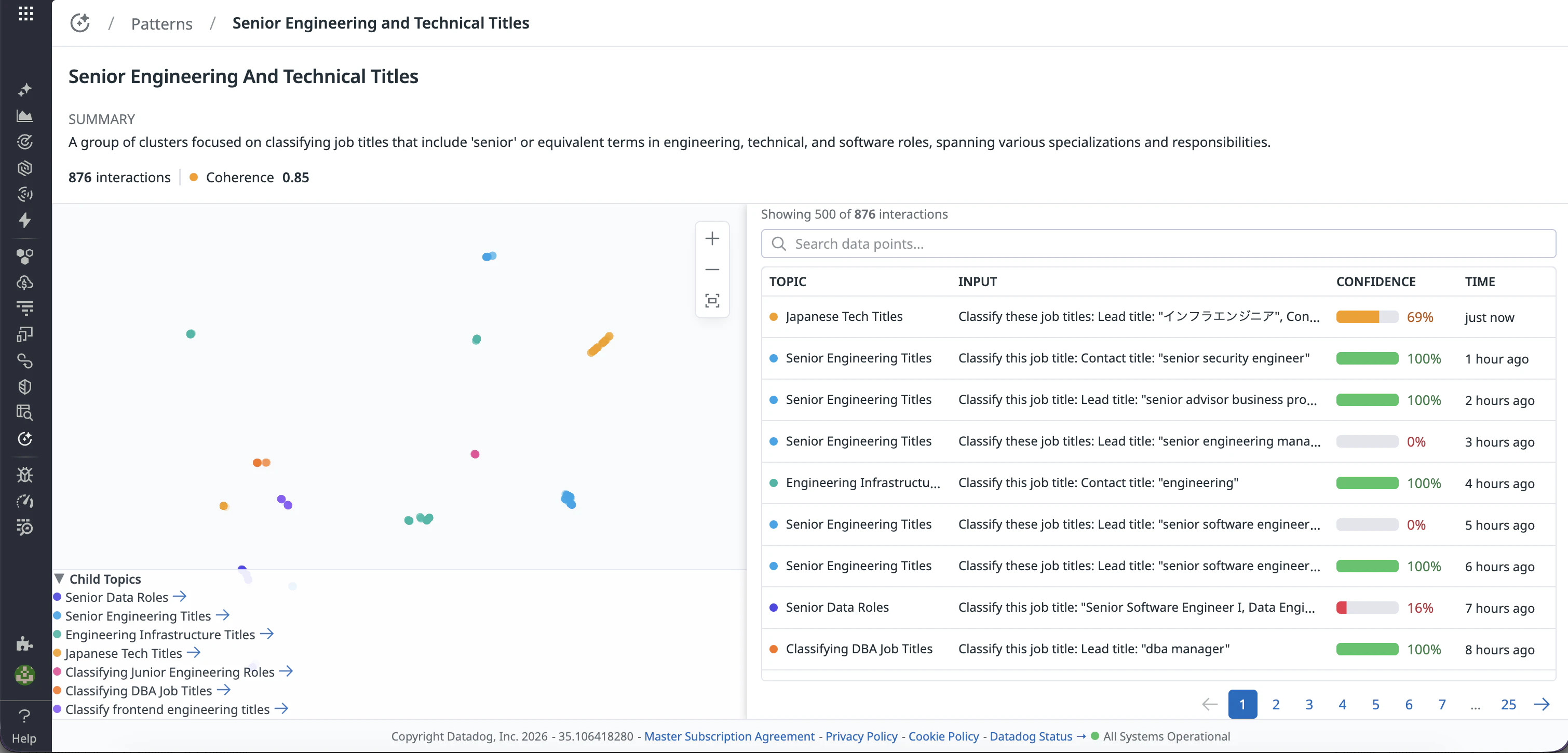

Open a topic’s detail view

Click any topic name to open the detail view. Here, you can:

- Read the summary of what this topic represents

- View the scatter plot. Each dot is an interaction, plotted by semantic similarity. Tighter clusters mean higher coherence.

- Browse the interactions table: real user inputs and outputs from production, with the sub-topic label and a confidence score for each

- Navigate to child topics listed below the scatter plot

Trigger a new run

You can trigger a new clustering pipeline run to re-analyze your production traffic.

- Click Run Pipeline.

- Configure your analysis:

  - Filter: Scope to a specific application, environment, or span type.

  - Sampling rate: Set what percentage of matching interactions to include. The pipeline processes up to 10,000 records per run; if your filter matches more than that, records are randomly sampled down to the cap.

  - Minimum Cluster Size (Advanced): Set the minimum threshold for topic formation.

- Click Run. The pipeline runs in the background and takes 5 to 10 minutes. You can close the page, or hover over the status pill to see the pipeline's progress.
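The interaction between the sampling rate and the 10,000-record cap can be sketched as follows. This is an illustration, not the pipeline's actual implementation: the order of operations (apply the rate first, then sample down to the cap) is an assumption, and `select_records` is a hypothetical helper.

```python
import random

MAX_RECORDS_PER_RUN = 10_000  # cap stated in the text


def select_records(matching, sampling_rate, seed=None):
    """Pick the records a run would analyze (assumed order: rate first, then cap)."""
    if not 0.0 <= sampling_rate <= 1.0:
        raise ValueError("sampling_rate must be between 0.0 and 1.0")
    rng = random.Random(seed)
    target = round(len(matching) * sampling_rate)
    sampled = rng.sample(matching, target)
    if len(sampled) > MAX_RECORDS_PER_RUN:
        # More records than the cap: randomly sample down to it.
        sampled = rng.sample(sampled, MAX_RECORDS_PER_RUN)
    return sampled


# Hypothetical filters matching 50,000 and 1,000 interactions at a 50% rate.
large = select_records(list(range(50_000)), 0.5, seed=1)
small = select_records(list(range(1_000)), 0.5, seed=1)
print(len(large), len(small))  # 10000 500
```

In practice, this means that a broad filter with a high sampling rate still yields at most 10,000 analyzed interactions, so narrowing the filter is the more direct way to focus a run.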

When the pipeline completes, the Patterns page updates with the run date, lookback window, and status.

Use topics to improve your application

Understand your production traffic

Use the topic list to see what users are actually doing with your application.

Use traffic percentage to identify your most common use cases. The parent-child hierarchy helps you move from a high-level pattern down to the specific sub-patterns underneath.

Find evaluation coverage gaps

Compare your topic distribution against what your golden datasets actually cover. Look at topics that represent high production volume but have no corresponding evaluation cases: this is where your test coverage has gaps, and where model regressions are least likely to be caught before they reach users.
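One way to run this comparison, sketched under assumptions: suppose you have each topic's share of traffic and a count of golden-dataset cases you have mapped to each topic yourself. The `coverage_gaps` helper, the 5% threshold, and the topic names are all hypothetical; Patterns is not described here as producing this mapping.

```python
def coverage_gaps(topic_share, eval_cases, min_share=0.05):
    """Return (topic, share) pairs with notable traffic and no evaluation cases."""
    gaps = [
        (topic, share)
        for topic, share in topic_share.items()
        if share >= min_share and eval_cases.get(topic, 0) == 0
    ]
    # Largest uncovered topics first.
    return sorted(gaps, key=lambda item: item[1], reverse=True)


# Hypothetical topic shares from a run and hand-mapped golden-dataset counts.
topic_share = {"billing questions": 0.40, "refund requests": 0.25,
               "password reset": 0.30, "other": 0.05}
eval_cases = {"billing questions": 120, "password reset": 0, "other": 3}

gaps = coverage_gaps(topic_share, eval_cases)
print(gaps)  # [('password reset', 0.3), ('refund requests', 0.25)]
```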

Diagnose failure patterns

Scope your pipeline filter to spans with poor quality scores or failed evaluations, then run the pipeline. The resulting topic taxonomy shows which types of requests are failing most, giving you a structured way to prioritize fixes instead of debugging trace by trace.

Track how traffic evolves

Re-run the pipeline periodically and compare topic distributions over time. A topic near the top of the list that wasn't there last month suggests that your users have found a new use case (or a new failure mode).
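A comparison between two runs could look like the sketch below. It assumes you have recorded each run's topic shares yourself and that topics can be matched by name, which may not hold because labels are AI-generated and can change between runs. The `topic_shifts` helper and the sample data are hypothetical.

```python
def topic_shifts(previous, current, min_change=0.02):
    """List topics whose traffic share moved by at least min_change between runs."""
    shifts = []
    for topic in set(previous) | set(current):
        delta = current.get(topic, 0.0) - previous.get(topic, 0.0)
        if abs(delta) < min_change:
            continue
        if topic not in previous:
            status = "new"
        elif topic not in current:
            status = "gone"
        else:
            status = "changed"
        shifts.append((topic, status, round(delta, 4)))
    # Biggest movements first.
    return sorted(shifts, key=lambda s: abs(s[2]), reverse=True)


# Hypothetical topic shares from last month's run and this month's run.
previous = {"billing": 0.40, "login": 0.30, "export": 0.30}
current = {"billing": 0.39, "login": 0.22, "export": 0.19, "refunds": 0.20}

shifts = topic_shifts(previous, current)
print(shifts)  # [('refunds', 'new', 0.2), ('export', 'changed', -0.11), ('login', 'changed', -0.08)]
```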