Google Cloud Platform

Service account impersonation is not available for the selected Datadog site.

Overview

Use this guide to get started monitoring your Google Cloud environment. This approach simplifies setup for Google Cloud environments with multiple projects, which lets you maximize monitoring coverage.

See the full list of Google Cloud integrations

The Datadog Google Cloud integration collects all Google Cloud metrics. Datadog continually updates the docs to show every dependent integration, but the list of integrations sometimes lags behind the latest cloud metrics and services.

If you don't see an integration for a specific Google Cloud service, contact Datadog support.

| Integration | Description |
|---|---|
| App Engine | PaaS (platform as a service) for building scalable applications |
| BigQuery | Enterprise data warehouse |
| Bigtable | NoSQL Big Data database service |
| Cloud SQL | MySQL database service |
| Cloud APIs | Programmatic interfaces for all Google Cloud Platform services |
| Cloud Armor | Network security service that helps protect against denial-of-service and web attacks |
| Cloud Composer | Fully managed workflow orchestration service |
| Cloud Dataproc | Cloud service for running Apache Spark and Apache Hadoop clusters |
| Cloud Dataflow | Fully managed service for transforming and enriching data in stream and batch modes |
| Cloud Filestore | High-performance, fully managed file storage |
| Cloud Firestore | Flexible, scalable database for mobile, web, and server development |
| Cloud Interconnect | Hybrid connectivity |
| Cloud IoT | Secure device connection and management |
| Cloud Load Balancing | Distribute load-balanced compute resources |
| Cloud Logging | Real-time log management and analysis |
| Cloud Memorystore for Redis | Fully managed in-memory data store service |
| Cloud Router | Exchange routes between your VPC and on-premises networks using BGP |
| Cloud Run | Managed compute platform that runs stateless containers over HTTP |
| Cloud Security Command Center | Security Command Center is a threat reporting service |
| Cloud Tasks | Distributed task queues |
| Cloud TPU | Train and run machine learning models |
| Compute Engine | High-performance virtual machines |
| Container Engine | Kubernetes, managed by Google |
| Datastore | NoSQL database |
| Firebase | Mobile platform for application development |
| Functions | Serverless platform for building event-based microservices |
| Kubernetes Engine | Cluster manager and orchestration system |
| Machine Learning | Machine learning services |
| Private Service Connect | Access managed services with private VPC connections |
| Pub/Sub | Real-time messaging service |
| Spanner | Horizontally scalable, globally consistent relational database service |
| Storage | Unified object storage |
| Vertex AI | Build, train, and deploy custom machine learning (ML) models |
| VPN | Managed network functionality |

Setup

Set up the Google Cloud integration in Datadog to collect metrics and logs from your Google Cloud services.

Prerequisites

If your organization restricts identities by domain, you must add Datadog's customer identity as an allowed value in your policy. Datadog's customer identity: `C0147pk0i`

Service account impersonation and automatic project discovery rely on you having certain roles and APIs enabled to monitor projects. Before you start, make sure the following APIs are enabled for each project you want to monitor:

- Cloud Monitoring API: Allows Datadog to query your Google Cloud metric data.

- Compute Engine API: Allows Datadog to discover compute instance data.

- Cloud Asset API: Allows Datadog to request Google Cloud resources and attach relevant labels to metrics as tags.

- Cloud Resource Manager API: Allows Datadog to attach the correct resources and tags to metrics.

- IAM API: Allows Datadog to authenticate with Google Cloud.

- Cloud Billing API: Allows developers to manage billing for their Google Cloud Platform projects programmatically. For more information, see the Cloud Cost Management (CCM) documentation.

Also make sure that the projects being monitored are not configured as scoping projects that pull in metrics from multiple other projects.
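The API prerequisites above can also be prepared from the command line. The following is a minimal sketch, not an official procedure: it only builds `gcloud services enable` command lines for review and does not run them. The service identifiers in `REQUIRED_APIS` are assumed to be the standard names of the APIs listed above, and the function name and project IDs are hypothetical.

```python
import shlex

# Assumed service identifiers for the APIs named in the prerequisites.
REQUIRED_APIS = [
    "monitoring.googleapis.com",            # Cloud Monitoring API
    "compute.googleapis.com",               # Compute Engine API
    "cloudasset.googleapis.com",            # Cloud Asset API
    "cloudresourcemanager.googleapis.com",  # Cloud Resource Manager API
    "iam.googleapis.com",                   # IAM API
    "cloudbilling.googleapis.com",          # Cloud Billing API
]


def enable_api_commands(project_ids):
    """Return one `gcloud services enable` command line per project."""
    commands = []
    for project_id in project_ids:
        args = ["gcloud", "services", "enable", *REQUIRED_APIS,
                "--project", project_id]
        commands.append(shlex.join(args))
    return commands


if __name__ == "__main__":
    # Hypothetical project IDs, for illustration only.
    for line in enable_api_commands(["my-project-a", "my-project-b"]):
        print(line)
```

Printing the commands instead of executing them keeps the sketch safe to run anywhere; review the output before pasting it into a shell that is authenticated against your projects.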

Metric collection

Installation

Organization-level metric collection is not available for the selected Datadog site.

Organization-level (or folder-level) monitoring is recommended for comprehensive coverage of all projects, including any future projects created in the organization or folder.

Note: Your Google Cloud Identity user account must have the Admin role assigned at the desired scope (for example, Organization Admin) to complete the setup in Google Cloud.

1. Create a Google Cloud service account in the default project

- Open your Google Cloud console.

- Go to IAM & Admin > Service Accounts.

- Click Create service account at the top.

- Give the service account a unique name.

- Click Done to finish creating the service account.

2. Add the service account at the organization or folder level

- In the Google Cloud console, go to the IAM page.

- Select a folder or organization.

- To grant a role to a principal that does not already have other roles on the resource, click Grant Access, then enter the email address of the service account you created earlier.

- Assign the following roles:

- Compute Viewer provides read-only access to get and list Compute Engine resources

- Monitoring Viewer provides read-only access to the monitoring data available in your Google Cloud environment

- Cloud Asset Viewer provides read-only access to cloud asset metadata

- Browser provides read-only access to browse the hierarchy of a project

- Service Usage Consumer (optional, for multi-project environments) provides per-project cost and API quota attribution after this feature has been enabled by Datadog support.

- Click Save.

Note: The Browser role is only required in the default project of the service account. Other projects require only the other listed roles.
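The role assignment above can be sketched as a set of `gcloud organizations add-iam-policy-binding` command lines. This is an illustration under stated assumptions: the role IDs are assumed to be the identifiers behind the role names listed above, the organization ID and service account email are hypothetical, and the commands are only generated, not executed.

```python
import shlex

# Assumed role IDs for Compute Viewer, Monitoring Viewer,
# Cloud Asset Viewer, and Browser.
ORG_ROLES = [
    "roles/compute.viewer",
    "roles/monitoring.viewer",
    "roles/cloudasset.viewer",
    "roles/browser",
]
# Assumed role ID for the optional Service Usage Consumer role.
OPTIONAL_ROLE = "roles/serviceusage.serviceUsageConsumer"


def org_binding_commands(org_id, sa_email, per_project_quota=False):
    """Build one binding command per role for the given service account."""
    roles = ORG_ROLES + ([OPTIONAL_ROLE] if per_project_quota else [])
    return [
        shlex.join([
            "gcloud", "organizations", "add-iam-policy-binding", org_id,
            f"--member=serviceAccount:{sa_email}",
            f"--role={role}",
        ])
        for role in roles
    ]


if __name__ == "__main__":
    # Hypothetical organization ID and service account email.
    email = "datadog-integration@my-project-123.iam.gserviceaccount.com"
    for line in org_binding_commands("123456789012", email):
        print(line)
```

For folder-level monitoring, the equivalent command group would be `gcloud resource-manager folders add-iam-policy-binding` with a folder ID in place of the organization ID.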

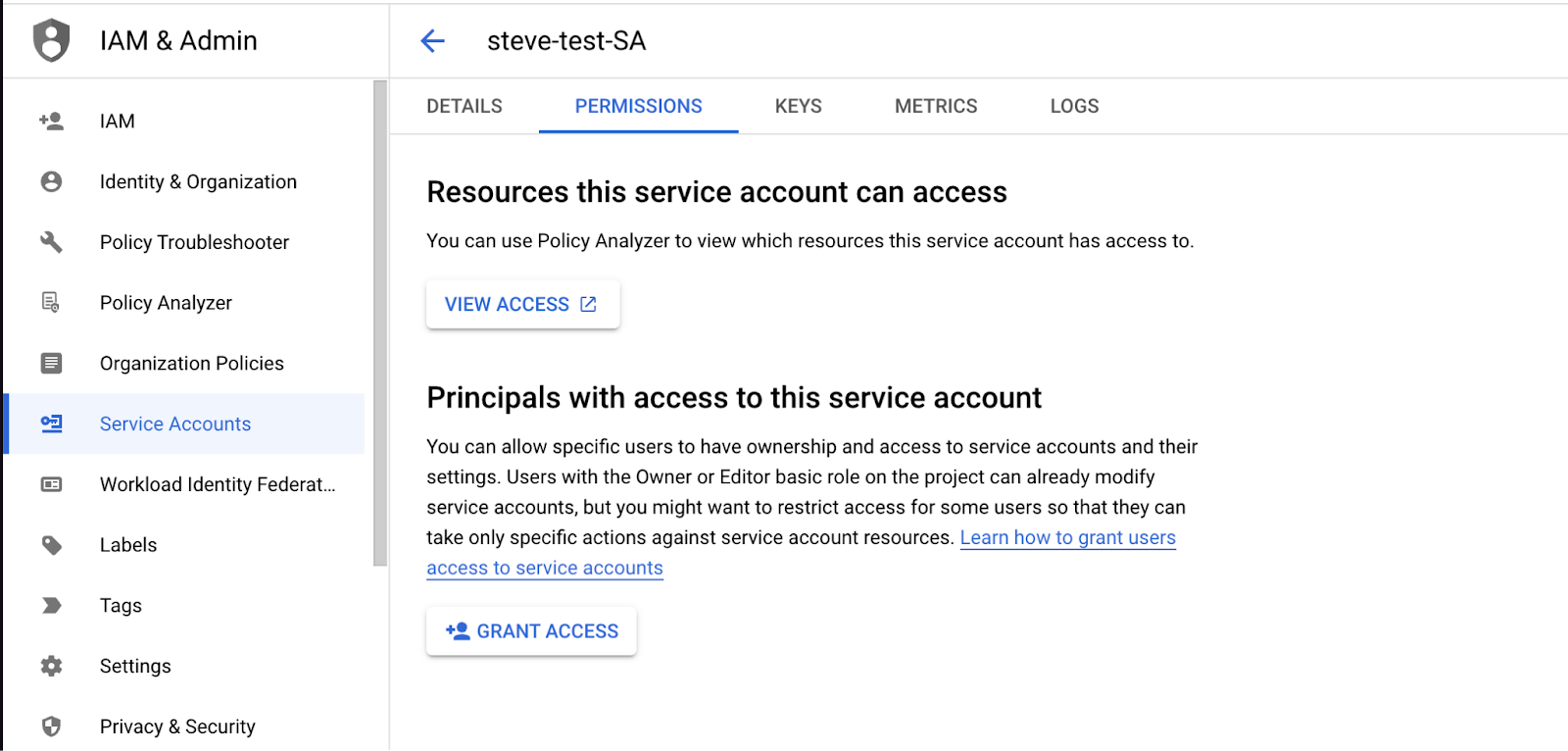

3. Add the Datadog principal to your service account

Note: If you previously configured access using a shared Datadog principal, you can revoke the permission for that principal after you complete these steps.

- In Datadog, go to Integrations > Google Cloud Platform.

- Click Add Google Cloud Account. If you have no configured projects, you are automatically redirected to this page.

- Copy your Datadog principal and keep it for the next section.

Note: Keep this window open for section 4.

- In the Google Cloud console, under the Service Accounts menu, find the service account you created in section 1.

- Go to the Permissions tab and click Grant Access.

- Paste your Datadog principal into the New principals text box.

- Assign the Service Account Token Creator role.

- Click Save.
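To make the effect of this step concrete, the sketch below shows what the grant looks like in the service account's IAM policy, using the usual `bindings` / `role` / `members` policy shape. It is an illustration only: the helper name is hypothetical, and it assumes the Datadog principal is a service account email, so the member string uses the `serviceAccount:` prefix.

```python
import copy

TOKEN_CREATOR = "roles/iam.serviceAccountTokenCreator"


def grant_token_creator(policy, datadog_principal):
    """Return a copy of an IAM policy with the principal bound to Token Creator.

    Assumption: the Datadog principal is a service account email.
    """
    updated = copy.deepcopy(policy)
    member = f"serviceAccount:{datadog_principal}"
    bindings = updated.setdefault("bindings", [])
    for binding in bindings:
        if binding.get("role") == TOKEN_CREATOR:
            # Reuse the existing binding and avoid duplicate members.
            if member not in binding.setdefault("members", []):
                binding["members"].append(member)
            return updated
    bindings.append({"role": TOKEN_CREATOR, "members": [member]})
    return updated


if __name__ == "__main__":
    # Hypothetical principal, for illustration only.
    print(grant_token_creator({"bindings": []}, "principal@example.iam.gserviceaccount.com"))
```

The function is idempotent, so applying it twice with the same principal leaves a single member entry.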

4. Complete the integration setup in Datadog

- In your Google Cloud console, go to the Service Account > Details tab. On this page, find the email associated with this Google service account. It has the format `<SA_NAME>@<PROJECT_ID>.iam.gserviceaccount.com`.

- Copy this email.

- Return to the integration configuration tile in Datadog (where you copied your Datadog principal in the previous section).

- Paste the email you copied into Add Service Account Email.

- Click Verify and Save Account.

Metrics appear in Datadog approximately 15 minutes after setup.
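Before pasting the email into Datadog, a quick shape check can catch copy-and-paste mistakes. The sketch below is a simplified check, not a full validator: it assumes both the service account name and the project ID are 6 to 30 characters of lowercase letters, digits, and hyphens, starting with a letter, and it will reject less common forms such as domain-scoped project IDs.

```python
import re

# Simplified pattern for <SA_NAME>@<PROJECT_ID>.iam.gserviceaccount.com.
SA_EMAIL = re.compile(
    r"^(?P<sa_name>[a-z][a-z0-9-]{4,28}[a-z0-9])"
    r"@(?P<project_id>[a-z][a-z0-9-]{4,28}[a-z0-9])"
    r"\.iam\.gserviceaccount\.com$"
)


def parse_service_account_email(email):
    """Return (sa_name, project_id), or None if the shape does not match."""
    match = SA_EMAIL.match(email.strip())
    if match is None:
        return None
    return match.group("sa_name"), match.group("project_id")


if __name__ == "__main__":
    # Hypothetical email, for illustration only.
    print(parse_service_account_email(
        "datadog-integration@my-project-123.iam.gserviceaccount.com"))
```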

Best practices for monitoring multiple projects

Enable per-project cost and API quota attribution

By default, Google Cloud attributes the cost of monitoring API calls, as well as API quota usage, to the project that contains the service account for this integration. As a best practice for Google Cloud environments with multiple projects, enable per-project attribution of monitoring API call costs and API quota usage. With this enabled, costs and quota usage are attributed to the project being queried rather than to the project that contains the service account. This provides visibility into the monitoring costs incurred by each project and also helps prevent reaching API rate limits.

To enable this feature:

- Make sure the Datadog service account has the Service Usage Consumer role at the desired scope (folder or organization).

- Click the Enable Per Project Quota toggle in the Projects tab of the Google Cloud integration page.

For the selected Datadog site, the Datadog Google Cloud integration uses service accounts to create an API connection between Google Cloud and Datadog. Follow the instructions below to create a service account and provide Datadog with the service account credentials so that it can begin making API calls on your behalf.

Service account impersonation is not available for the selected Datadog site.

Note: Google Cloud billing, the Cloud Monitoring API, the Compute Engine API, and the Cloud Asset API must all be enabled for any project you want to monitor.

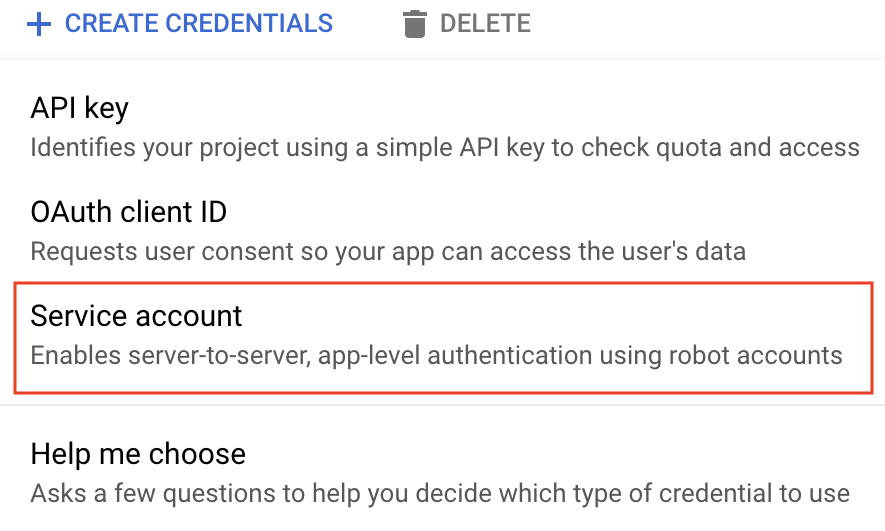

Go to the Google Cloud credentials page for the Google Cloud project you want to integrate with Datadog.

Click Create credentials.

Select Service account.

Give the service account a unique name and an optional description.

Click Create and continue.

Add the following roles:

- Compute Viewer

- Monitoring Viewer

- Cloud Asset Viewer

Click Done. Note: You must have the Service Account Key Admin role to select the Compute Engine and Cloud Asset roles. All selected roles allow Datadog to collect metrics, tags, events, and user labels on your behalf.

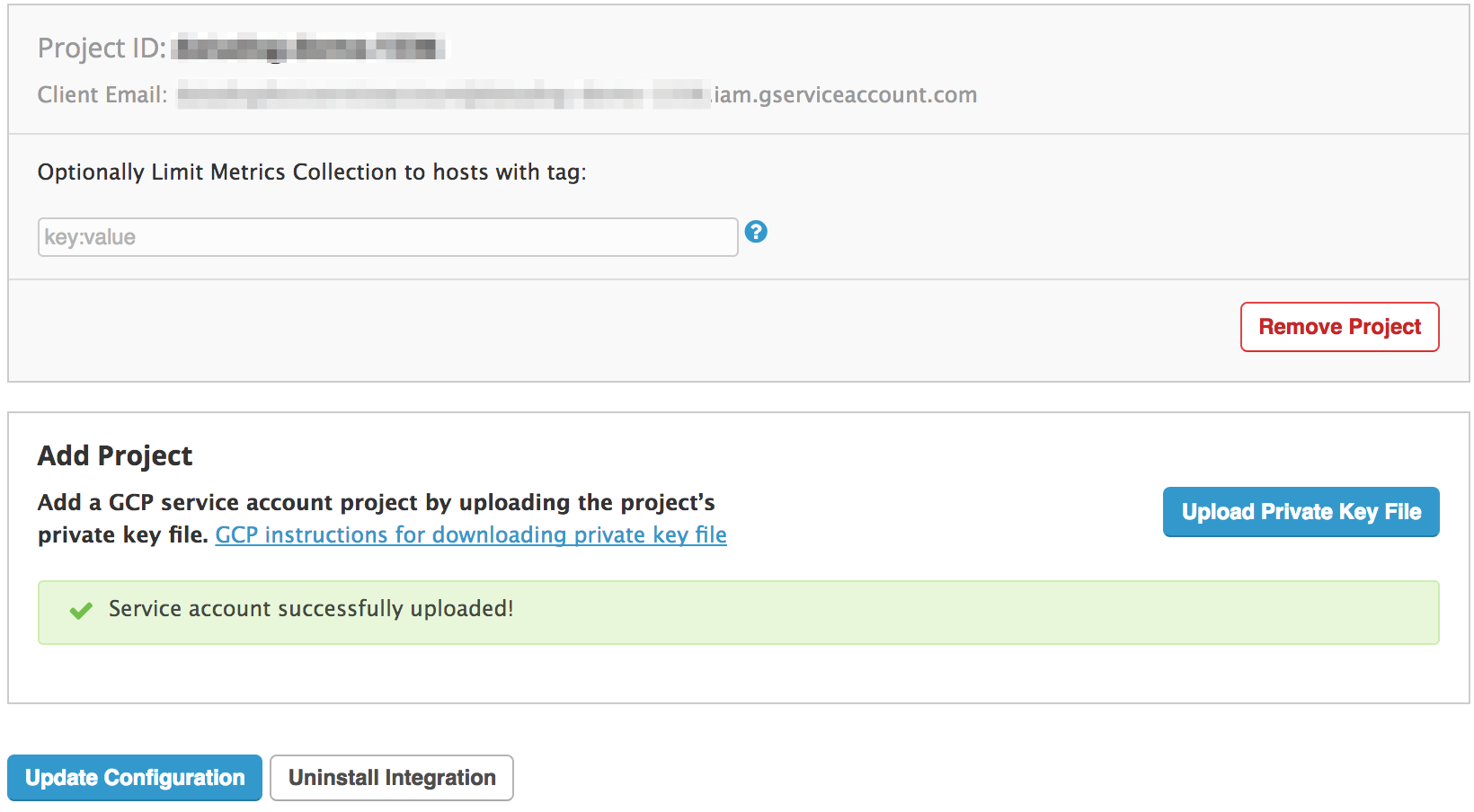

At the bottom of the page, find your service accounts and select the one you just created.

Click Add Key -> Create new key and choose JSON as the type.

Click Create. A JSON key file is downloaded to your computer. Note where it is saved, because you need it to finish the installation.

In the Configuration tab, select Upload Key File to integrate this project with Datadog.

Optionally, you can use tags to filter hosts and keep them out of this integration. Detailed instructions are in the configuration section.

Click Install/Update.

If you want to monitor multiple projects, use one of the following methods:

- Repeat the process above to use multiple service accounts.

- Use the same service account by updating the `project_id` in the downloaded JSON key file. Then upload the file to Datadog as described in the upload steps above, from selecting Upload Key File through clicking Install/Update.
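The second method above, reusing one key file across projects by changing its `project_id`, can be sketched as follows. The helper name and the sample key content are hypothetical; only the `project_id` field comes from the text above, and the other fields are left untouched.

```python
import json


def key_for_project(key_json_text, project_id):
    """Return the key file content with `project_id` replaced."""
    key = json.loads(key_json_text)
    if "project_id" not in key:
        raise ValueError("not a service account key file: missing project_id")
    key["project_id"] = project_id
    return json.dumps(key, indent=2)


if __name__ == "__main__":
    # Hypothetical, heavily truncated key content for illustration only.
    sample = json.dumps({
        "type": "service_account",
        "project_id": "original-project",
        "client_email": "datadog@original-project.iam.gserviceaccount.com",
    })
    print(key_for_project(sample, "second-project"))
```

Write the result to a new file rather than overwriting the download, so the original key remains available for the first project.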

You can use service account impersonation and automatic project discovery to integrate Datadog with Google Cloud.

This method lets you monitor all projects visible to a service account by assigning IAM roles in the relevant projects. You can assign these roles to projects individually, or you can configure Datadog to monitor groups of projects by assigning the roles at the organization or folder level. Assigning roles this way allows Datadog to automatically discover and monitor every project in the given scope, including new projects that may be added to the group in the future.

1. Create a Google Cloud service account

- Open your Google Cloud console.

- Go to IAM & Admin > Service Accounts.

- Click Create service account at the top.

- Give the service account a unique name and click Create and continue.

- Add the following roles to the service account:

- Monitoring Viewer

- Compute Viewer

- Cloud Asset Viewer

- Browser

- Click Continue, then Done to finish creating the service account.

2. Add the Datadog principal to your service account

In Datadog, go to Integrations > Google Cloud Platform.

Click Add GCP Account. If you have no configured projects, you are automatically redirected to this page.

If you have not generated a Datadog principal for your organization, click the Generate Principal button.

Copy your Datadog principal and keep it for the next section.

Note: Keep this window open for the next section.

In the Google Cloud console, under the Service Accounts menu, find the service account you created in the first section.

Go to the Permissions tab and click Grant Access.

Paste your Datadog principal into the New principals text box.

Assign the Service Account Token Creator role and click SAVE.

Note: If you previously configured access using a shared Datadog principal, you can revoke the permission for that principal after you complete these steps.

3. Complete the integration setup in Datadog

- In your Google Cloud console, go to the Service Account > Details tab. There, find the email associated with this Google service account. It resembles `<sa-name>@<project-id>.iam.gserviceaccount.com`.

- Copy this email.

- Return to the integration configuration tile in Datadog (where you copied your Datadog principal in the previous section).

- In the Add Service Account Email box, paste the email you copied earlier.

- Click Verify and Save Account.

Metrics appear in Datadog in approximately fifteen minutes.

Validation

To view your metrics, use the left menu to go to Metrics > Summary and search for `gcp`.

Configuration

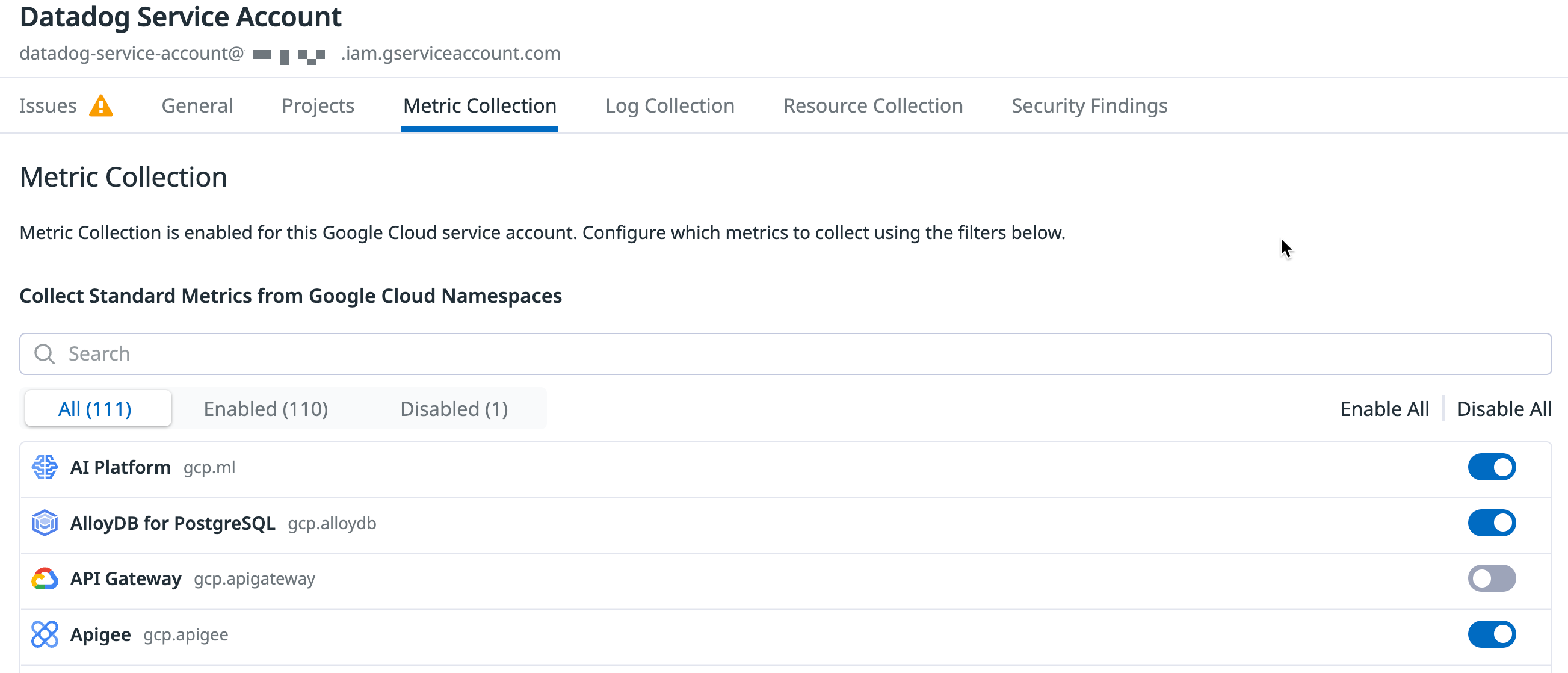

Limit metric collection by metric namespace

Optionally, you can choose which Google Cloud services to monitor with Datadog. Configuring metric collection for specific Google services lets you optimize your Google Cloud Monitoring API costs while retaining visibility into your critical services.

In the Metric Collection tab of the Datadog Google Cloud integration page, uncheck the metric namespaces you want to exclude. You can also disable collection of all metric namespaces.

Limit metric collection by tag

By default, all your Google Compute Engine (GCE) instances appear in Datadog's infrastructure overview. Datadog automatically tags them with GCE host tags and with any GCE labels you have added.

Optionally, you can use tags to limit the instances that are pulled into Datadog. In the project's Metric Collection tab, enter the tags in the Limit Metric Collection Filters text box. Only hosts that match one of the defined tags are imported into Datadog. You can use wildcards (`?` for a single character, `*` for multiple characters) to match many hosts, or `!` to exclude certain hosts. This example includes all c1* sized instances, but excludes staging hosts:

datadog:monitored,env:production,!env:staging,instance-type:c1.*

For more information, see Google's page on organizing resources using labels.
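To reason about which hosts a filter string would keep, the matching rules described above can be modeled locally. This is a sketch of one plausible reading, not Datadog's implementation: it assumes a host is collected when it matches at least one non-negated pattern and no `!` pattern, and it uses Python's `fnmatchcase` for the `?` and `*` wildcards (which also interprets `[...]` character classes, something the text above does not mention).

```python
from fnmatch import fnmatchcase


def parse_filter(filter_text):
    """Split a comma-separated filter into include and exclude patterns."""
    include, exclude = [], []
    for raw in filter_text.split(","):
        pattern = raw.strip()
        if not pattern:
            continue
        if pattern.startswith("!"):
            exclude.append(pattern[1:])
        else:
            include.append(pattern)
    return include, exclude


def host_is_collected(host_tags, filter_text):
    """Assumed semantics: any include match and no exclude match."""
    include, exclude = parse_filter(filter_text)

    def matches(patterns):
        return any(fnmatchcase(tag, p) for tag in host_tags for p in patterns)

    if matches(exclude):
        return False
    return matches(include) if include else True


if __name__ == "__main__":
    rule = "datadog:monitored,env:production,!env:staging,instance-type:c1.*"
    print(host_is_collected(["env:production"], rule))
    print(host_is_collected(["datadog:monitored", "env:staging"], rule))
```

Running a few representative tag sets through a model like this before changing the filter can help avoid accidentally dropping hosts from collection.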

Leverage the Datadog Agent

Use the Datadog Agent to collect more granular, low-latency metrics from your infrastructure. Install the Agent on any host, including GKE, to get deeper insight from the traces and logs it can collect. For more information, see Why should I install the Datadog Agent on my cloud instances?

Log collection

Forward logs from your Google Cloud services to Datadog using Google Cloud Dataflow and the Datadog template. This method compresses and batches events before forwarding them to Datadog.

You can use the terraform-gcp-datadog-integration module to manage this infrastructure through Terraform, or follow the instructions in this section to:

- Create a Pub/Sub topic and pull subscription to receive logs from a configured log sink

- Create a custom Dataflow worker service account to give your Dataflow pipeline workers least privilege

- Create a log sink to publish logs to the Pub/Sub topic

- Create a Dataflow job using the Datadog template to stream logs from the Pub/Sub subscription to Datadog

You have full control over which logs are sent to Datadog through the logging filters you create in the log sink, including GCE and GKE logs. See Google's Logging query language page for information about writing filters. For a detailed examination of the resulting architecture, see Stream logs from Google Cloud to Datadog in the Cloud Architecture Center.

Note: You must enable the Dataflow API to use Google Cloud Dataflow. For more information, see Enabling APIs in the Google Cloud documentation.

To collect logs from applications running in GCE or GKE, you can also use the Datadog Agent.

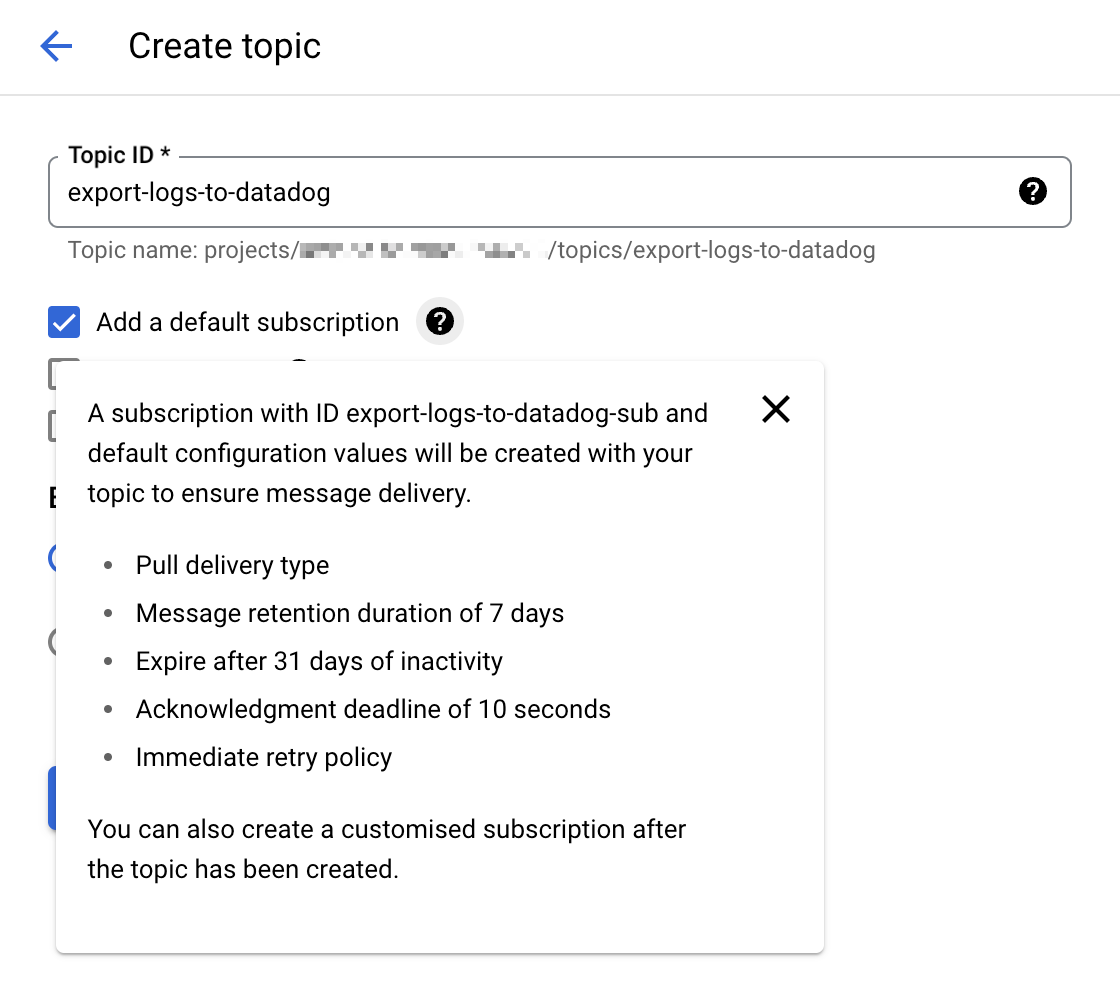

1. Create a Cloud Pub/Sub topic and subscription

Go to the Cloud Pub/Sub console and create a new topic. Select the Add a default subscription option to simplify the setup.

Note: You can also manually configure a Cloud Pub/Sub subscription with the Pull delivery type. If you create your Pub/Sub subscription manually, leave the `Enable dead lettering` box unchecked. For more information, see Unsupported Pub/Sub features.

Give that topic an explicit name such as `export-logs-to-datadog` and click Create.

Create an additional topic and default subscription to handle any log messages rejected by the Datadog API. The name of this topic is used in the Datadog Dataflow template as part of the path configuration for the `outputDeadletterTopic` template parameter. After you have inspected and fixed any issues in the failed messages, send them back to the original `export-logs-to-datadog` topic by running a Pub/Sub to Pub/Sub template job.

Datadog recommends creating a secret in Secret Manager, with your valid Datadog API key value, for later use in the Datadog Dataflow template.

Warning: Cloud Pub/Subs are subject to Google Cloud quotas and limitations. If your log volume exceeds those limits, Datadog recommends splitting your logs across several topics. For information about setting up monitor notifications when you approach those limits, see the Monitor the Cloud Pub/Sub log forwarding section.
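The two topics and their subscriptions can also be created with `gcloud pubsub` commands instead of the console. The sketch below only generates the command lines. The dead-letter topic name and the `-sub` suffix for subscription names are assumptions made for illustration; only `export-logs-to-datadog` comes from the step above. Subscriptions created without a push endpoint use pull delivery, which matches the requirement in the note.

```python
import shlex


def pubsub_setup_commands(topic="export-logs-to-datadog",
                          dead_letter_topic="export-logs-to-datadog-dlq"):
    """Build commands for the main and dead-letter topics and subscriptions.

    `dead_letter_topic` and the `-sub` suffix are hypothetical names.
    """
    commands = []
    for name in (topic, dead_letter_topic):
        commands.append(["gcloud", "pubsub", "topics", "create", name])
        commands.append(["gcloud", "pubsub", "subscriptions", "create",
                         f"{name}-sub", f"--topic={name}"])
    return [shlex.join(command) for command in commands]


if __name__ == "__main__":
    for line in pubsub_setup_commands():
        print(line)
```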

2. Create a custom Dataflow worker service account

By default, Dataflow pipeline workers use your project's default Compute Engine service account, which grants permissions to all resources in the project. If you are forwarding logs from a production environment, create a custom worker service account with only the necessary roles and permissions, and assign this service account to your Dataflow pipeline workers.

- Go to the Service Accounts page in the Google Cloud console and select your project.

- Click CREATE SERVICE ACCOUNT and give the service account a descriptive name. Click CREATE AND CONTINUE.

- Add the roles in the required permissions table and click DONE.

Required permissions

| Role | Path | Description |
|---|---|---|
| Dataflow Admin | `roles/dataflow.admin` | Allow this service account to perform Dataflow administrative tasks. |
| Dataflow Worker | `roles/dataflow.worker` | Allow this service account to perform Dataflow job operations. |
| Pub/Sub Viewer | `roles/pubsub.viewer` | Allow this service account to view messages from the Pub/Sub subscription with your Google Cloud logs. |
| Pub/Sub Subscriber | `roles/pubsub.subscriber` | Allow this service account to consume messages from the Pub/Sub subscription with your Google Cloud logs. |
| Pub/Sub Publisher | `roles/pubsub.publisher` | Allow this service account to publish failed messages to a separate subscription, so the logs can be analyzed or resent. |
| Secret Manager Secret Accessor | `roles/secretmanager.secretAccessor` | Allow this service account to access the Datadog API key in Secret Manager. |
| Storage Object Admin | `roles/storage.objectAdmin` | Allow this service account to read and write to the Cloud Storage bucket specified for staging files. |

Note: If you don't create a custom service account for the Dataflow pipeline workers, make sure the default Compute Engine service account has the required permissions above.
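A quick way to confirm the worker account is set up correctly is to compare its granted roles against the required ones. The sketch below assumes you have a project IAM policy available as a Python dictionary in the usual `bindings` / `role` / `members` shape (for example, parsed from JSON output of a policy export); the function name and the sample data are hypothetical.

```python
# Role paths taken from the required permissions list above.
REQUIRED_WORKER_ROLES = {
    "roles/dataflow.admin",
    "roles/dataflow.worker",
    "roles/pubsub.viewer",
    "roles/pubsub.subscriber",
    "roles/pubsub.publisher",
    "roles/secretmanager.secretAccessor",
    "roles/storage.objectAdmin",
}


def missing_worker_roles(policy, sa_email):
    """Return the required roles not yet granted to the service account."""
    member = f"serviceAccount:{sa_email}"
    granted = {
        binding["role"]
        for binding in policy.get("bindings", [])
        if member in binding.get("members", [])
    }
    return sorted(REQUIRED_WORKER_ROLES - granted)


if __name__ == "__main__":
    # Hypothetical policy and service account, for illustration only.
    email = "dataflow-worker@my-project-123.iam.gserviceaccount.com"
    policy = {"bindings": [
        {"role": "roles/dataflow.worker", "members": [f"serviceAccount:{email}"]},
    ]}
    print(missing_worker_roles(policy, email))
```

An empty list means every role in the table is bound directly to the account; roles inherited through groups or higher-level resources are not visible to this check.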

3. Exportar logs desde un tema Google Cloud Pub/Sub

Ve a la página del Logs Explorer en la consola de Google Cloud.

En la pestaña de Log Router (Enrutador de logs), selecciona Create Sink (Crear sumidero de datos).

Indica un nombre para el sumidero de datos.

Elige Cloud Pub/Sub como destino y selecciona el tema Cloud Pub/Sub creado para tal fin. Nota: El tema Cloud Pub/Sub puede encontrarse en un proyecto diferente.

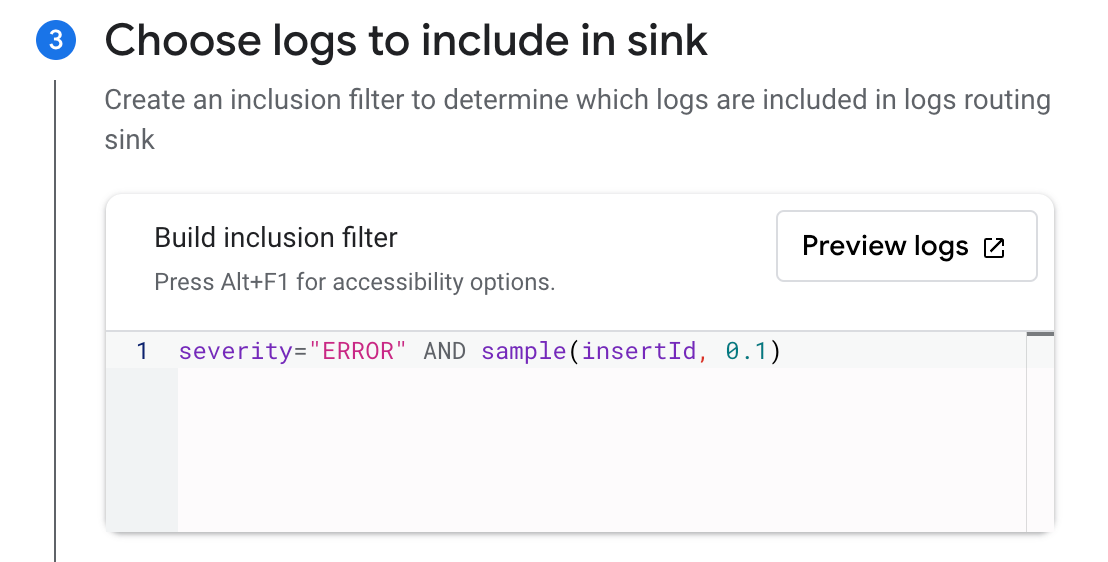

Elige los logs que quieres incluir en el sink con un filtro opcional de inclusión o exclusión. Puedes filtrar los logs con una consulta de búsqueda o utilizar la función de muestra. Por ejemplo, para incluir sólo el 10% de los logs con un nivel de

severitydeERROR, crea un filtro de inclusión conseverity="ERROR" AND sample(insertId, 0.1).Haz clic en Create Sink (Crear sink).

Nota: Es posible crear varias exportaciones desde Google Cloud Logging al mismo tema Cloud Pub/Sub con diferentes sumideros.
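The sampled inclusion filter from the example above, and a matching sink definition, can be sketched as follows. The filter text mirrors the example in this step; the `gcloud logging sinks create` command line is only generated, not executed, and the sink name, project ID, and helper names are hypothetical.

```python
import shlex


def sampled_severity_filter(severity, fraction):
    """Build an inclusion filter that keeps a fraction of logs at a severity."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    return f'severity="{severity}" AND sample(insertId, {fraction})'


def create_sink_command(sink_name, project_id, topic, log_filter):
    """Build a command that routes matching logs to a Pub/Sub topic."""
    destination = f"pubsub.googleapis.com/projects/{project_id}/topics/{topic}"
    return shlex.join([
        "gcloud", "logging", "sinks", "create", sink_name, destination,
        f"--log-filter={log_filter}",
    ])


if __name__ == "__main__":
    log_filter = sampled_severity_filter("ERROR", 0.1)
    # Hypothetical sink name and project ID.
    print(create_sink_command("datadog-sink", "my-project-123",
                              "export-logs-to-datadog", log_filter))
```

`shlex.join` quotes the filter argument so the embedded double quotes and spaces survive when the command is pasted into a shell.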

4. Create and run the Dataflow job

Go to the Create job from template page in the Google Cloud console.

Give the job a name and select a Dataflow regional endpoint.

Select `Pub/Sub to Datadog` in the Dataflow template dropdown. The Required parameters section appears.

a. Select the input subscription in the Pub/Sub input subscription dropdown.

b. Enter the following in the Datadog Logs API URL field: https://

Note: Make sure the Datadog site selector on the right of the page is set to your Datadog site before copying the URL above.

c. Select the topic created to receive message failures in the Output deadletter Pub/Sub topic dropdown.

d. Specify a path for temporary files in your storage bucket in the Temporary location field.

Under Optional Parameters, check `Include full Pub/Sub message in the payload`.

If you created a secret in Secret Manager with your Datadog API key value, as mentioned in step 1, enter the resource name of the secret in the Google Cloud Secret Manager ID field.

For details about using the other available options, see Template parameters in the Dataflow template:

- `apiKeySource=KMS` with `apiKeyKMSEncryptionKey` set to your Cloud KMS key ID and `apiKey` set to the encrypted API key.

- Not recommended: `apiKeySource=PLAINTEXT` with `apiKey` set to the plaintext API key.

If you created a custom worker service account, select it in the Service account email dropdown.

Click RUN JOB.

Note: If you have a shared VPC, see the Specify a network and subnetwork page in the Dataflow documentation for guidelines on specifying the Network and Subnetwork parameters.
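The console fields above correspond to template parameters, which can be assembled programmatically when the job is launched outside the console. The sketch below is hedged accordingly: `outputDeadletterTopic` and `apiKeySource` appear in this guide, while `inputSubscription`, `url`, `includePubsubMessage`, `apiKeySecretId`, and the `SECRET_MANAGER` value are assumed parameter names that you should confirm against the template's parameter reference. The Logs API URL is passed in by the caller because it depends on your Datadog site.

```python
def datadog_template_parameters(project_id, subscription, dead_letter_topic,
                                logs_api_url, secret_id=None):
    """Assemble template parameters (several names here are assumptions)."""
    params = {
        "inputSubscription": f"projects/{project_id}/subscriptions/{subscription}",
        "url": logs_api_url,
        "outputDeadletterTopic": f"projects/{project_id}/topics/{dead_letter_topic}",
        "includePubsubMessage": "true",
    }
    if secret_id is not None:
        # Assumed value and parameter name for the Secret Manager option.
        params["apiKeySource"] = "SECRET_MANAGER"
        params["apiKeySecretId"] = secret_id
    return params


def as_parameters_flag(params):
    """Render the parameters as a single comma-separated flag value."""
    return "--parameters=" + ",".join(f"{k}={v}" for k, v in params.items())


if __name__ == "__main__":
    # All names below are hypothetical placeholders.
    params = datadog_template_parameters(
        "my-project-123",
        "export-logs-to-datadog-sub",
        "export-logs-to-datadog-dlq",
        "https://<YOUR_DATADOG_LOGS_API_URL>",
        secret_id="projects/my-project-123/secrets/datadog-api-key/versions/1",
    )
    print(as_parameters_flag(params))
```

Keeping the API key in Secret Manager and passing only its resource name avoids placing the key itself in job parameters, which is the option this guide recommends over plaintext.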

Validation

New logging events delivered to the Cloud Pub/Sub topic appear in the Datadog Log Explorer.

Note: You can use the Google Cloud Pricing Calculator to estimate potential costs.

Monitor the Cloud Pub/Sub log forwarding

The Google Cloud Pub/Sub integration provides useful metrics for monitoring the status of the log forwarding:

- gcp.pubsub.subscription.num_undelivered_messages for the number of messages pending delivery

- gcp.pubsub.subscription.oldest_unacked_message_age for the age of the oldest unacknowledged message in a subscription

Use the metrics above with a metric monitor to receive alerts for the messages in your input and deadletter subscriptions.
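As an illustration, a metric monitor on the oldest unacknowledged message age could be described with a definition like the one below. This is a minimal sketch: the helper name, the subscription_id tag, the 10-minute window, and the threshold are all assumptions chosen for the example, so adjust them to the tags and limits that apply to your subscriptions.

```python
from typing import Any, Dict


def oldest_unacked_monitor(subscription_id: str, max_age_seconds: int) -> Dict[str, Any]:
    """Build a metric monitor definition as a plain dict (hypothetical helper)."""
    # Scope the query to one subscription; the tag name is an assumption.
    scope = "{subscription_id:" + subscription_id + "}"
    query = (
        "avg(last_10m):avg:gcp.pubsub.subscription.oldest_unacked_message_age"
        + scope
        + " > "
        + str(max_age_seconds)
    )
    return {
        "name": "Pub/Sub backlog age on " + subscription_id,
        "type": "metric alert",
        "query": query,
        "message": "Log forwarding may be stalled: messages are not being acknowledged.",
        "options": {"thresholds": {"critical": max_age_seconds}},
    }
```

The same shape works for gcp.pubsub.subscription.num_undelivered_messages by swapping the metric name and threshold.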

Monitor the Dataflow pipeline

Use Datadog's Google Cloud Dataflow integration to monitor all aspects of your Dataflow pipelines. You can see all your key Dataflow metrics on the out-of-the-box dashboard, enriched with contextual data such as information about the GCE instances running your Dataflow workloads and your Pub/Sub throughput.

You can also use a preconfigured recommended monitor to set up notifications for increases in the backlog time of your pipeline. For more information, see Monitor your Dataflow pipelines with Datadog on the Datadog blog.

Log collection from Google Cloud with a Pub/Sub Push subscription is being deprecated.

The earlier Push subscription documentation is maintained only for troubleshooting or modifying legacy setups.

Use a Pull subscription with the Datadog Dataflow template, as described in Dataflow method, to forward your Google Cloud logs to Datadog.

Expanded BigQuery monitoring

Join the Preview!

Expanded BigQuery monitoring is in Preview. Use this form to sign up and start gaining insight into your query performance.

Expanded BigQuery monitoring gives you granular visibility into your BigQuery environments.

BigQuery job performance monitoring

To monitor the performance of your BigQuery jobs, grant the BigQuery Resource Viewer role to the Datadog service account for each Google Cloud project.

Notes:

- You need to have verified your Google Cloud service account in Datadog, as outlined in the setup section.

- You do not need to set up Dataflow to collect logs for expanded BigQuery monitoring.

- In the Google Cloud console, go to the IAM page.

- Click Grant access.

- Enter the email of your service account in New principals.

- Assign the BigQuery Resource Viewer role.

- Click SAVE.

- On the Google Cloud integration page in Datadog, click the BigQuery tab.

- Click the Enable Query Performance toggle.
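The role grant in the steps above amounts to adding one binding to the project's IAM policy. The sketch below shows that idea on a policy represented as a plain dict in the usual bindings/role/members shape. The helper name is hypothetical, and the role ID roles/bigquery.resourceViewer is assumed to correspond to the BigQuery Resource Viewer role; confirm it in the IAM role reference.

```python
import copy
from typing import Any, Dict


def add_binding(policy: Dict[str, Any], role: str, member: str) -> Dict[str, Any]:
    """Return a copy of an IAM policy with the member added to the role (hypothetical helper)."""
    updated = copy.deepcopy(policy)  # never mutate the caller's policy
    bindings = updated.setdefault("bindings", [])
    for binding in bindings:
        if binding.get("role") == role:
            members = binding.setdefault("members", [])
            if member not in members:  # keep the operation idempotent
                members.append(member)
            return updated
    # No binding for this role yet: create one.
    bindings.append({"role": role, "members": [member]})
    return updated


# Example with placeholder values (assumed role ID, made-up service account).
policy = {"bindings": []}
policy = add_binding(
    policy,
    "roles/bigquery.resourceViewer",
    "serviceAccount:datadog@example-project.iam.gserviceaccount.com",
)
```

Applying the helper twice with the same arguments leaves the policy unchanged, which makes it safe to repeat for each project.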

BigQuery data quality monitoring

BigQuery data quality monitoring provides quality metrics from your BigQuery tables (such as freshness and updates to row count and size). Explore the data from your tables in depth on the Data Quality Monitoring page.

To collect quality metrics, grant the BigQuery Metadata Viewer role to the Datadog service account on each BigQuery table you are using.

Note: BigQuery Metadata Viewer can be applied at the BigQuery table, dataset, project, or organization level.

- For data quality monitoring of all tables within a dataset, grant access at the dataset level.

- For data quality monitoring of all datasets within a project, grant access at the project level.

- Go to BigQuery.

- In the Explorer, search for the desired BigQuery resource.

- Click the three-dot menu next to the resource, then click Share -> Manage Permissions.

- Click ADD PRINCIPAL.

- In the new principals box, enter the Datadog service account set up for the Google Cloud integration.

- Assign the BigQuery Metadata Viewer role.

- Click SAVE.

- On the Google Cloud integration page in Datadog, click the BigQuery tab.

- Click the Enable Data Quality toggle.

BigQuery jobs log retention

Datadog recommends creating a new logs index called data-observability-queries and indexing your BigQuery job logs for 15 days. Use the following index filter to pull in the logs:

service:data-observability @platform:*
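For reference, the recommended index can be written down as a small definition. This is a sketch under assumptions: the helper name is hypothetical, and the field names name, filter.query, and num_retention_days are assumed to follow the shape of Datadog's Logs Indexes API, so verify them against the API reference before sending the payload anywhere.

```python
import json
from typing import Any, Dict


def bigquery_jobs_index(retention_days: int = 15) -> Dict[str, Any]:
    """Describe the recommended logs index as a dict (hypothetical helper)."""
    if retention_days <= 0:
        raise ValueError("retention_days must be positive")
    return {
        "name": "data-observability-queries",
        # Index filter taken verbatim from the recommendation above.
        "filter": {"query": "service:data-observability @platform:*"},
        "num_retention_days": retention_days,
    }


# Serialize the definition, for example to review it before creating the index.
index_json = json.dumps(bigquery_jobs_index(), indent=2)
```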

See the Log Management pricing page for cost estimates.

Resource changes collection

Join the Preview!

Resource changes collection is in Preview. To request access, use the attached form.

Select Enable Resource Collection in the Resource Collection tab of the Google Cloud integration page. This allows you to receive resource events in Datadog when Google's Cloud Asset Inventory detects changes in your cloud resources.

Then, follow the steps below to forward change events from a Pub/Sub topic to the Datadog Event Explorer.

Google Cloud CLI

Create a Cloud Pub/Sub topic and subscription

Create a topic

- On the Google Cloud Pub/Sub topics page, click CREATE TOPIC.

- Give the topic a descriptive name.

- Uncheck the option to add a default subscription.

- Click CREATE.

Create a subscription

- On the Google Cloud Pub/Sub subscriptions page, click CREATE SUBSCRIPTION.

- Enter export-asset-changes-to-datadog for the subscription name.

- Select the Cloud Pub/Sub topic created previously.

- Select Pull as the delivery type.

- Click CREATE.

Grant access

To read from this Pub/Sub subscription, the Google Cloud service account used by the integration needs the pubsub.subscriptions.consume permission on the subscription. A default role with minimal permissions that allows this is the Pub/Sub Subscriber role. Follow the steps below to grant this role:

- On the Google Cloud Pub/Sub subscriptions page, click the export-asset-changes-to-datadog subscription.

- In the info panel on the right of the page, click the Permissions tab. If you do not see the info panel, click SHOW INFO PANEL.

- Click ADD PRINCIPAL.

- Enter the email of the service account used by the Datadog Google Cloud integration. You can find a list of your service accounts on the left of the Configuration tab on the Google Cloud integration page in Datadog.

Create an asset feed

Run the command below in Cloud Shell or the gcloud CLI to create a Cloud Asset Inventory feed that sends change events to the Pub/Sub topic created above.

Project

gcloud asset feeds create <FEED_NAME> \
  --project=<PROJECT_ID> \
  --pubsub-topic=projects/<PROJECT_ID>/topics/<TOPIC_NAME> \
  --asset-names=<ASSET_NAMES> \
  --asset-types=<ASSET_TYPES> \
  --content-type=<CONTENT_TYPE>

Update the placeholder values as indicated:

- <FEED_NAME>: A descriptive name for the Cloud Asset Inventory feed.

- <PROJECT_ID>: Your Google Cloud project ID.

- <TOPIC_NAME>: The name of the Pub/Sub topic linked to the export-asset-changes-to-datadog subscription.

- <ASSET_NAMES>: Comma-separated list of full resource names to receive change events from. Optional if asset-types is specified.

- <ASSET_TYPES>: Comma-separated list of asset types to receive change events from. Optional if asset-names is specified.

- <CONTENT_TYPE>: Optional asset content type to receive change events from.

Folder

gcloud asset feeds create <FEED_NAME> \
  --folder=<FOLDER_ID> \
  --pubsub-topic=projects/<PROJECT_ID>/topics/<TOPIC_NAME> \
  --asset-names=<ASSET_NAMES> \
  --asset-types=<ASSET_TYPES> \
  --content-type=<CONTENT_TYPE>

Update the placeholder values as indicated:

- <FEED_NAME>: A descriptive name for the Cloud Asset Inventory feed.

- <FOLDER_ID>: Your Google Cloud folder ID.

- <TOPIC_NAME>: The name of the Pub/Sub topic linked to the export-asset-changes-to-datadog subscription.

- <ASSET_NAMES>: Comma-separated list of full resource names to receive change events from. Optional if asset-types is specified.

- <ASSET_TYPES>: Comma-separated list of asset types to receive change events from. Optional if asset-names is specified.

- <CONTENT_TYPE>: Optional asset content type to receive change events from.

Organization

gcloud asset feeds create <FEED_NAME> \
  --organization=<ORGANIZATION_ID> \
  --pubsub-topic=projects/<PROJECT_ID>/topics/<TOPIC_NAME> \
  --asset-names=<ASSET_NAMES> \
  --asset-types=<ASSET_TYPES> \
  --content-type=<CONTENT_TYPE>

Update the placeholder values as indicated:

- <FEED_NAME>: A descriptive name for the Cloud Asset Inventory feed.

- <ORGANIZATION_ID>: Your Google Cloud organization ID.

- <TOPIC_NAME>: The name of the Pub/Sub topic linked to the export-asset-changes-to-datadog subscription.

- <ASSET_NAMES>: Comma-separated list of full resource names to receive change events from. Optional if asset-types is specified.

- <ASSET_TYPES>: Comma-separated list of asset types to receive change events from. Optional if asset-names is specified.

- <CONTENT_TYPE>: Optional asset content type to receive change events from.

Terraform

Create an asset feed

Copy the following Terraform template and substitute the necessary arguments:

Project

locals {
  project_id = "<PROJECT_ID>"
}

resource "google_pubsub_topic" "pubsub_topic" {
  project = local.project_id
  name    = "<TOPIC_NAME>"
}

resource "google_pubsub_subscription" "pubsub_subscription" {
  project = local.project_id
  name    = "export-asset-changes-to-datadog"
  topic   = google_pubsub_topic.pubsub_topic.id
}

resource "google_pubsub_subscription_iam_member" "subscriber" {
  project      = local.project_id
  subscription = google_pubsub_subscription.pubsub_subscription.id
  role         = "roles/pubsub.subscriber"
  member       = "serviceAccount:<SERVICE_ACCOUNT_EMAIL>"
}

resource "google_cloud_asset_project_feed" "project_feed" {
  project      = local.project_id
  feed_id      = "<FEED_NAME>"
  content_type = "<CONTENT_TYPE>" # Optional. Remove if unused.

  asset_names = ["<ASSET_NAMES>"] # Optional if specifying asset_types. Remove if unused.
  asset_types = ["<ASSET_TYPES>"] # Optional if specifying asset_names. Remove if unused.

  feed_output_config {
    pubsub_destination {
      topic = google_pubsub_topic.pubsub_topic.id
    }
  }
}

Update the placeholder values as indicated:

- <PROJECT_ID>: Your Google Cloud project ID.

- <TOPIC_NAME>: The name of the Pub/Sub topic to be linked to the export-asset-changes-to-datadog subscription.

- <SERVICE_ACCOUNT_EMAIL>: The email of the service account used by the Datadog Google Cloud integration.

- <FEED_NAME>: A descriptive name for the Cloud Asset Inventory feed.

- <ASSET_NAMES>: Comma-separated list of full resource names to receive change events from. Optional if asset_types is specified.

- <ASSET_TYPES>: Comma-separated list of asset types to receive change events from. Optional if asset_names is specified.

- <CONTENT_TYPE>: Optional asset content type to receive change events from.

Folder

locals {
  project_id = "<PROJECT_ID>"
}

resource "google_pubsub_topic" "pubsub_topic" {
  project = local.project_id
  name    = "<TOPIC_NAME>"
}

resource "google_pubsub_subscription" "pubsub_subscription" {
  project = local.project_id
  name    = "export-asset-changes-to-datadog"
  topic   = google_pubsub_topic.pubsub_topic.id
}

resource "google_pubsub_subscription_iam_member" "subscriber" {
  project      = local.project_id
  subscription = google_pubsub_subscription.pubsub_subscription.id
  role         = "roles/pubsub.subscriber"
  member       = "serviceAccount:<SERVICE_ACCOUNT_EMAIL>"
}

resource "google_cloud_asset_folder_feed" "folder_feed" {
  billing_project = local.project_id
  folder          = "<FOLDER_ID>"
  feed_id         = "<FEED_NAME>"
  content_type    = "<CONTENT_TYPE>" # Optional. Remove if unused.

  asset_names = ["<ASSET_NAMES>"] # Optional if specifying asset_types. Remove if unused.
  asset_types = ["<ASSET_TYPES>"] # Optional if specifying asset_names. Remove if unused.

  feed_output_config {
    pubsub_destination {
      topic = google_pubsub_topic.pubsub_topic.id
    }
  }
}

Update the placeholder values as indicated:

- <PROJECT_ID>: Your Google Cloud project ID.

- <FOLDER_ID>: The ID of the folder this feed should be created in.

- <TOPIC_NAME>: The name of the Pub/Sub topic to be linked to the export-asset-changes-to-datadog subscription.

- <SERVICE_ACCOUNT_EMAIL>: The email of the service account used by the Datadog Google Cloud integration.

- <FEED_NAME>: A descriptive name for the Cloud Asset Inventory feed.

- <ASSET_NAMES>: Comma-separated list of full resource names to receive change events from. Optional if asset_types is specified.

- <ASSET_TYPES>: Comma-separated list of asset types to receive change events from. Optional if asset_names is specified.

- <CONTENT_TYPE>: Optional asset content type to receive change events from.

Organization

locals {
  project_id = "<PROJECT_ID>"
}

resource "google_pubsub_topic" "pubsub_topic" {
  project = local.project_id
  name    = "<TOPIC_NAME>"
}

resource "google_pubsub_subscription" "pubsub_subscription" {
  project = local.project_id
  name    = "export-asset-changes-to-datadog"
  topic   = google_pubsub_topic.pubsub_topic.id
}

resource "google_pubsub_subscription_iam_member" "subscriber" {
  project      = local.project_id
  subscription = google_pubsub_subscription.pubsub_subscription.id
  role         = "roles/pubsub.subscriber"
  member       = "serviceAccount:<SERVICE_ACCOUNT_EMAIL>"
}

resource "google_cloud_asset_organization_feed" "organization_feed" {
  billing_project = local.project_id
  org_id          = "<ORGANIZATION_ID>"
  feed_id         = "<FEED_NAME>"
  content_type    = "<CONTENT_TYPE>" # Optional. Remove if unused.

  asset_names = ["<ASSET_NAMES>"] # Optional if specifying asset_types. Remove if unused.
  asset_types = ["<ASSET_TYPES>"] # Optional if specifying asset_names. Remove if unused.

  feed_output_config {
    pubsub_destination {
      topic = google_pubsub_topic.pubsub_topic.id
    }
  }
}

Update the placeholder values as indicated:

- <PROJECT_ID>: Your Google Cloud project ID.

- <TOPIC_NAME>: The name of the Pub/Sub topic to be linked to the export-asset-changes-to-datadog subscription.

- <SERVICE_ACCOUNT_EMAIL>: The email of the service account used by the Datadog Google Cloud integration.

- <ORGANIZATION_ID>: Your Google Cloud organization ID.

- <FEED_NAME>: A descriptive name for the Cloud Asset Inventory feed.

- <ASSET_NAMES>: Comma-separated list of full resource names to receive change events from. Optional if asset_types is specified.

- <ASSET_TYPES>: Comma-separated list of asset types to receive change events from. Optional if asset_names is specified.

- <CONTENT_TYPE>: Optional asset content type to receive change events from.

Datadog recommends setting the asset-types parameter to the regular expression .* to collect changes for all resources.

Note: You must specify at least one value for either the asset-names or the asset-types parameter.

For the full list of configurable parameters, see the gcloud asset feeds create reference.
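The three gcloud variants above differ only in their scope flag, and all of them need at least one of asset-names or asset-types. The sketch below assembles the argument list and enforces that rule before anything is run. The function and its argument names are hypothetical; only the gcloud flags themselves come from the commands shown on this page.

```python
from typing import List, Optional, Sequence

# Scope flags used by the three command variants on this page.
SCOPE_FLAGS = {
    "project": "--project",
    "folder": "--folder",
    "organization": "--organization",
}


def build_feed_command(
    feed_name: str,
    scope: str,
    scope_id: str,
    topic_project_id: str,
    topic_name: str,
    asset_names: Sequence[str] = (),
    asset_types: Sequence[str] = (),
    content_type: Optional[str] = None,
) -> List[str]:
    """Build the argument list for `gcloud asset feeds create` (hypothetical helper)."""
    if scope not in SCOPE_FLAGS:
        raise ValueError(f"scope must be one of {sorted(SCOPE_FLAGS)}")
    if not asset_names and not asset_types:
        # Mirrors the note above: at least one of the two is required.
        raise ValueError("specify asset_names, asset_types, or both")

    cmd = [
        "gcloud", "asset", "feeds", "create", feed_name,
        f"{SCOPE_FLAGS[scope]}={scope_id}",
        f"--pubsub-topic=projects/{topic_project_id}/topics/{topic_name}",
    ]
    if asset_names:
        cmd.append("--asset-names=" + ",".join(asset_names))
    if asset_types:
        cmd.append("--asset-types=" + ",".join(asset_types))
    if content_type:
        cmd.append(f"--content-type={content_type}")
    return cmd
```

Returning a list rather than a single string keeps the regular expression .* from being expanded by a shell when the list is passed to a process runner such as subprocess.run.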

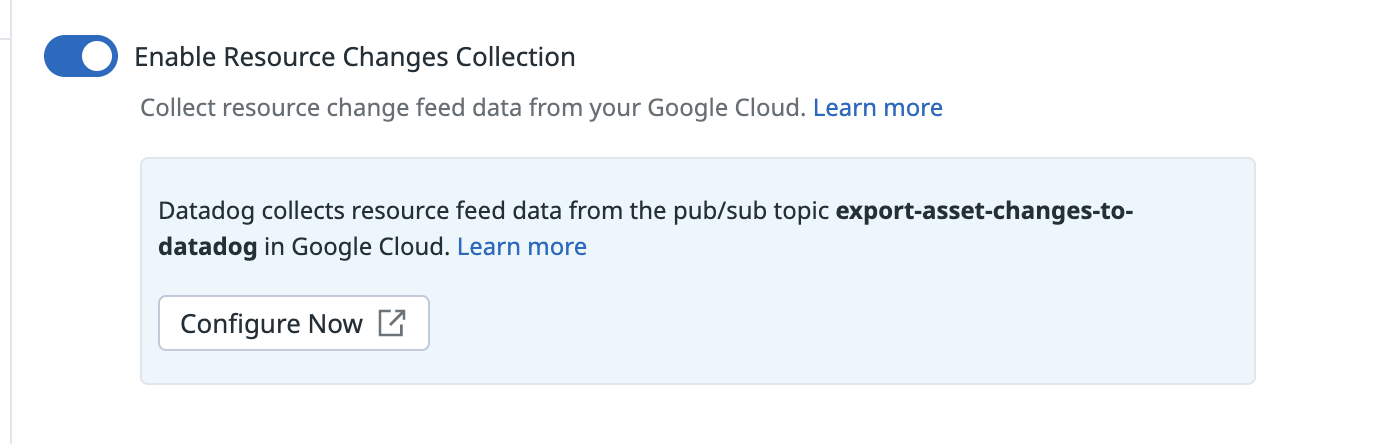

Enable resource changes collection

Click Enable Resource Changes Collection in the Resource Collection tab of the Google Cloud integration page.

Validation

Find your resource change events in the Datadog Event Explorer.

Private Service Connect

Private Service Connect is only available for the US5 and EU Datadog sites.

Use the Google Cloud Private Service Connect integration to visualize connections, data transferred, and dropped packets through Private Service Connect. This gives you visibility into important metrics from your Private Service Connect connections, for both producers and consumers. Private Service Connect (PSC) is a Google Cloud networking product that lets you access Google Cloud services, third-party partner services, and company-owned applications directly from your Virtual Private Cloud (VPC).

For more information, see Access Datadog privately and monitor your Google Cloud Private Service Connect usage on the Datadog blog.

Data collected

Metrics

See the individual Google Cloud integration pages for metrics.

Cumulative metrics

Cumulative metrics are imported into Datadog with a .delta metric for each metric name. A cumulative metric is a metric whose value constantly increases over time. For example, a metric for sent bytes might be cumulative. Each value records the total number of bytes sent by a service at that time. The delta value represents the change since the previous measurement.

For example:

gcp.gke.container.restart_count is a CUMULATIVE metric. When importing this metric as a cumulative metric, Datadog adds the gcp.gke.container.restart_count.delta metric, which includes the delta values (as opposed to the aggregate value emitted as part of the CUMULATIVE metric). For more information, see Google Cloud metric kinds.
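The relationship between a cumulative series and its delta values can be sketched in a few lines. This is an illustration of the arithmetic only, not Datadog's implementation; the function name is hypothetical, and the handling of a counter that drops (treated here as a reset to zero) is an assumption that this page does not confirm.

```python
from typing import List, Sequence


def to_deltas(samples: Sequence[float]) -> List[float]:
    """Turn consecutive cumulative samples into per-interval deltas (hypothetical helper)."""
    deltas = []
    for previous, current in zip(samples, samples[1:]):
        change = current - previous
        if change < 0:
            # Assumption: a decrease means the counter restarted from zero,
            # so the new value itself is the change for this interval.
            change = current
        deltas.append(change)
    return deltas


# A restart counter sampled four times: totals of 3, 3, 5, and 9 restarts.
restart_deltas = to_deltas([3, 3, 5, 9])  # [0, 2, 4]
```

The first sample has no predecessor, so a series of n cumulative values yields n - 1 deltas.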

Events

All service events generated by your Google Cloud Platform are forwarded to your Datadog Event Explorer.

Service checks

The Google Cloud Platform integration does not include any service checks.

Tags

Tags are automatically assigned based on a variety of Google Cloud Platform and Google Compute Engine configuration options. The project_id tag is added to all metrics. Additional tags are collected from Google Cloud Platform when available, and vary based on the metric type.

Additionally, Datadog collects the following as tags:

- Any hosts with <key>:<value> labels.

- Custom labels from Google Pub/Sub, GCE, Cloud SQL, and Cloud Storage.
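To make the label-to-tag mapping concrete, the sketch below turns a resource's labels into tag strings in the key:value form and adds the project_id tag. It only illustrates the shape of the result; the function name and the sorted ordering are assumptions, and any normalization Datadog applies to keys or values is not modeled.

```python
from typing import Dict, List


def labels_to_tags(project_id: str, labels: Dict[str, str]) -> List[str]:
    """Render Google Cloud labels as key:value tag strings (hypothetical helper)."""
    # project_id is added to all metrics, so it always comes first here.
    tags = ["project_id:" + project_id]
    # Sort by key so the output is stable; ordering is a choice of this sketch.
    for key, value in sorted(labels.items()):
        tags.append(key + ":" + value)
    return tags


example_tags = labels_to_tags("my-project", {"team": "data", "env": "prod"})
```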

Troubleshooting

Incorrect metadata for user-defined gcp.logging metrics?

For non-standard gcp.logging metrics, such as metrics beyond Datadog's out-of-the-box logging metrics, the metadata applied may not be consistent with Google Cloud Logging.

In these cases, set the metadata manually: go to the Metric Summary page, search for and select the metric in question, and click the pencil icon next to the metadata.

Need help? Contact Datadog support.

Further reading

Additional helpful documentation, links, and articles:

- Improve the compliance and security posture of your Google Cloud environment with Datadog

- Monitor Google Cloud Vertex AI with Datadog

- Monitor your Dataflow pipelines with Datadog

- Create and manage your Google Cloud integration with Terraform

- Monitor BigQuery with Datadog

- Troubleshoot infrastructure changes faster with Recent Changes in the Resource Catalog

- Stream logs from Google Cloud to Datadog