Set up Static Code Analysis (SAST)

Overview

To set up Datadog SAST in-app, navigate to Security > Code Security.

Select where to run Static Code Analysis scans

Scan with Datadog-hosted scanning

You can run Datadog Static Code Analysis (SAST) scans directly on Datadog infrastructure. Supported repository types include:

- GitHub (excluding repositories that use Git Large File Storage)

- GitLab.com and GitLab Self-Managed

- Azure DevOps

To get started, navigate to the Code Security page.

Scan in CI pipelines

Datadog Static Code Analysis runs in your CI pipelines using the datadog-ci CLI.

First, configure your Datadog API and application keys. Add DD_APP_KEY and DD_API_KEY as secrets. Please ensure your Datadog application key has the code_analysis_read scope.

Next, run Static Code Analysis by following the instructions for your CI provider.

Select your source code management provider

Datadog Static Code Analysis supports all source code management providers, with native support for GitHub, GitLab, and Azure DevOps.

Configure a GitHub App with the GitHub integration tile and set up the source code integration to enable inline code snippets and pull request comments.

When installing a GitHub App, the following permissions are required to enable certain features:

- Content: Read, which allows you to see code snippets displayed in Datadog

- Pull Request: Read & Write, which allows Datadog to add feedback for violations directly in your pull requests using pull request comments, as well as open pull requests to fix vulnerabilities

- Checks: Read & Write, which allows you to create checks on SAST violations to block pull requests

See the GitLab source code setup instructions to connect GitLab repositories to Datadog. Both GitLab.com and Self-Managed instances are supported.

Note: Your Azure DevOps integrations must be connected to a Microsoft Entra tenant. Azure DevOps Server is not supported.

See the Azure source code setup instructions to connect Azure DevOps repositories to Datadog.

If you are using another source code management provider, configure Static Code Analysis to run in your CI pipelines using the datadog-ci CLI tool and upload the results to Datadog.

You must run an analysis of your repository on the default branch before results can begin appearing on the Code Security page.

Customize your configuration

By default, Datadog Static Code Analysis (SAST) scans your repositories with Datadog’s default rulesets for your programming language(s). You can customize which rulesets or rules to run or ignore, in addition to other parameters. You can customize these settings locally in your repository or within the Datadog App.

Configuration locations

Datadog Static Code Analysis (SAST) can be configured within Datadog and/or by using a file within your repository’s root directory.

There are three levels of configuration:

- Org-level configuration (Datadog)

- Repository-level configuration (Datadog)

- Repository-level configuration (repo file)

All three configurations use the same YAML format, and they are merged in order (see Configuration precedence and merging).

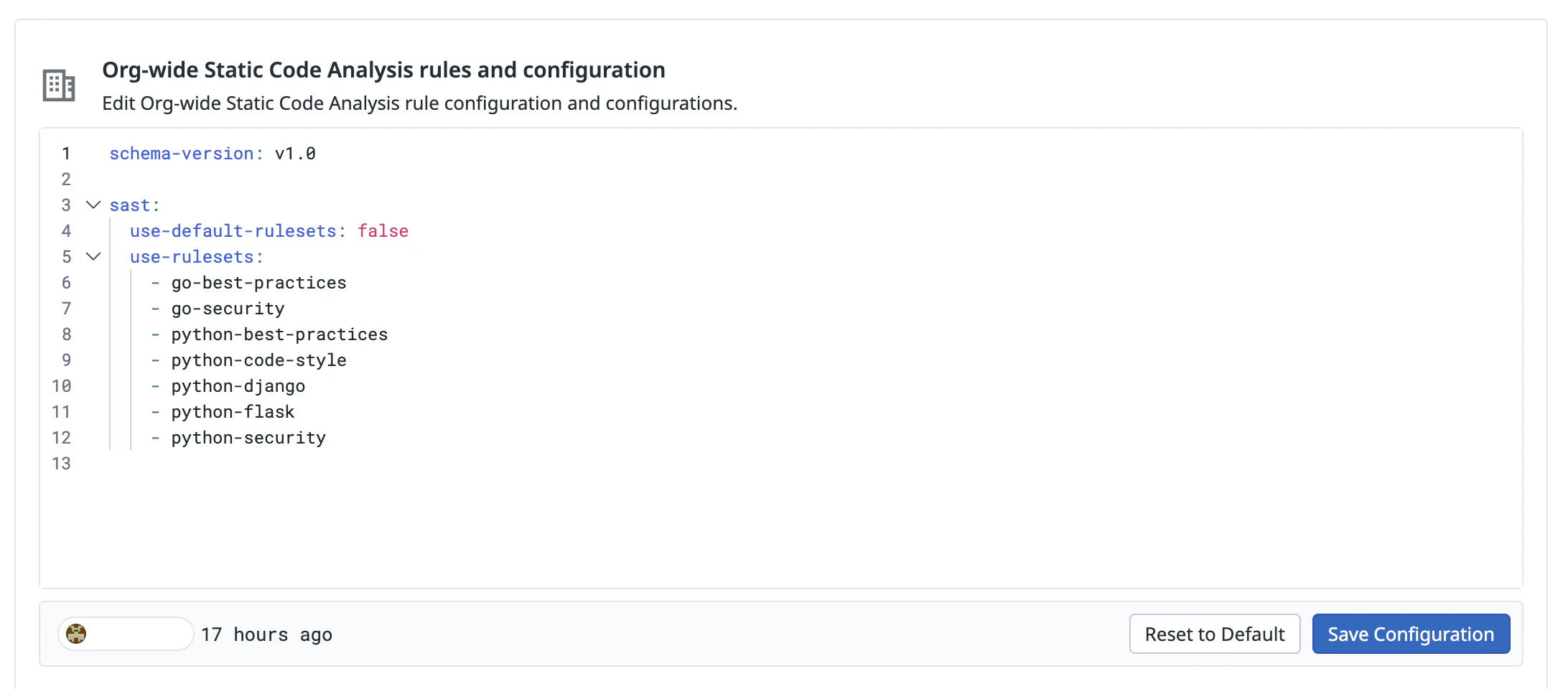

Org-level configuration

Use org-level configuration to define rules that apply to all repositories and specify global paths or files to ignore.

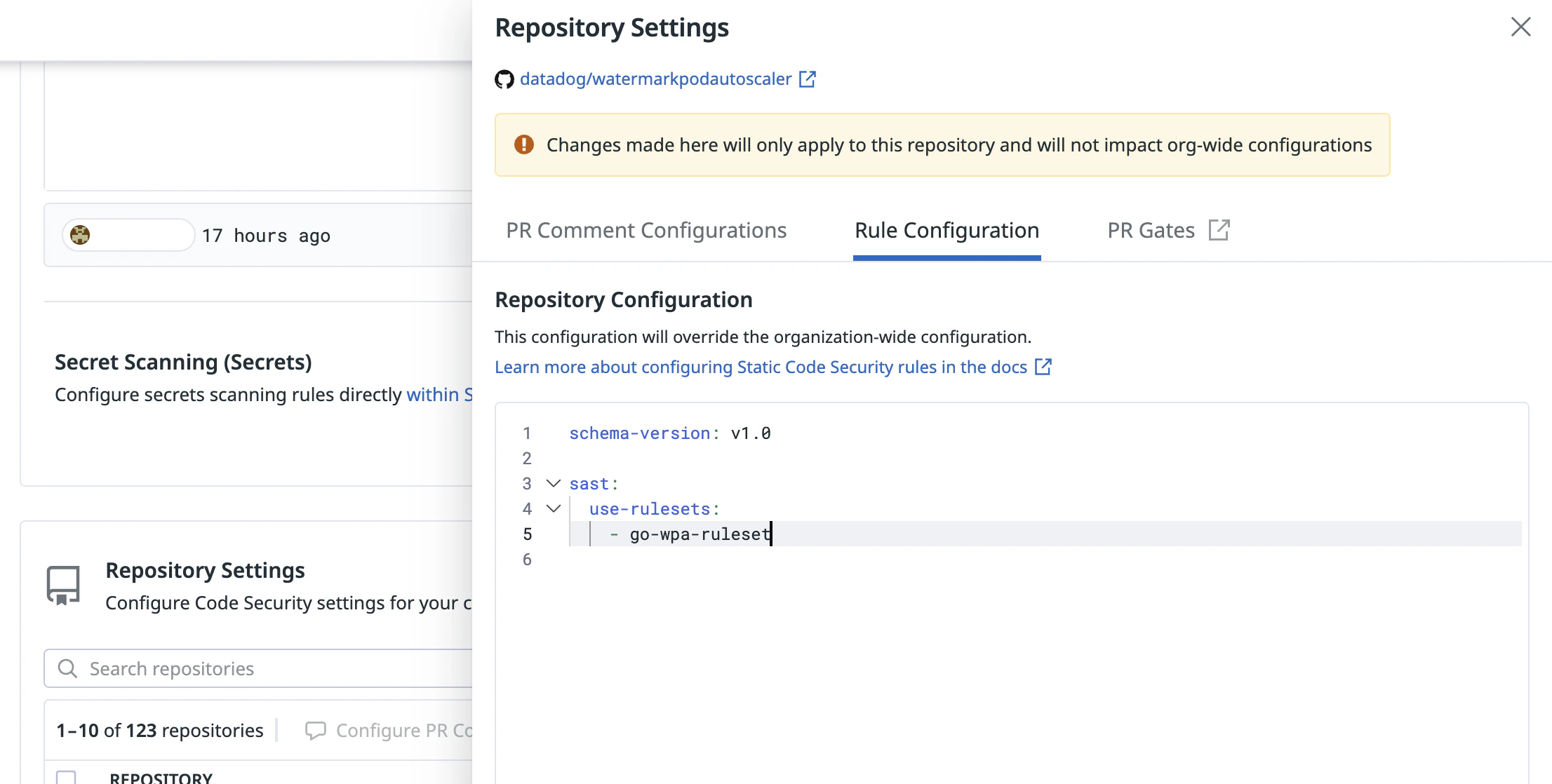

Repository-level configuration

Configurations at the repository level apply only to the repository selected. These configurations are merged with the org configuration, with the repository configuration taking precedence. Use repository-level configuration to define overrides for repository-specific details or add rules specific to that repository.

Repository-level configuration (file)

You can also define configuration in a code-security.datadog.yaml file at the root of your repo. This file takes precedence over the repository-level configuration defined in Datadog. Use this file to customize rule configurations and iterate on setup and testing.

Default rulesets

By default, Datadog enables the default rulesets for your repository’s programming languages (use-default-rulesets: true). To modify the enabled rulesets:

- Add rulesets: List them under use-rulesets

- Disable specific rulesets: List them under ignore-rulesets

- Disable all default rulesets: Set use-default-rulesets: false, then list the desired rulesets under use-rulesets

For the full list of default rulesets, see Static Code Analysis (SAST) Rules.
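The interaction between the three keys can be sketched in a few lines of Python. This is an illustration of the rules described above, not Datadog's implementation; the function name `effective_rulesets` and the `default_rulesets` argument (standing in for whatever defaults Datadog selects for a repository) are assumptions made for the example.

```python
def effective_rulesets(config, default_rulesets):
    """Return the rulesets a scan would run, given a parsed configuration.

    `default_rulesets` is a hypothetical stand-in for the defaults Datadog
    picks for the repository's languages.
    """
    sast = config.get("sast", {})
    enabled = []
    # use-default-rulesets is treated as true when the key is absent.
    if sast.get("use-default-rulesets", True):
        enabled.extend(default_rulesets)
    # Rulesets listed under use-rulesets are added on top of the defaults.
    for name in sast.get("use-rulesets", []):
        if name not in enabled:
            enabled.append(name)
    # ignore-rulesets wins over both the defaults and use-rulesets.
    ignored = set(sast.get("ignore-rulesets", []))
    return [name for name in enabled if name not in ignored]
```

For instance, with hypothetical defaults `["python-security", "python-best-practices"]`, a configuration that adds `python-django` and ignores `python-best-practices` yields `["python-security", "python-django"]`.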

Configuration precedence and merging

Configurations are merged in the following order, where each level overrides the one before it:

- Org-level (Datadog)

- Repo-level (Datadog)

- Repo-level file (code-security.datadog.yaml)

The following merge rules apply:

- Lists (use-rulesets, ignore-rulesets, ignore-paths, only-paths): Concatenated, with duplicates removed

- Scalar values (use-default-rulesets, use-gitignore, ignore-generated-files, max-file-size-kb, category, and per-path entries for severity and arguments): The value from the highest-precedence configuration is used

- Maps (ruleset-configs, rule-configs, arguments): Recursively merged

The following example shows how configurations are merged:

Org-level:

    schema-version: v1.0
    sast:
      use-default-rulesets: false
      use-rulesets:
        - A
      ruleset-configs:
        A:
          rule-configs:
            foo:
              ignore-paths:
                - "path/to/ignore"
              arguments:
                maxCount: 10

Repo-level:

    schema-version: v1.0
    sast:
      use-rulesets:
        - B
      ignore-rulesets:
        - C
      ruleset-configs:
        A:
          rule-configs:
            foo:
              arguments:
                maxCount: 22
            bar:
              only-paths:
                - "src"

Merged result:

    schema-version: v1.0
    sast:
      use-default-rulesets: false
      use-rulesets:
        - A
        - B
      ignore-rulesets:
        - C
      ruleset-configs:
        A:
          rule-configs:
            foo:
              ignore-paths:
                - "path/to/ignore"
              arguments:
                maxCount: 22
            bar:
              only-paths:
                - "src"

The maxCount: 22 value from the repo-level configuration overrides the maxCount: 10 value from the org-level configuration because repo-level settings have higher precedence. All other fields from the org-level configuration are retained because they are not overridden.
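The merge rules can be approximated with a short recursive function. The sketch below is a simplified model of the behavior described in this section, not the code Datadog runs: it treats every dictionary as a map, every list as a list, and everything else as a scalar. The name `merge_configs` is made up for the example.

```python
from typing import Any


def merge_configs(base: dict[str, Any], override: dict[str, Any]) -> dict[str, Any]:
    """Merge `override` (higher precedence) into `base` (lower precedence)."""
    merged = dict(base)
    for key, value in override.items():
        current = merged.get(key)
        if isinstance(current, dict) and isinstance(value, dict):
            # Maps are merged recursively.
            merged[key] = merge_configs(current, value)
        elif isinstance(current, list) and isinstance(value, list):
            # Lists are concatenated, keeping the first occurrence of each item.
            combined = []
            for item in current + value:
                if item not in combined:
                    combined.append(item)
            merged[key] = combined
        else:
            # Scalars (and keys missing from the base) take the override's value.
            merged[key] = value
    return merged
```

Applying the function in precedence order, for example `merge_configs(merge_configs(org, repo), repo_file)`, reproduces the merged result shown above when `org` and `repo` hold the two parsed example configurations.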

Configuration format

The following configuration format applies to all configuration locations: org-level, repository-level, and repository-level (file).

The configuration file must begin with schema-version: v1.0, followed by a sast key containing the analysis configuration. The full structure is as follows:

    schema-version: v1.0
    sast:
      use-default-rulesets: true
      use-rulesets:
        - ruleset-name
      ignore-rulesets:
        # Always ignore these rulesets (even if it is a default ruleset or listed in `use-rulesets`)
        - ignored-ruleset-name
      ruleset-configs:
        ruleset-name:
          # Only apply this ruleset to the following paths/files
          only-paths:
            - "path/example"
            - "**/*.file"
          # Do not apply this ruleset in the following paths/files
          ignore-paths:
            - "path/example/directory"
            - "**/config.file"
          rule-configs:
            rule-name:
              # Only apply this rule to the following paths/files
              only-paths:
                - "path/example"
                - "**/*.file"
              # Do not apply this rule to the following paths/files
              ignore-paths:
                - "path/example/directory"
                - "**/config.file"
              arguments:
                # Set the rule's argument to value.
                argument-name: value
              severity: ERROR
              category: CODE_STYLE
            rule-name:
              arguments:
                # Set different argument values in different subtrees
                argument-name:
                  # Set the rule's argument to value_1 by default (root path of the repo)
                  /: value_1
                  # Set the rule's argument to value_2 for specific paths
                  path/example: value_2
      global-config:
        # Only analyze the following paths/files
        only-paths:
          - "path/example"
          - "**/*.file"
        # Do not analyze the following paths/files
        ignore-paths:
          - "path/example/directory"
          - "**/config.file"
        use-gitignore: true
        ignore-generated-files: true
        max-file-size-kb: 200

The sast key supports the following fields:

| Property | Type | Description | Default |
|---|---|---|---|
| use-default-rulesets | Boolean | Whether to enable Datadog default rulesets. | true |
| use-rulesets | Array | A list of ruleset names to enable. | None |
| ignore-rulesets | Array | A list of ruleset names to disable. Takes precedence over use-rulesets and use-default-rulesets. | None |
| ruleset-configs | Object | A map from ruleset name to its configuration. | None |
| global-config | Object | Global settings for the repository. | None |

Ruleset configuration

Each entry in the ruleset-configs map configures a specific ruleset. A ruleset does not need to be listed in use-rulesets for its configuration to apply; the configuration is used whenever the ruleset is enabled, including through use-default-rulesets.

| Property | Type | Description | Default |
|---|---|---|---|
| only-paths | Array | File paths or glob patterns. Only files matching these patterns are processed for this ruleset. | None |
| ignore-paths | Array | File paths or glob patterns to exclude from analysis for this ruleset. | None |
| rule-configs | Object | A map from rule name to its configuration. | None |

Rule configuration

Each entry in a ruleset’s rule-configs map configures a specific rule:

| Property | Type | Description | Default |
|---|---|---|---|
| only-paths | Array | File paths or glob patterns. The rule is applied only to files matching these patterns. | None |
| ignore-paths | Array | File paths or glob patterns to exclude. The rule is not applied to files matching these patterns. | None |
| arguments | Object | Parameters and values for the rule. Values can be scalars or defined per path. | None |
| severity | String or Object | The rule severity. Valid values: ERROR, WARNING, NOTICE, NONE. Can be a single value or defined per path. | None |
| category | String | The rule category. Valid values: BEST_PRACTICES, CODE_STYLE, ERROR_PRONE, PERFORMANCE, SECURITY. | None |

Argument and severity configuration

Arguments and severity can be defined in one of two formats:

Single value: Applies to the whole repository.

    arguments:
      argument-name: value
    severity: ERROR

Per-path mapping: Different values for different subtrees. The longest matching path prefix applies. Use / as a catch-all default.

    arguments:
      argument-name:
        /: value_default
        path/example: value_specific
    severity:
      /: WARNING
      path/example: ERROR

| Key | Type | Description | Default |
|---|---|---|---|
| / | Any | The default value when no specific path is matched. | None |
| specific path | Any | The value for files matching the specified path or glob pattern. | None |

The category field takes a single string value for the whole repository.
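To make the "longest matching path prefix" rule concrete, here is a minimal Python sketch that resolves a severity or argument value for a given file. It is a simplified model: it compares whole path segments only and does not handle glob patterns. The function name `resolve_for_path` is hypothetical.

```python
def resolve_for_path(setting, file_path):
    """Resolve a single-value or per-path setting for `file_path`.

    `setting` is either a scalar (applies everywhere) or a dict mapping
    path prefixes to values, with "/" as the catch-all default.
    """
    if not isinstance(setting, dict):
        return setting
    normalized = file_path.strip("/")
    best_key, best_length = None, -1
    for prefix in setting:
        candidate = prefix.strip("/")
        matches = (
            candidate == ""  # "/" matches every file
            or normalized == candidate
            or normalized.startswith(candidate + "/")
        )
        # Keep the longest prefix that matches.
        if matches and len(candidate) > best_length:
            best_key, best_length = prefix, len(candidate)
    return setting[best_key] if best_key is not None else None
```

With `{"/": "NOTICE", "tests": "NONE"}`, a file at `tests/test_api.py` resolves to `NONE`, while `src/app.py` falls back to `NOTICE`.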

Global configuration

The global-config object controls repository-wide settings:

| Property | Type | Description | Default |
|---|---|---|---|
| only-paths | Array | File paths or glob patterns. Only matching files are analyzed. | None |
| ignore-paths | Array | File paths or glob patterns to exclude. Matching files are not analyzed. | None |
| use-gitignore | Boolean | Whether to include entries from the .gitignore file in ignore-paths. | true |
| ignore-generated-files | Boolean | Whether to include common generated file patterns in ignore-paths. | true |
| max-file-size-kb | Number | Maximum file size (in kB) to analyze. Larger files are ignored. | 200 |

Example configuration:

    schema-version: v1.0
    sast:
      use-default-rulesets: false
      use-rulesets:
        - python-best-practices
        - python-security
        - python-code-style
        - python-inclusive
        - python-django
        - custom-python-ruleset
      ruleset-configs:
        python-code-style:
          rule-configs:
            max-function-lines:
              # Do not apply the rule max-function-lines to the following files
              ignore-paths:
                - "src/main/util/process.py"
                - "src/main/util/datetime.py"
              arguments:
                # Set the max-function-lines rule's threshold to 150 lines
                max-lines: 150
              # Override this rule's severity
              severity: NOTICE
            max-class-lines:
              arguments:
                # Set different thresholds for the max-class-lines rule in different subtrees
                max-lines:
                  # Set the rule's threshold to 200 lines by default (root path of the repo)
                  /: 200
                  # Set the rule's threshold to 100 lines in src/main/backend
                  src/main/backend: 100
              # Override this rule's severity with different values in different subtrees
              severity:
                # Set the rule's severity to NOTICE by default
                /: NOTICE
                # Set the rule's severity to NONE in tests/
                tests: NONE
        python-django:
          # Only apply the python-django ruleset to the following paths
          only-paths:
            - "src/main/backend"
            - "src/main/django"
          # Do not apply the python-django ruleset in files matching the following pattern
          ignore-paths:
            - "src/main/backend/util/*.py"
      global-config:
        # Only analyze source files
        only-paths:
          - "src/main"
          - "src/tests"
          - "**/*.py"
        # Do not analyze third-party files
        ignore-paths:
          - "lib/third_party"

Legacy configuration

Datadog Static Code Analysis (SAST) previously used a different configuration file (static-analysis.datadog.yml) and schema. This schema is deprecated and does not receive new updates, but it is documented in the datadog-static-analyzer repository.

If both files are present, code-security.datadog.yaml takes precedence over static-analysis.datadog.yml.

Ignoring violations

Ignore for a repository

Add a rule configuration in your code-security.datadog.yaml file. The following example ignores the rule javascript-express/reduce-server-fingerprinting for all directories.

    schema-version: v1.0
    sast:
      ruleset-configs:
        javascript-express:
          rule-configs:
            reduce-server-fingerprinting:
              ignore-paths:
                - "**"

Ignore for a file or directory

Add a rule configuration in your code-security.datadog.yaml file. The following example ignores the rule javascript-express/reduce-server-fingerprinting for a specific file. For more information on how to ignore by path, see Customize your configuration.

    schema-version: v1.0
    sast:
      ruleset-configs:
        javascript-express:
          rule-configs:
            reduce-server-fingerprinting:
              ignore-paths:
                - "ad-server/src/app.js"

Ignore for a specific instance

To ignore a specific instance of a violation, comment no-dd-sa above the line of code to ignore. This prevents that line from ever producing a violation. For example, in the following Python code snippet, the line foo = 1 would be ignored by Static Code Analysis scans.

#no-dd-sa

foo = 1

bar = 2

You can also use no-dd-sa to only ignore a particular rule rather than ignoring all rules. To do so, specify the name of the rule you wish to ignore in place of <rule-name> using this template:

no-dd-sa:<rule-name>

For example, in the following JavaScript code snippet, the line my_foo = 1 is analyzed by all rules except for the javascript-code-style/assignment-name rule, which tells the developer to use camelCase instead of snake_case.

// no-dd-sa:javascript-code-style/assignment-name

my_foo = 1

myBar = 2
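The two comment forms can be modeled with a small Python helper. This is only an illustration of the behavior described above, not how the Datadog analyzer parses comments; in particular, it looks for the `no-dd-sa` marker anywhere on the preceding line rather than checking that it sits inside a comment. The helper name `is_suppressed` is hypothetical.

```python
import re

# Matches "no-dd-sa" optionally followed by ":<ruleset>/<rule>".
NO_DD_SA = re.compile(r"no-dd-sa(?::(?P<rule>[\w/-]+))?")


def is_suppressed(lines, index, rule):
    """Return True if the line above lines[index] suppresses `rule`."""
    if index == 0:
        return False
    match = NO_DD_SA.search(lines[index - 1])
    if match is None:
        return False
    named_rule = match.group("rule")
    # A bare no-dd-sa suppresses every rule; otherwise only the named rule.
    return named_rule is None or named_rule == rule
```

For the JavaScript snippet above, `is_suppressed(lines, 1, "javascript-code-style/assignment-name")` is true for `my_foo = 1`, while any other rule name still applies to that line.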

Link findings to Datadog services and teams

Datadog associates code and library scan results with Datadog services and teams to automatically route findings to the appropriate owners. This enables service-level visibility, ownership-based workflows, and faster remediation.

To determine the service where a vulnerability belongs, Datadog evaluates several mapping mechanisms in the order listed in this section.

Each vulnerability is mapped with one method only: if a mapping mechanism succeeds for a particular finding, Datadog does not attempt the remaining mechanisms for that finding.

Using service definitions that include code locations in the Software Catalog is the only way to explicitly control how static findings are mapped to services. The additional mechanisms described below, such as Error Tracking usage patterns and naming-based inference, are not user-configurable and depend on existing data from other Datadog products. Consequently, these mechanisms might not provide consistent mappings for organizations not using these products.

Mapping using the Software Catalog (recommended)

Services in the Software Catalog identify their codebase content using the codeLocations field. This field is available in the Software Catalog schema version v3 and allows a service to specify:

- a repository URL

    apiVersion: v3
    kind: service
    metadata:
      name: billing-service
      owner: billing-team
    datadog:
      codeLocations:
        - repositoryURL: https://github.com/org/myrepo.git

- one or more code paths inside that repository

    apiVersion: v3
    kind: service
    metadata:
      name: billing-service
      owner: billing-team
    datadog:
      codeLocations:
        - repositoryURL: https://github.com/org/myrepo.git
          paths:
            - path/to/service/code/**

If you want all the files in a repository to be associated with a service, you can use the glob ** as follows:

    apiVersion: v3
    kind: service
    metadata:
      name: billing-service
      owner: billing-team
    datadog:
      codeLocations:
        - repositoryURL: https://github.com/org/myrepo.git
          paths:
            - path/to/service/code/**
        - repositoryURL: https://github.com/org/billing-service.git
          paths:
            - "**"

The schema for this field is described in the Software Catalog entity model.

Datadog goes through all Software Catalog definitions and checks whether the finding’s file path matches. For a finding to be mapped to a service through codeLocations, it must contain a file path.

Some findings might not contain a file path. In those cases, Datadog cannot evaluate codeLocations for that finding, and this mechanism is skipped.

Services defined with a Software Catalog schema v2.x do not support codeLocations. Existing definitions can be upgraded to the v3 schema in the Software Catalog. After migration is completed, changes might take up to 24 hours to apply to findings. If you are unable to upgrade to v3, Datadog falls back to alternative linking techniques (described below). These rely on less precise heuristics, so accuracy might vary depending on the Code Security product and your use of other Datadog features.

Example (v3 schema)

    apiVersion: v3
    kind: service
    metadata:
      name: billing-service
      owner: billing-team
    datadog:
      codeLocations:
        - repositoryURL: https://github.com/org/myrepo.git
          paths:
            - path/to/service/code/**
        - repositoryURL: https://github.com/org/billing-service.git
          paths:
            - "**"

SAST finding

If a vulnerability appeared in github.com/org/myrepo at /src/billing/models/payment.py, then using the codeLocations for billing-service Datadog would add billing-service as an owning service. If your service defines an owner (see above), then Datadog links that team to the finding too. In this case, the finding would be linked to the billing-team.

SCA finding

If a library was declared in github.com/org/myrepo at /go.mod, then Datadog would not match it to billing-service.

Instead, if it was declared in github.com/org/billing-service at /go.mod, then Datadog would match it to billing-service due to the “**” catch-all glob. Consequently, Datadog would link the finding to the billing-team.

Datadog attempts to map a single finding to as many services as possible. If no matches are found, Datadog continues onto the next linking method.
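The codeLocations lookup can be pictured with the following Python sketch. It rests on several assumptions that the text above does not confirm: repository URLs are compared after stripping the scheme and a trailing `.git`, globs are evaluated with Python's `fnmatch` semantics (where `*` also crosses `/`), and a location without `paths` covers the whole repository. The function names are hypothetical.

```python
from fnmatch import fnmatchcase


def normalize_repo(url):
    """Reduce a repository URL to host/org/name form for comparison."""
    url = url.strip().rstrip("/")
    for scheme in ("https://", "http://"):
        if url.startswith(scheme):
            url = url[len(scheme):]
    if url.endswith(".git"):
        url = url[: -len(".git")]
    return url.lower()


def matching_services(services, repository, file_path):
    """Return the names of services whose codeLocations cover a finding."""
    if not file_path:
        return []  # findings without a file path skip this mechanism
    relative = file_path.lstrip("/")
    repo = normalize_repo(repository)
    matches = []
    for service in services:
        for location in service["datadog"]["codeLocations"]:
            if normalize_repo(location["repositoryURL"]) != repo:
                continue
            # Assumption: no `paths` key means the whole repository.
            patterns = location.get("paths", ["**"])
            if any(fnmatchcase(relative, pattern) for pattern in patterns):
                matches.append(service["metadata"]["name"])
                break
    return matches
```

Because the function returns every matching service rather than the first one, it mirrors the statement that a single finding is mapped to as many services as possible.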

When the Software Catalog cannot determine the service

If the Software Catalog does not provide a match, either because the finding’s file path does not match any codeLocations, or because the service uses the v2.x schema, Datadog evaluates whether Error Tracking can identify the service associated with the code. Datadog uses only the last 30 days of Error Tracking data due to product data-retention limits.

When Error Tracking processes stack traces, the traces often include file paths.

For example, if an error occurs in: /foo/bar/baz.py, Datadog inspects the directory: /foo/bar. Datadog then checks whether the finding’s file path resides under that directory.

If the finding file is under the same directory:

- Datadog treats this as a strong indication that the vulnerability belongs to the same service.

- The finding inherits the service and team associated with that error in Error Tracking.

If this mapping succeeds, Datadog stops here.
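The directory check can be expressed in a few lines of Python. This sketch assumes that "resides under that directory" includes nested subdirectories, which the description above does not spell out; the function name `shares_error_directory` is hypothetical.

```python
from pathlib import PurePosixPath


def shares_error_directory(error_file, finding_file):
    """Return True if `finding_file` lives under the directory of `error_file`."""
    error_directory = PurePosixPath(error_file).parent
    # `parents` lists every ancestor directory of the finding's file.
    return error_directory in PurePosixPath(finding_file).parents
```

With an error in `/foo/bar/baz.py`, a finding in `/foo/bar/qux.py` matches, while a finding in `/foo/other.py` does not.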

Service inference from file paths or repository names

When neither of the above strategies can determine the service, Datadog inspects naming patterns in the repository and file paths.

Datadog evaluates whether:

- The file path contains identifiers matching a known service.

- The repository name corresponds to a service name.

When using the finding’s file path, Datadog performs a reverse search on each path segment until it finds a matching service or exhausts all options.

For example, if a finding occurs in github.com/org/checkout-service at /foo/bar/baz/main.go, Datadog takes the last path segment, main, and sees if any Software Catalog service uses that name. If there is a match, the finding is attributed to that service. If not, the process continues with baz, then bar, and so on.

When all options have been tried, Datadog checks whether the repository name, checkout-service, matches a Software Catalog service name. If no match is found, Datadog is unsuccessful at linking your finding using Software Catalog.

This mechanism ensures that findings receive meaningful service attribution when no explicit metadata exists.
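The reverse search over path segments, followed by the repository-name fallback, might look like the sketch below. It assumes exact, case-sensitive name matches and that the file extension is dropped from the last segment (as in the `main.go` to `main` example); the function name `infer_service` is hypothetical.

```python
from pathlib import PurePosixPath


def infer_service(repository, file_path, known_services):
    """Guess the owning service from path segments, then the repository name."""
    segments = [part for part in PurePosixPath(file_path).parts if part != "/"]
    if segments:
        # Drop the extension from the file name: "main.go" becomes "main".
        segments[-1] = PurePosixPath(segments[-1]).stem
    # Walk from the deepest segment up to the repository root.
    for segment in reversed(segments):
        if segment in known_services:
            return segment
    repository_name = repository.rstrip("/").rsplit("/", 1)[-1]
    if repository_name in known_services:
        return repository_name
    return None
```

For a finding at `/foo/bar/baz/main.go` in `github.com/org/checkout-service`, the candidates are tried in the order `main`, `baz`, `bar`, `foo`, and finally `checkout-service`.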

Link findings to teams through Code Owners

If Datadog is able to link your finding to a service using the above strategies, then the team that owns that service (if defined) is associated with that finding automatically.

Regardless of whether Datadog successfully links a finding to a service (and a Datadog team), Datadog uses the CODEOWNERS information from your finding’s repository to link Datadog and GitHub teams to your findings.

You must accurately map your Git provider teams to your Datadog Teams for team attribution to function properly.

Diff-aware scanning

Diff-aware scanning enables Datadog’s static analyzer to only scan the files modified by a commit in a feature branch. It accelerates scan time significantly by not having the analysis run on every file in the repository for every scan. To enable diff-aware scanning in your CI pipeline, follow these steps:

- Make sure your DD_APP_KEY, DD_SITE and DD_API_KEY variables are set in your CI pipeline.

- Add a call to datadog-ci git-metadata upload before invoking the static analyzer. This command ensures that Git metadata is available to the Datadog backend. Git metadata is required to calculate the number of files to analyze.

- Ensure that the datadog-static-analyzer is invoked with the flag --diff-aware.

Example of commands sequence (these commands must be invoked in your Git repository):

datadog-ci git-metadata upload

datadog-static-analyzer -i /path/to/directory -g -o sarif.json -f sarif --diff-aware <...other-options...>

Note: When a diff-aware scan cannot be completed, the entire directory is scanned.
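If your pipeline is driven by a script, the steps above can be wrapped in a small Python helper that checks the environment first and then returns the two commands in order. The commands and flags are the ones shown above; the helper name `diff_aware_commands` and the decision to fail fast on missing variables are choices made for this sketch.

```python
import os

REQUIRED_VARIABLES = ("DD_APP_KEY", "DD_API_KEY", "DD_SITE")


def diff_aware_commands(directory, environ=None):
    """Return the commands for a diff-aware scan, in execution order."""
    environ = os.environ if environ is None else environ
    missing = [name for name in REQUIRED_VARIABLES if not environ.get(name)]
    if missing:
        raise RuntimeError("missing variables: " + ", ".join(missing))
    return [
        # Upload Git metadata first so the backend can compute the diff.
        ["datadog-ci", "git-metadata", "upload"],
        # Then run the analyzer with diff-aware scanning enabled.
        ["datadog-static-analyzer", "-i", directory, "-g",
         "-o", "sarif.json", "-f", "sarif", "--diff-aware"],
    ]
```

Each returned command can be passed to `subprocess.run(command, check=True)` from the root of the Git repository.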

Upload third-party static analysis results to Datadog

SARIF importing has been tested for Snyk, CodeQL, Semgrep, Gitleaks, and Sysdig. Reach out to Datadog Support if you experience any issues with other SARIF-compliant tools.

You can send results from third-party static analysis tools to Datadog, provided they are in the interoperable Static Analysis Results Interchange Format (SARIF). Node.js version 14 or later is required.

To upload a SARIF report:

- Ensure the DD_API_KEY and DD_APP_KEY variables are defined.

- Optionally, set a DD_SITE variable (this defaults to datadoghq.com).

- Install the datadog-ci utility:

npm install -g @datadog/datadog-ci

- Run the third-party static analysis tool on your code and output the results in the SARIF format.

- Upload the results to Datadog:

datadog-ci sarif upload $OUTPUT_LOCATION

SARIF Support Guidelines

Datadog supports ingestion of third-party SARIF files that are compliant with the 2.1.0 SARIF schema. The SARIF schema is used differently by static analyzer tools. If you want to send third-party SARIF files to Datadog, please ensure they comply with the following details:

- The violation location is specified through the physicalLocation object of a result.

  - The artifactLocation and its uri must be relative to the repository root.

  - The region object is the part of the code highlighted in the Datadog UI.

- The partialFingerprints object is used to uniquely identify a finding across a repository.

- The properties and tags fields add more information:

  - The tag DATADOG_CATEGORY specifies the category of the finding. Acceptable values are SECURITY, PERFORMANCE, CODE_STYLE, BEST_PRACTICES, ERROR_PRONE.

  - The violations annotated with the category SECURITY are surfaced in the Vulnerabilities explorer and the Security tab of the repository view.

- The tool section must have a valid driver section with name and version attributes.

The following is an example of a SARIF file processed by Datadog:

{

"runs": [

{

"results": [

{

"level": "error",

"locations": [

{

"physicalLocation": {

"artifactLocation": {

"uri": "missing_timeout.py"

},

"region": {

"endColumn": 76,

"endLine": 6,

"startColumn": 25,

"startLine": 6

}

}

}

],

"message": {

"text": "timeout not defined"

},

"partialFingerprints": {

"DATADOG_FINGERPRINT": "b45eb11285f5e2ae08598cb8e5903c0ad2b3d68eaa864f3a6f17eb4a3b4a25da"

},

"properties": {

"tags": [

"DATADOG_CATEGORY:SECURITY",

"CWE:1088"

]

},

"ruleId": "python-security/requests-timeout",

"ruleIndex": 0

}

],

"tool": {

"driver": {

"informationUri": "https://www.datadoghq.com",

"name": "<tool-name>",

"rules": [

{

"fullDescription": {

"text": "Access to remote resources should always use a timeout and appropriately handle the timeout and recovery. When using `requests.get`, `requests.put`, `requests.patch`, etc. - we should always use a `timeout` as an argument.\n\n#### Learn More\n\n - [CWE-1088 - Synchronous Access of Remote Resource without Timeout](https://cwe.mitre.org/data/definitions/1088.html)\n - [Python Best Practices: always use a timeout with the requests library](https://www.codiga.io/blog/python-requests-timeout/)"

},

"helpUri": "https://link/to/documentation",

"id": "python-security/requests-timeout",

"properties": {

"tags": [

"CWE:1088"

]

},

"shortDescription": {

"text": "no timeout was given on call to external resource"

}

}

],

"version": "<tool-version>"

}

}

}

],

"version": "2.1.0"

}
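Before uploading a third-party report, it can help to check it against the guidelines above. The Python sketch below performs a few of those checks on an already parsed SARIF document. It is not an official validator and does not cover the full SARIF 2.1.0 schema; treating a result without any location as a problem is an assumption of this example, and the function name `check_sarif` is hypothetical.

```python
def check_sarif(document):
    """Return a list of problems found in a parsed SARIF document."""
    problems = []
    if document.get("version") != "2.1.0":
        problems.append("version must be 2.1.0")
    for run_index, run in enumerate(document.get("runs", [])):
        driver = run.get("tool", {}).get("driver", {})
        for attribute in ("name", "version"):
            if not driver.get(attribute):
                problems.append(f"runs[{run_index}].tool.driver.{attribute} is missing")
        for result_index, result in enumerate(run.get("results", [])):
            where = f"runs[{run_index}].results[{result_index}]"
            locations = result.get("locations", [])
            if not locations:
                problems.append(f"{where} has no location")
            for location in locations:
                physical = location.get("physicalLocation")
                if physical is None:
                    problems.append(f"{where} has no physicalLocation")
                    continue
                uri = physical.get("artifactLocation", {}).get("uri", "")
                # Absolute paths and full URLs are not relative to the repository root.
                if not uri or uri.startswith("/") or "://" in uri:
                    problems.append(f"{where} uri must be relative to the repository root")
    return problems
```

Load the report with `json.load` and pass the result to `check_sarif`; an empty list means none of these particular checks failed.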

SARIF to CVSS severity mapping

The SARIF format defines four severities: none, note, warning, and error. However, Datadog reports the severity of violations and vulnerabilities using the Common Vulnerability Scoring System (CVSS), which defines five severities: critical, high, medium, low, and none.

When ingesting SARIF files, Datadog maps SARIF severities into CVSS severities using the mapping rules below.

| SARIF severity | CVSS severity |
|---|---|
| Error | Critical |
| Warning | High |
| Note | Medium |
| None | Low |
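The mapping table translates directly into a lookup. The Python sketch below is useful, for example, when predicting how a third-party report will appear after ingestion; the names `SARIF_TO_CVSS` and `cvss_severity` are made up for this example.

```python
# Mapping taken from the table above (keys are SARIF `level` values).
SARIF_TO_CVSS = {
    "error": "critical",
    "warning": "high",
    "note": "medium",
    "none": "low",
}


def cvss_severity(sarif_level):
    """Return the CVSS severity for a SARIF level; raises KeyError if unknown."""
    return SARIF_TO_CVSS[sarif_level.lower()]
```

The result in the SARIF example earlier has `"level": "error"`, which this lookup maps to `critical`.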

Data Retention

Datadog stores findings in accordance with our Data Retention Periods. Datadog does not store or retain customer source code.